OpenAI, which recently drew criticism for introducing ads amid financial pressure, has released figures that somewhat defy expectations.

At least in terms of revenue, the numbers are substantial:

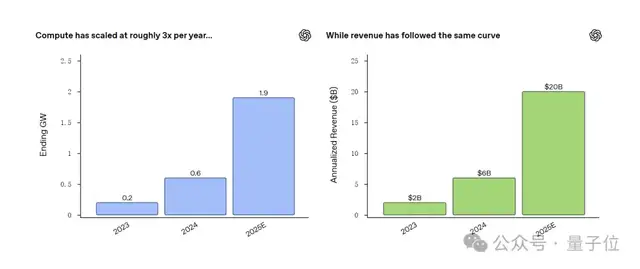

Annual Recurring Revenue (ARR) has surged from $2 billion two years ago to $20 billion.

Moreover, revenue is growing in tandem with computing power: between 2023 and 2025, computing capacity increased by a factor of 9.5, while revenue grew tenfold.

These figures come from an announcement released by OpenAI’s Chief Financial Officer.

Netizens are puzzled: why is OpenAI pursuing the widely criticized strategy of displaying ads?

In response, a clear answer has emerged: although earnings are high, expenditures are equally massive.

Meanwhile, as public discussion of OpenAI’s revenue intensifies, new details have also surfaced about the company’s first hardware product.

An OpenAI executive stated that its inaugural device is likely to launch in the second half of 2026.

Computing Power and Revenue Both Up 10x, But High Earnings Mean Higher Burn Rates…

Regarding the logic connecting computing power and revenue, OpenAI explicitly noted:

Investments in computing power drive leaps in research and model capabilities. Powerful models lead to better products and broader adoption, which in turn drives revenue growth. This revenue then supports the next round of computing investments and innovation. This cycle continues to strengthen.

Based on this, let’s examine OpenAI’s financial situation.

It is well known that OpenAI’s recent decision to “forcefully” introduce ads sparked significant controversy. In response, the company issued a statement to explain its rationale.

We will not delve into the specifics of the announcement, and will highlight only one key piece of logic it reveals: the more computing power OpenAI has, the higher its total revenue, which in turn funds continued technological innovation.

Specifically, between 2023 and 2025, OpenAI’s computing capacity grew by a factor of 9.5, and revenue followed a similar curve, roughly tripling each year and growing tenfold over the three-year period.

- 2023: Computing power 0.2 GW; ARR (Annual Recurring Revenue) $2 billion

- 2024: Computing power 0.6 GW; ARR $6 billion

- 2025: Computing power 1.9 GW; ARR exceeding $20 billion
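The multiples quoted above can be checked directly from the three data points. The short Python sketch below is only an illustration: the variable and function names are made up for this example, and the 2025 ARR is entered as exactly $20 billion even though the announcement says "exceeding $20 billion", so the revenue multiples are lower bounds.

```python
# Figures as listed in the article: compute in GW, ARR in billions of USD.
# Assumption: 2025 ARR is taken as exactly 20.0, although the source says "exceeding".
figures = {
    2023: {"compute_gw": 0.2, "arr_bn": 2.0},
    2024: {"compute_gw": 0.6, "arr_bn": 6.0},
    2025: {"compute_gw": 1.9, "arr_bn": 20.0},
}


def growth_multiple(start: float, end: float) -> float:
    """Return how many times larger `end` is than `start`."""
    return end / start


years = sorted(figures)

# Year-over-year multiples for compute and ARR.
for prev, curr in zip(years, years[1:]):
    compute_x = growth_multiple(figures[prev]["compute_gw"], figures[curr]["compute_gw"])
    arr_x = growth_multiple(figures[prev]["arr_bn"], figures[curr]["arr_bn"])
    print(f"{prev} -> {curr}: compute x{compute_x:.2f}, ARR x{arr_x:.2f}")

# Multiples across the whole 2023-2025 span.
total_compute = growth_multiple(figures[2023]["compute_gw"], figures[2025]["compute_gw"])
total_arr = growth_multiple(figures[2023]["arr_bn"], figures[2025]["arr_bn"])
print(f"2023 -> 2025: compute x{total_compute:.1f}, ARR x{total_arr:.1f}")

# ARR per GW of compute, a rough way to see how closely the two curves track.
arr_per_gw = {year: row["arr_bn"] / row["compute_gw"] for year, row in figures.items()}
for year in years:
    print(f"{year}: about ${arr_per_gw[year]:.1f} billion ARR per GW")
```

On these numbers, ARR per GW stays at roughly $10 billion in every year, which is another way of saying that revenue and computing capacity have scaled almost in lockstep.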

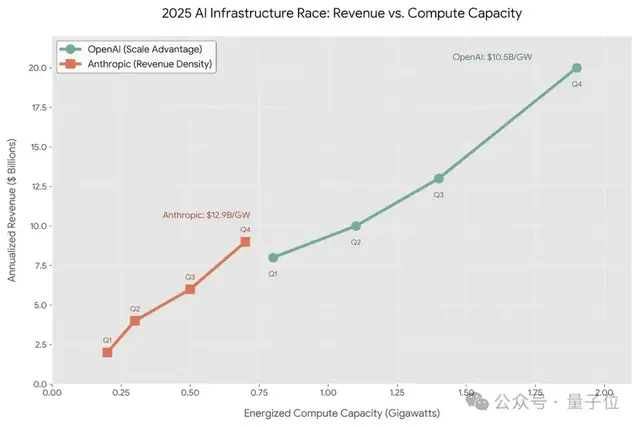

A single company’s figures offer little context on their own, so let’s compare OpenAI with its “old rival” Anthropic, the company behind Claude:

It is evident that OpenAI’s overall scale is significantly larger than that of its competitor.

However, under this flywheel effect, while OpenAI earns more, it also burns through cash even faster…

OpenAI already has cloud service partnerships with Microsoft and Oracle, but with long-term needs in mind it has begun investing heavily in building its own AI data centers.

Since late last year, the company has worked with partners such as NVIDIA, Oracle, and SoftBank to advance GW-scale data center projects on multiple fronts.

Setting aside the cost of building its own facilities, the previously disclosed bills for rented cloud computing power alone show how quickly the money goes.

In October 2025, figures from Epoch AI showed that OpenAI spent a total of $7 billion on computing resources in 2024, with this astronomical bill paid almost entirely by renting cloud computing power from Microsoft.

Note: This figure does not include upfront investments in data centers.
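To put that bill in perspective, it can be set against the 2024 ARR figure listed earlier. The sketch below is a rough, illustrative comparison only: ARR is an annualized run rate rather than revenue actually recognized in the year, so the ratio is indicative at best, and the variable names are invented for this example.

```python
# Both inputs come from the article, in billions of USD.
compute_spend_2024_bn = 7.0  # Epoch AI estimate of 2024 compute spending
arr_2024_bn = 6.0            # 2024 ARR from OpenAI's announcement

# Caveat: ARR is a run rate, not booked revenue, so this is only a rough gauge.
spend_to_arr_ratio = compute_spend_2024_bn / arr_2024_bn
gap_bn = compute_spend_2024_bn - arr_2024_bn

print(f"Compute spend is about {spend_to_arr_ratio:.2f}x the 2024 ARR")
print(f"Gap before any other costs: about ${gap_bn:.1f} billion")
```

On these figures, compute spending alone exceeded the 2024 run rate, before counting salaries, research, or data center construction.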

Therefore, to maintain the flywheel OpenAI envisions, whether $20 billion in ARR is sufficient remains uncertain.

Given the increasingly critical role of computing power, revenue may ultimately be, as netizens suggest:

Merely a natural result of expanded computing capacity.

According to OpenAI’s logic, there is another crucial link between computing power and revenue: powerful models and products.

After all, more applications lead to higher revenues…

Explaining why the company rolled out ads across its platform, OpenAI’s CFO stated:

Business models should expand in tandem with the value created by intelligence.

Initially, ChatGPT was free. However, as users demanded greater capabilities and reliability, subscription services were introduced accordingly.

Simultaneously, to enable developers and enterprises to embed intelligence into their systems via APIs, OpenAI built a platform-based business model.

The introduction of ads is meant to go a step further, supporting users’ decisions in commercial and transactional scenarios.

In the future, beyond ad revenue, the company will continue to drive growth through tiered subscriptions and usage-based API pricing (tied to production workloads).

Furthermore, as agents penetrate fields such as scientific research, drug discovery, energy systems, and financial modeling, new business models will emerge.

This implies that even OpenAI’s upcoming first hardware product serves to reinforce the cycle between revenue and computing power.

First Hardware Product Expected to Launch This Year

New details have emerged about OpenAI’s first hardware device.

Chris Lehane, Chief Global Affairs Officer, recently stated that the company is “on track” to launch its inaugural device in the second half of 2026.

He also teased that more details would be shared later this year.

Multiple leaks suggest that the hardware is likely a screenless AI smart pen, roughly the size of an iPod Shuffle.

Earlier, OpenAI CEO Sam Altman made a peculiar comment:

When the design is “right,” users will want to bite it.

Hmm… based on the description, it does seem, perhaps, possibly like a pen?

Regardless, with the latest information released, we now know that this hardware’s debut is drawing closer.

After all, Altman and Jony Ive previously committed to completing the launch within two years.

When they mentioned the two-year timeline before, outsiders speculated that their hardware development was facing difficulties due to a lack of computing resources.

Now, with the timeline (at least in perception) shortening, it is reasonable to infer that this is also related to computing power.

Indeed, increased revenue → expanded computing power → faster hardware rollout fits perfectly into the cycle.