Amazon Web Services, the undisputed leader in cloud computing, has just served up its own “lobster.”

This lobster is called Amazon Quick.

It is the kind of assistant that “lives” on your computer: once authorized, it connects directly to your local files, calendar, email, and various applications, with no manual file uploads required.

But most importantly, Amazon Quick has achieved ecosystem integration:

Whether you use Slack or Teams, Outlook or Gmail, Salesforce or Asana, it works seamlessly across all of them.

Here is an example.

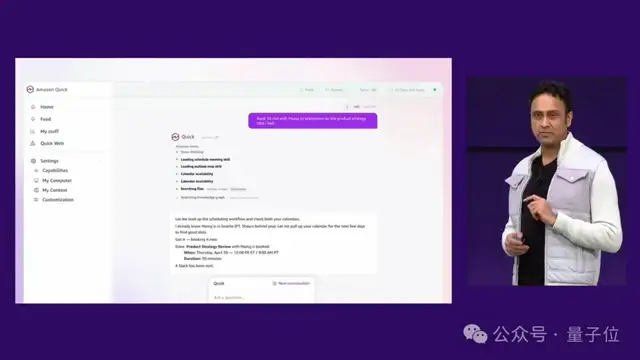

During the event, AWS Vice President Jigar Thakkar simply said to Amazon Quick:

“Schedule a 30-minute meeting with Manuj to brainstorm product strategy.”

Amazon Quick instantly understood the project context, recognized the colleague relationship, automatically checked calendars and time zones, sent out the meeting invitation, and even posted a notification in Slack (a messaging tool similar to DingTalk).

There was no need to switch between tabs or engage in tedious copy-pasting; everything was executed in one smooth flow.
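The calendar-and-time-zone step in that flow can be illustrated with a minimal sketch. It is not how Amazon Quick works internally, which the event did not detail; the function name `first_common_slot`, the date, the UTC offsets, and the busy intervals are all hypothetical values chosen for illustration. The idea is simply to normalize everyone's busy intervals to UTC and take the first gap that is long enough.

```python
from datetime import datetime, timedelta, timezone


def first_common_slot(busy_by_person, window_start, window_end, duration):
    """Return the earliest (start, end) of `duration` that is free for everyone, or None."""
    # Merge everyone's busy intervals into one sorted list, normalized to UTC.
    busy = sorted(
        (start.astimezone(timezone.utc), end.astimezone(timezone.utc))
        for intervals in busy_by_person.values()
        for start, end in intervals
    )
    cursor = window_start.astimezone(timezone.utc)
    limit = window_end.astimezone(timezone.utc)
    for start, end in busy:
        if start - cursor >= duration:
            break  # the gap before this busy block is long enough
        cursor = max(cursor, end)  # otherwise skip past the busy block
    if cursor + duration <= limit:
        return cursor, cursor + duration
    return None


# Hypothetical offsets and calendars, for illustration only.
tz_a = timezone(timedelta(hours=-7))
tz_b = timezone(timedelta(hours=-4))
busy_by_person = {
    "Jigar": [
        (datetime(2026, 5, 4, 9, 0, tzinfo=tz_a), datetime(2026, 5, 4, 9, 30, tzinfo=tz_a)),
        (datetime(2026, 5, 4, 10, 15, tzinfo=tz_a), datetime(2026, 5, 4, 11, 0, tzinfo=tz_a)),
    ],
    "Manuj": [
        (datetime(2026, 5, 4, 12, 30, tzinfo=tz_b), datetime(2026, 5, 4, 13, 0, tzinfo=tz_b)),
    ],
}
slot = first_common_slot(
    busy_by_person,
    window_start=datetime(2026, 5, 4, 9, 0, tzinfo=tz_a),
    window_end=datetime(2026, 5, 4, 17, 0, tzinfo=tz_a),
    duration=timedelta(minutes=30),
)
print(slot)
```

Converting to UTC before comparing is the design choice that matters here: once every interval is on the same clock, finding a shared slot is a single pass over a sorted list.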

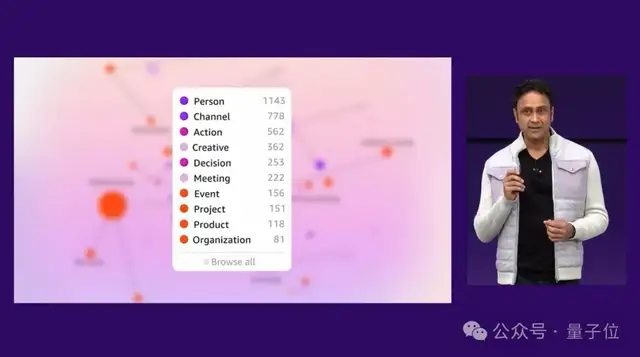

The reason Amazon Quick can do this is that it is backed by a knowledge graph that connects emails, Slack, CRM systems, calendars, local documents, and files.

In simple terms, it weaves people, projects, decisions, and actions into a living map of work.
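To make the “living map” idea concrete, here is a minimal sketch of a work graph. It is purely illustrative and says nothing about how AWS actually builds or stores Quick's knowledge graph; the `WorkGraph` class, the relation labels, and the “Q3 roadmap thread” email are hypothetical. Nodes are `(kind, name)` pairs, and a short breadth-first search finds colleagues who share a project.

```python
from collections import defaultdict


class WorkGraph:
    """Tiny in-memory graph: nodes are (kind, name) pairs, edges carry a relation label."""

    def __init__(self):
        self.edges = defaultdict(set)  # node -> {(relation, neighbor)}

    def link(self, source, relation, target):
        # Store the edge in both directions so either side can be a starting point.
        self.edges[source].add((relation, target))
        self.edges[target].add((relation, source))

    def neighbors(self, node, kind=None):
        # Directly connected nodes, optionally filtered by kind, in sorted order.
        found = {other for _, other in self.edges[node]}
        return sorted(n for n in found if kind is None or n[0] == kind)

    def related(self, node, kind, max_hops=2):
        # Breadth-first search up to max_hops, collecting nodes of the requested kind.
        seen, frontier, hits = {node}, [node], set()
        for _ in range(max_hops):
            next_frontier = []
            for current in frontier:
                for _, other in self.edges[current]:
                    if other not in seen:
                        seen.add(other)
                        next_frontier.append(other)
                        if other[0] == kind:
                            hits.add(other)
            frontier = next_frontier
        return sorted(hits)


graph = WorkGraph()
graph.link(("person", "Jigar"), "works_on", ("project", "product strategy"))
graph.link(("person", "Manuj"), "works_on", ("project", "product strategy"))
graph.link(("email", "Q3 roadmap thread"), "about", ("project", "product strategy"))
graph.link(("person", "Manuj"), "sent", ("email", "Q3 roadmap thread"))

colleagues = graph.related(("person", "Jigar"), "person")
context = graph.neighbors(("project", "product strategy"), kind="email")
print(colleagues, context)
```

With a structure like this, a request such as “meet with Manuj about product strategy” can be resolved to a specific person and the emails tied to the same project, which is the kind of context the demo relied on.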

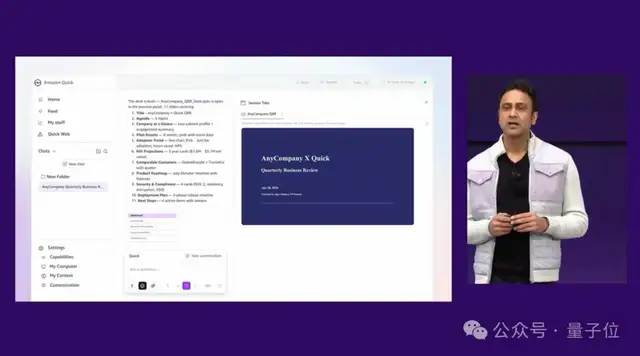

Moreover, Amazon Quick is a proactive Agent.

For instance, if you have a meeting with a client in the afternoon, it will proactively remind you without any action on your part and prepare materials for you:

It conducts company and industry research, pulls customer case studies from internal systems, retrieves product roadmaps from local files, and overlays them with the client team’s briefs, emails, and Slack threads. Finally, it generates a slide deck ready to be taken into the meeting.

It all takes just a few minutes. Truly, only a few minutes in total!

However, to be fair, AWS does not intend for Amazon Quick to merely be an efficiency tool. Based on Jigar Thakkar’s remarks, it aims to solve problems at three levels:

- Individual level: It helps schedule meetings, prepare briefs, and generate documents, emails, spreadsheets, and slide decks;

- Team level: It can create shared Spaces, consolidating workflows scattered across emails, shared drives, and approval systems into a single, continuously updated workspace;

- Enterprise level: When all employees work in this manner, Agents become part of the organizational operating model.

And this is merely the appetizer from today’s “What’s Next with AWS” event.

Altman Shows Support “via the Cloud” Despite Lawsuit

Yes, Sam Altman also made an appearance this time, but “via the cloud”: in a recorded video.

This is because he has been busy with a court battle with Musk in Oakland these past few days…

But what’s even more dramatic is that shortly after OpenAI adjusted its partnership with Microsoft, it immediately turned to Amazon Web Services.

This is the first time AWS has offered OpenAI’s strongest closed-source model (it added two of OpenAI’s open-source models last August), which led AWS CEO Matt Garman to say on stage:

“This is a request our customers have been making of us for a long time.”

Altman also stated in the video:

“Amazon Web Services redefined cloud computing, freeing developers from worrying about infrastructure; today, our collaboration with AWS aims to do the same thing in the Agentic AI era.”

This deep “handshake” between the two parties directly delivered a triple impact (all currently in limited preview).

First, OpenAI’s latest frontier models have officially landed on Amazon Bedrock.

Starting today, customers can directly invoke the latest OpenAI models, including GPT-5.4, on Amazon Bedrock, with access to various other frontier large models (including GPT-5.5) coming in the next few weeks.

This means customers can finally evaluate, deploy, and fine-tune models from OpenAI, Anthropic, Meta, Mistral, and AWS’s own models within a single console.

More importantly, OpenAI models on Bedrock will directly inherit AWS enterprise-grade security controls: IAM-based access management, AWS PrivateLink connectivity, encryption at rest and in transit, and comprehensive logging via CloudTrail.

There is no need to reconfigure infrastructure or learn new security models; data stays within the VPC (Virtual Private Cloud), running seamlessly within the enterprise’s existing security framework.
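As a rough illustration of what IAM-based access management can look like, the sketch below builds a policy document that allows invoking one Bedrock foundation model and nothing else. Treat it as an assumption-laden example: `bedrock:InvokeModel` and `bedrock:InvokeModelWithResponseStream` are existing Bedrock IAM actions, but the article does not say which actions or model identifiers apply to the OpenAI models in limited preview, so `REGION` and `MODEL_ID` are hypothetical placeholders.

```python
import json

# Hypothetical placeholders: the article gives neither a region nor a model identifier.
REGION = "us-east-1"
MODEL_ID = "EXAMPLE-OPENAI-MODEL-ID"


def invoke_only_policy(region, model_id):
    """Build an IAM policy document that allows invoking a single Bedrock foundation model."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "InvokeSingleModel",
                "Effect": "Allow",
                "Action": [
                    "bedrock:InvokeModel",
                    "bedrock:InvokeModelWithResponseStream",
                ],
                # Foundation-model ARNs have no account ID segment.
                "Resource": f"arn:aws:bedrock:{region}::foundation-model/{model_id}",
            }
        ],
    }


policy = invoke_only_policy(REGION, MODEL_ID)
policy_json = json.dumps(policy, indent=2)
print(policy_json)
```

Scoping the `Resource` to a single model ARN, rather than a wildcard, is what lets a team grant access to one model without opening up the rest of the catalog.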

Second, Codex has also landed on Amazon Bedrock.

AWS stated that Codex already has more than 4 million weekly users. After integrating with Bedrock, enterprise teams can access Codex using AWS credentials, handle inference through Bedrock infrastructure, and start using it via the Codex CLI, Codex desktop app, and Visual Studio Code extension.

This echoes Matt Garman’s discussion on software development changes during his presentation.

He noted that AI and Agentic Development have transformed the software development lifecycle, affecting everything from creation and operations to security. In the past, fixing a bug might take weeks; now, some teams see bug reports on social channels and fix and release patches within 20 minutes.

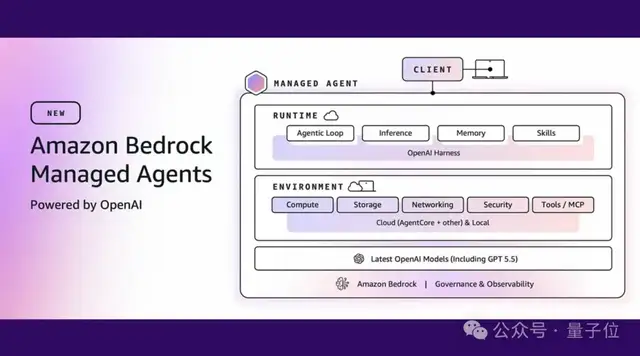

Finally, there is the newly introduced Bedrock Managed Agents, powered by OpenAI.

AWS’s assessment is that the best Agents require not just smart models but also production-grade infrastructure: cross-session memory, skills, identity permissions, compute environments, audit logs, and governance capabilities.

Bedrock Managed Agents is positioned to combine OpenAI’s frontier models and Agent capabilities with AWS’s global infrastructure, security, and service ecosystem, so that customers can deploy production-grade OpenAI Agents faster rather than spending excessive time building infrastructure.

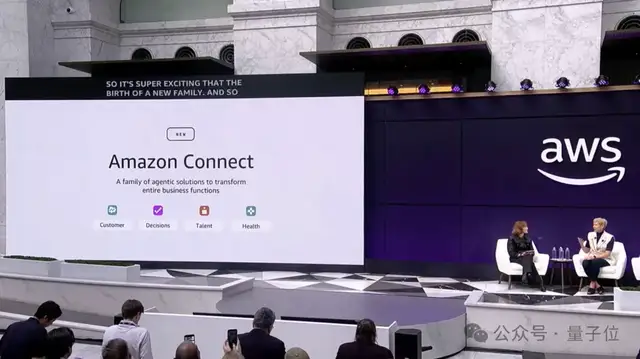

Amazon Connect Has Changed Too

If Quick is a desktop AI assistant for individuals and teams, and Bedrock is the foundation for AI models and Agents, then the changes in Amazon Connect are more like AWS packaging its business experience into a set of actionable Agent applications.

Today, Amazon Connect has expanded from a single product into four Agentic AI solutions:

- Amazon Connect Decisions

- Amazon Connect Talent

- Amazon Connect Customer

- Amazon Connect Health

These target supply chain, recruitment, customer experience, and healthcare, respectively.

Let’s look at Amazon Connect Decisions first.

This is an AI solution for supply chain decision-making. AWS found that supply chain disruptions often take enterprises more than two weeks to resolve, with teams manually collecting data across fragmented systems, spreadsheets, and emails.

Connect Decisions is built on more than 25 specialized supply chain tools, 30 years of Amazon’s operational science, and the Amazon Supply Chain Optimization Technologies (SCOT) foundation model.

The Agent understands business context, sets appropriate forecasts for different products, proactively asks about information that affects forecasts such as promotions or holidays, incorporates these factors into results, and compresses thousands of alerts into a few items requiring human judgment.

Next is Amazon Connect Talent.

It targets large-scale recruitment. Drawing on the experience of Amazon itself, which hired 250,000 seasonal workers during the 2025 peak season, Connect Talent starts with existing job descriptions and uses AI Agents to analyze role requirements and generate interview plans, key competency areas, structured questions, and evaluation criteria. After recruiters approve them, the system can automatically invite candidates to voice interviews at their convenience.

For candidates, this means no more repeatedly coordinating schedules for phone screenings; for recruiters, opening the system the next day reveals not a pile of unprocessed resumes, but anonymous competency scores, full transcripts, and notes.

Amazon Connect Customer is an upgraded version of the original Amazon Connect customer service system.

It continues to serve voice, chat, and digital channels for customer experience but adds configuration capabilities that allow organizations to set up conversational AI experiences in weeks rather than months, without requiring strong technical backgrounds. Business teams can directly design and deploy complex customer processes such as authentication, payment processing, personalized recommendations, and problem resolution.

Finally, there is Amazon Connect Health.

It targets the healthcare sector, focusing on automating administrative tasks such as appointments and documentation, allowing medical staff to spend more time with patients.

In addition, AWS introduced an interesting product philosophy this time: Humorphism (human-centric design).

Colleen Aubrey, Senior Vice President of Applied AI Solutions, explained:

“Past software interfaces were skeuomorphic, bringing real-world folders onto the screen; in the Agent era, we need to align with the dynamics of human interaction. When you are stuck, teammates help; when you are focused, teammates wait. This is what Agents should look like.”

What Is This Press Conference Trying to Say?

Looking at Quick, Bedrock, and Connect together, this “What’s Next with AWS” event essentially communicated one thing:

Agents have become a new enterprise operating system.

Julia White opened the presentation by noting that we are entering the Agentic Era of AI. Although still in its early stages, agents have already begun to profoundly transform the way people work. Matt Garman reinforced his previous assessment of a “world with billions of agents,” asserting that this trajectory has not only maintained momentum but is advancing faster than anticipated, with nearly every industry seeing business changes driven by agent technology.

However, he cautioned that enterprises cannot simply hand over existing processes to agents unchanged.

This parallels the early days of cloud migration. Many companies initially merely lifted their on-premises data center architectures into the cloud before realizing that the true value of the cloud lies in horizontal scaling, serverless computing, rapid experimentation, and entirely new architectural paradigms.

Agents are no different. If AI is simply used to execute old workflows, efficiency may improve, but it will rarely deliver a five- or ten-fold transformation in user experience. The real opportunity lies in redesigning applications and workflows from the ground up.

Thus, the core question posed by this launch event is:

When AI evolves beyond merely answering questions—capable of understanding context, invoking tools, maintaining continuous memory, and proactively executing tasks—how should enterprises fundamentally change their operations?

Amazon Web Services’ answer is clear:

Integrate AI into desktops, model platforms, industry-specific workflows, and finally, into the daily fabric of enterprise operations.

Whether this “lobster” will truly prove its worth depends on actual enterprise implementation. Yet, judging by this launch event alone, the cloud computing giant is no longer content with merely providing the water and electricity of the AI era.

It aims to become the new workstation of the Agent age.