The World’s Number One Is Also Anxious: NVIDIA Undergoes Architectural Overhaul!

It is reported that at the upcoming GTC conference in San Jose this March, Jensen Huang will unveil a brand-new AI inference system.

At its core lies a new chip optimized specifically for inference.

Moreover, the chip’s first major customer has already been secured: OpenAI, which recently completed a massive $110 billion financing round.

More notably, the underlying architecture of this chip does not come from NVIDIA’s self-developed designs but is based on the LPU (Language Processing Unit) architecture created by the former Groq team.

This means: For the first time, NVIDIA is introducing external architectural designs on a large scale within its core AI computing product line.

Behind this decision to “not build it ourselves” lies last year’s industry-shaking deal—

NVIDIA spent approximately $20 billion in an “acqui-hire” (acquisition for talent and technology) of Groq’s core technologies and team.

Now, this inference chip represents the first tangible result of that investment.

It remains a classic Jensen Huang strategy: buy mature solutions, deploy quickly, go straight to battle, and waste not a penny unnecessarily.

Extreme ROI.

It’s LPU, Not GPU

According to The Wall Street Journal, NVIDIA is developing a new inference computing system that will integrate chips designed by Groq and be officially unveiled at the GTC conference.

Meanwhile, hints of this plan have already appeared in OpenAI’s latest financing documents:

Expanding long-term cooperation with NVIDIA, including the use of 3GW of dedicated inference capacity, as well as providing 2GW of training compute power on the Vera Rubin system.

If Jensen Huang does not delay, this “dedicated inference capacity” is highly likely based on this new chip.

As mentioned at the beginning, once implemented, this will mark NVIDIA’s first large-scale introduction of external architectural designs into its core AI computing product line—

Groq’s LPU.

The choice to directly introduce an external architecture rather than relying entirely on self-development is closely tied to time windows.

Over recent months, top-tier clients like OpenAI have been actively seeking more efficient inference alternatives and negotiating with other chip companies.

With inference demand growing rapidly, NVIDIA needed to provide targeted solutions faster.

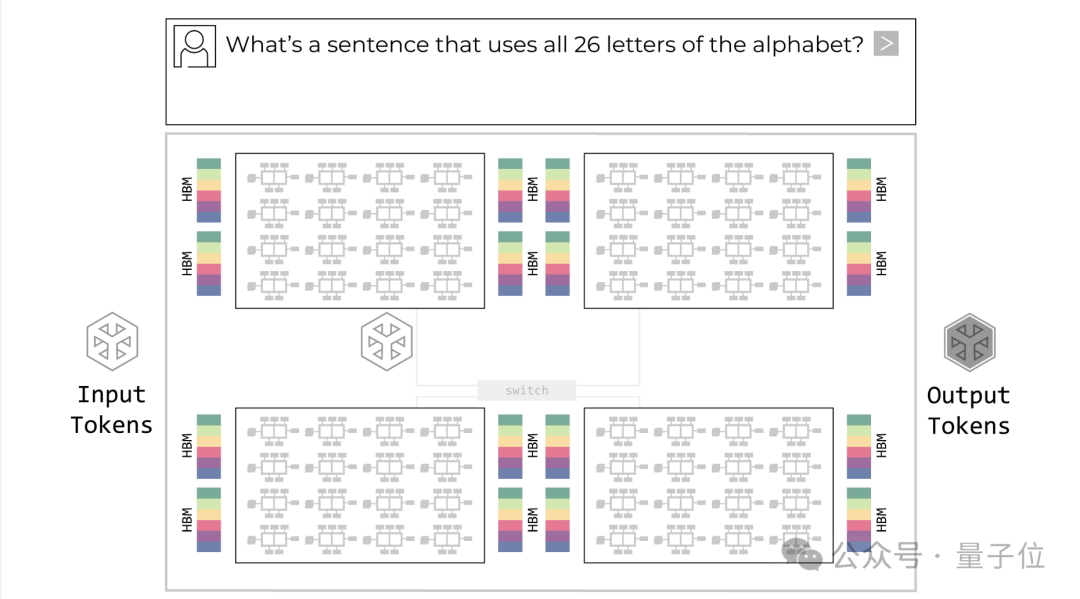

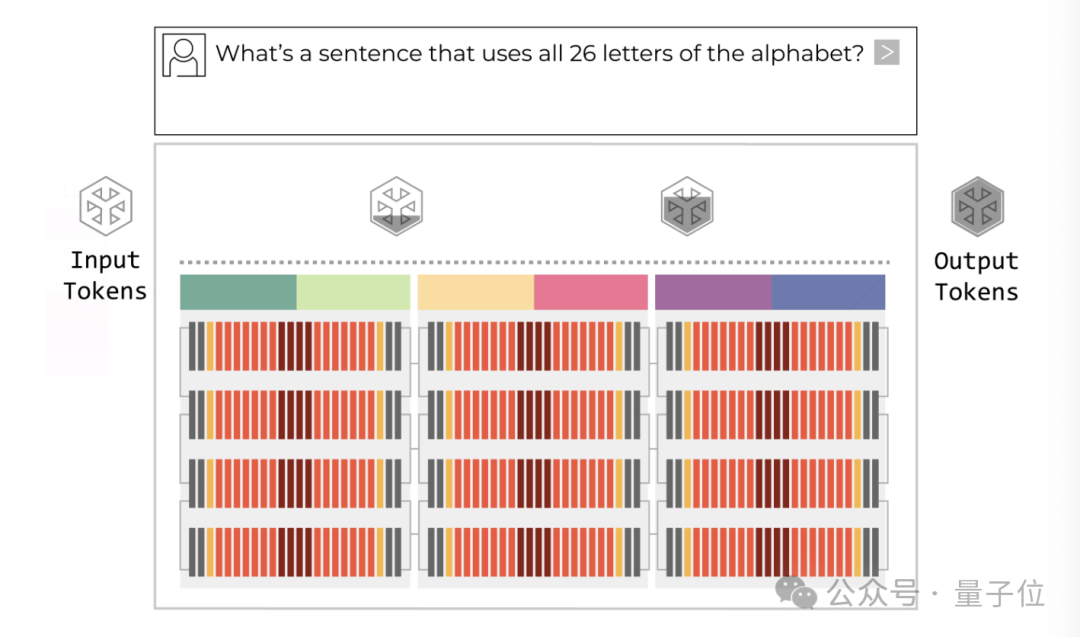

The reason for using LPU instead of GPU lies in the adaptation to inference scenarios.

GPUs typically store large model parameters in external HBM (High Bandwidth Memory), requiring frequent data movement between compute cores and memory. During training, this movement cost is amortized through massive parallelism.

However, during inference—especially the decode phase—the batch size becomes smaller and latency sensitivity increases. System bottlenecks stem more from data movement than from compute power itself.

Groq’s LPU architecture changes this logic—

It employs high-density on-chip SRAM, keeping data “close to the compute units,” significantly shortening data paths. This reduces latency and energy consumption from an architectural perspective, making it better suited for low-latency inference scenarios. Theoretically, its maximum speed can be 100 times faster than GPUs.

As Agent applications become increasingly prevalent, the AI computing structure is shifting from “training-first” to “inference-first.”

Inference is no longer just a supplementary step after training; it has become a larger-scale, higher-frequency, long-term workload.

If NVIDIA officially incorporates LPU into its core product line, this will not only be the launch of a new chip but also a response to the shift in computing priorities.

This also explains why NVIDIA completed the integration of Groq’s core technologies and team for approximately $20 billion last year, bringing in key members such as founder Jonathan Ross (the father of Google TPU).

In short, the inference market is reshaping the computing landscape, and NVIDIA must secure its position.

NVIDIA’s Inference Chips Face Threats

Over the past year, with the explosion of Agent applications, the structure of computing demand has changed significantly: the market focus has shifted from training to inference.

Training remains important, but inference involves higher call frequencies, larger scales, and longer durations, making cost a core variable.

Some AI service providers have begun separating training and inference deployments—continuing to use NVIDIA GPUs for training while turning to more cost-effective dedicated chips for inference.

For example, last month, OpenAI signed a multi-billion-dollar computing cooperation agreement with Cerebras.

Cerebras specializes in inference-optimized chips. Its CEO, Andrew Feldman, publicly stated that their chips are faster than NVIDIA GPUs in specific scenarios.

Anthropic relies more on self-developed chips from Amazon Web Services and Google Cloud to support model operations rather than relying entirely on NVIDIA solutions.

Meta has also reached a large-scale chip order cooperation with AMD, jointly optimizing GPU architectures for inference tasks to reduce dependence on NVIDIA.

In the domestic market, model companies are also beginning to turn toward local computing solutions.

In recent news, DeepSeek even bypassed NVIDIA, granting exclusive early access to DeepSeek V4 to Huawei, and has already completed model migration on the Ascend platform.

Another rumor involves Cambricon. Regardless of which rumor proves true, neither is favorable for NVIDIA.

According to Bernstein Research predictions, by 2026, Huawei’s market share in China’s AI chips could reach 50%, while NVIDIA’s share may drop to single digits.

Meanwhile, NVIDIA’s competitors are strengthening their layouts for inference-specific architectures.

On one hand, Google, which has long been laying out TPU infrastructure, and Amazon, which secured computing ecosystem cooperation rights in OpenAI’s latest financing plan, are promoting the implementation of self-developed chips in high-frequency inference scenarios. Amazon will focus on activating its own Trainium chips to support Agent applications.

On the other hand, domestic players such as ByteDance, Alibaba, and Baidu have also begun manufacturing chips themselves.

Thus, the trend is clear: inference has become the main battlefield, and customers are beginning to diversify risks.

So, why aren’t GPUs suitable for inference?

Because the training phase pursues “massive parallelism” and overall throughput, while the inference phase seeks “single-token speed” and stable response times.

Specifically, inference is divided into two stages: pre-fill (processing user input) and decode (generating output token by token).

What truly determines user experience is the second step—low-latency generation.

At this point, the system bottleneck lies not in compute power but in frequent access and data movement. Although GPU architecture is powerful, it was designed for parallelism; LPU adjusts storage and compute paths to better fit inference workloads.

For these reasons, The Washington Post even commented that this marks the first time NVIDIA has faced an architectural challenge at the core hardware level since the AI wave began.

Although NVIDIA still holds over 90% of the global GPU market share, and Hopper, Blackwell, and the upcoming Rubin series remain the mainstays for training, NVIDIA must respond directly to the surge in inference demand.

This LPU chip is their answer.

One More Thing

In addition to this mysterious chip, Jensen Huang previously announced:

The GTC conference this year will also unveil a new product line “unseen in the world.”

The outside world generally speculates that this includes next-generation GPUs from the Rubin series or completely new architecture chips from the Feynman series.

Or perhaps more specifically, delayed consumer-grade graphics cards???

References

- Nvidia plans new chip to speed AI processing, shake up computing market — www.wsj.com

- NVIDIA Kicks Off the Next Generation of AI With Rubin — Six New Chips, One Incredible AI Supercomputer — NVIDIA today kickstarted the next generation of AI with the launch of the NVIDIA Rubin platform, comprising six new chips designed to deliver one incredible AI supercomputer.

- Nvidia’s Blackwell Ultra and Vera Rubin AI Chips — Electronics