OpenAI is leaking information like a sieve. News about the latest “Spud” (Potato) model has arrived once again.

This “potato” is none other than the highly anticipated GPT-6.

According to leaks, this “potato” is fully cooked and will be released on April 14.

Insiders state that this model is entirely focused on achieving AGI:

Performance is reportedly up 40%, outperforming GPT-5.4 across coding, reasoning, and agent tasks.

It features native multimodality, using a single architecture to handle text, audio, images, and video.

It also boasts an enormous context window of 2 million tokens.

Its ultimate form is even more critical:

GPT-6 will evolve into a super engine responsible for fusing ChatGPT, Codex, and the Atlas browser into a unified agent.

Yes, that is the desktop-level “super app” OpenAI has been talking about for a long time.

Most eye-catching of all is how OpenAI internally positions this model.

Employees reportedly describe it as the “last mile” to AGI, and the company is cutting everything else to bet on it.

Is GPT-6 Coming?

The source bringing us this insider information is Strawberry Bro @iruletheworldmo.

This account carries real clout: big names like Peter (father of the Lobster), Gavin Baker, and Jim Fan all follow it on 𝕏.

Strawberry Bro excitedly said that leaks from inside OpenAI have been rampant lately, giving him plenty of material.

First, the reason OpenAI is cutting all side projects is to pour all resources into GPT-6.

Greg Brockman previously stated in an interview that progress toward AGI has reached approximately 80%.

In the view of OpenAI employees, GPT-6 represents the remaining 20%.

How so? Let’s look at the data.

As a natively multimodal model, it has reportedly made a leap across benchmark tests.

It is reportedly 40% stronger than GPT-5.4 in coding, reasoning, and agent tasks.

The context window has reached an astonishing 2 million tokens, double that of GPT-5.4 and Opus 4.6.

On pricing, it continues OpenAI’s “excellent tradition”: $2.50 per million input tokens and $12 per million output tokens, which makes it not much more expensive than GPT-5.4.

If compared to Claude, this would mean possessing Mythos-level intelligence while charging Sonnet-tier prices.
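To put the rumored pricing in concrete terms, here is a minimal Python sketch that estimates the cost of a single request. The rates and the 2-million-token window are the leaked figures quoted above, not confirmed numbers. The helper name `estimate_cost_usd` is hypothetical, and the idea that input and output tokens share one context window is an assumption made for illustration.

```python
from decimal import Decimal

# Rumored figures quoted in the article; none are confirmed by OpenAI.
INPUT_USD_PER_MILLION = Decimal("2.50")
OUTPUT_USD_PER_MILLION = Decimal("12")
CONTEXT_WINDOW_TOKENS = 2_000_000
TOKENS_PER_MILLION = Decimal(1_000_000)


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> Decimal:
    """Hypothetical helper: cost of one request at the rumored rates."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    # Assumption: input and output tokens share the same context window.
    if input_tokens + output_tokens > CONTEXT_WINDOW_TOKENS:
        raise ValueError("request exceeds the rumored 2M-token context window")
    input_cost = INPUT_USD_PER_MILLION * input_tokens / TOKENS_PER_MILLION
    output_cost = OUTPUT_USD_PER_MILLION * output_tokens / TOKENS_PER_MILLION
    return input_cost + output_cost


if __name__ == "__main__":
    # Half the rumored window as input, plus 10,000 output tokens.
    print(estimate_cost_usd(1_000_000, 10_000))
```

At these assumed rates, a request with 1,000,000 input tokens and 10,000 output tokens comes to $2.50 + $0.12 = $2.62.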

It is said that GPT-6’s pre-training was completed on March 17, with post-training and safety work also finished, ready for launch at any time.

The internally set release date is April 14.

As news leaked out, more internal details about OpenAI and GPT-6 emerged.

Since December 2025, OpenAI has been in a “code red” state.

Recently, Brockman admitted on a podcast that OpenAI had previously focused too much on leaderboard rankings, only to be severely beaten by Anthropic in the coding sector, losing many users.

The explosive popularity of AI-based coding products like Claude Code, Cowork, and OpenClaw made OpenAI suddenly realize, “It turns out relying solely on text can truly lead to AGI.”

This forced Altman into a corner, compelling him to cut almost all non-core product lines.

The most significant project cut was Sora, which rose rapidly but ended abruptly. This indirectly caused the rumored $1 billion contract between OpenAI and Disney to fall through completely.

However, that is not all.

New reports indicate that Altman has stopped pretending; he is now focusing entirely on data centers, pushing safety concerns aside for later!

Currently, the OpenAI safety team has been placed under the CRO (Chief Risk Officer).

Simultaneously, OpenAI’s product department has changed its name to AGI Deployment, highlighting its ambition.

After these major moves, Altman has finally brewed up a (perhaps) ultimate weapon capable of responding to Anthropic: GPT-6.

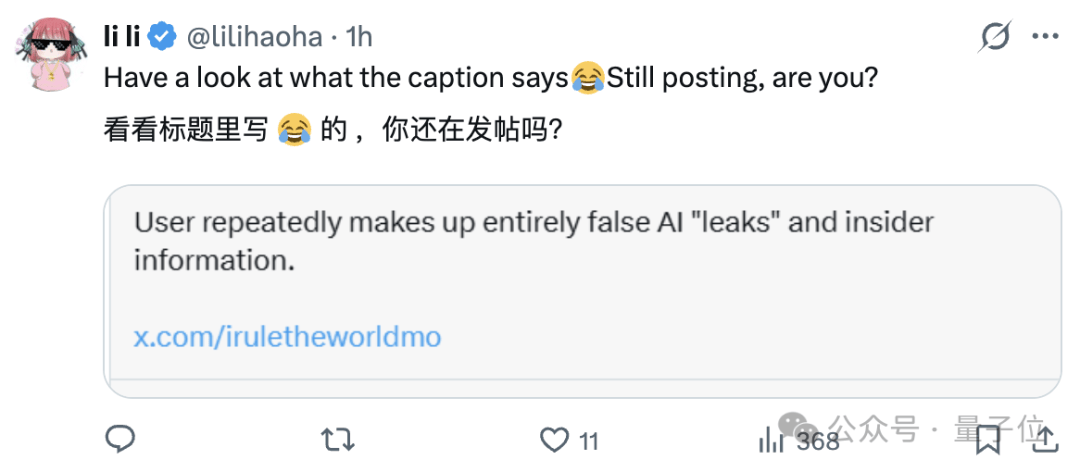

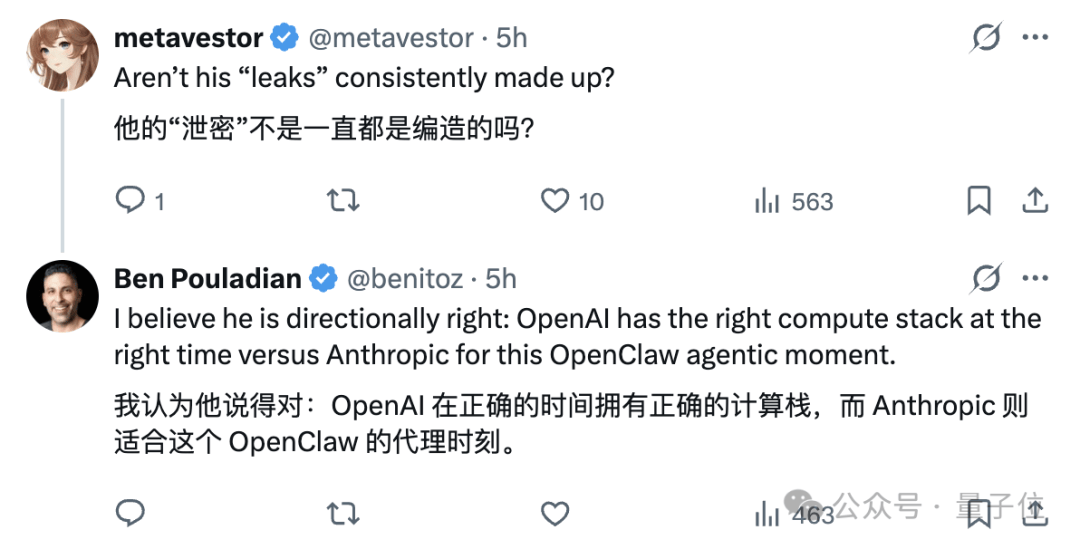

However, some commenters reminded us that Strawberry Bro’s leaks are not necessarily entirely accurate.

Others stepped up to support the claims, stating that while specific details may be questionable, the general direction is likely correct.

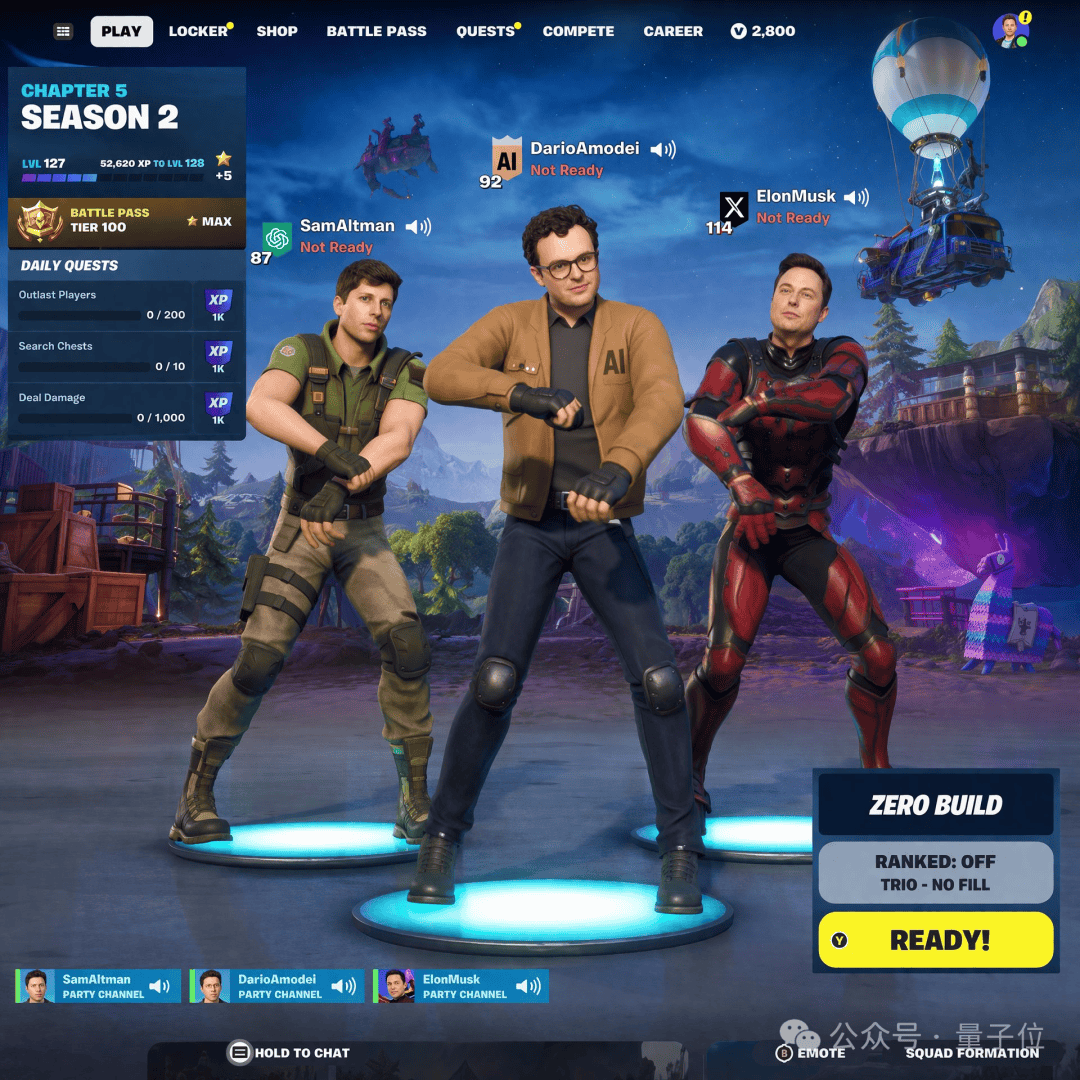

The Real GPT Image 2

While there is no confirmed date for GPT-6, GPT-Image 2 is truly coming.

It appeared briefly in the Arena yesterday, causing quite a stir upon its debut.

Why? Look at these images to understand:

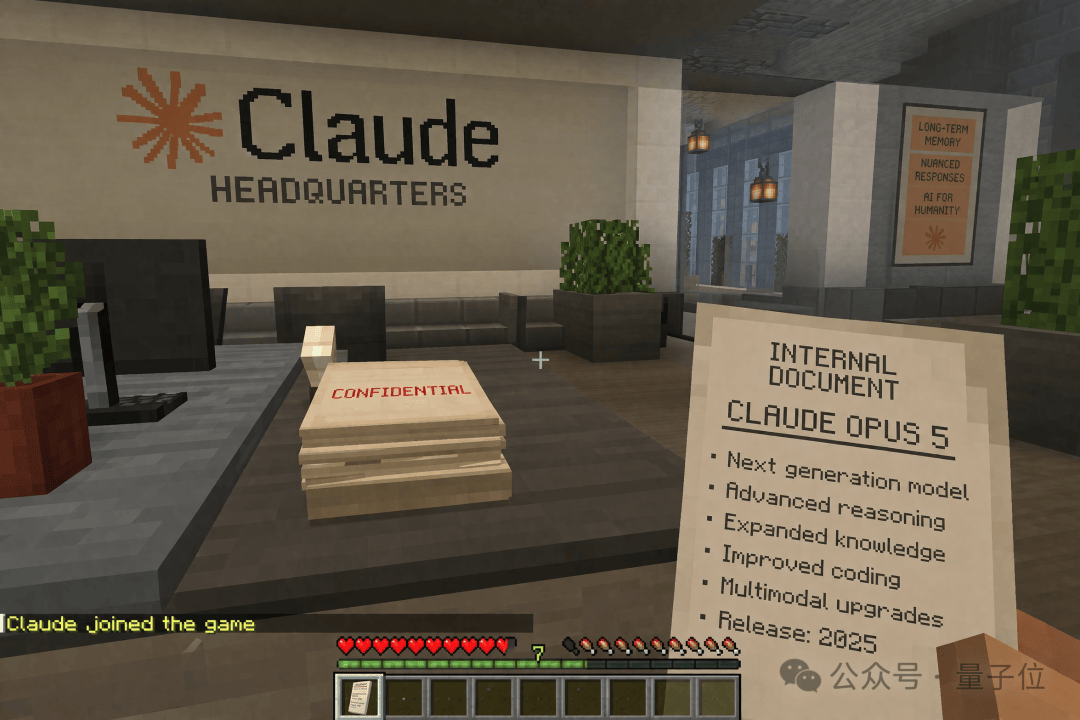

Friends, I did not post the wrong image, nor am I slacking off playing Minecraft.

This really was generated by users with GPT.

It can replicate almost any game 1:1, with none of that blurry AI look; it is impossible to tell real from fake.

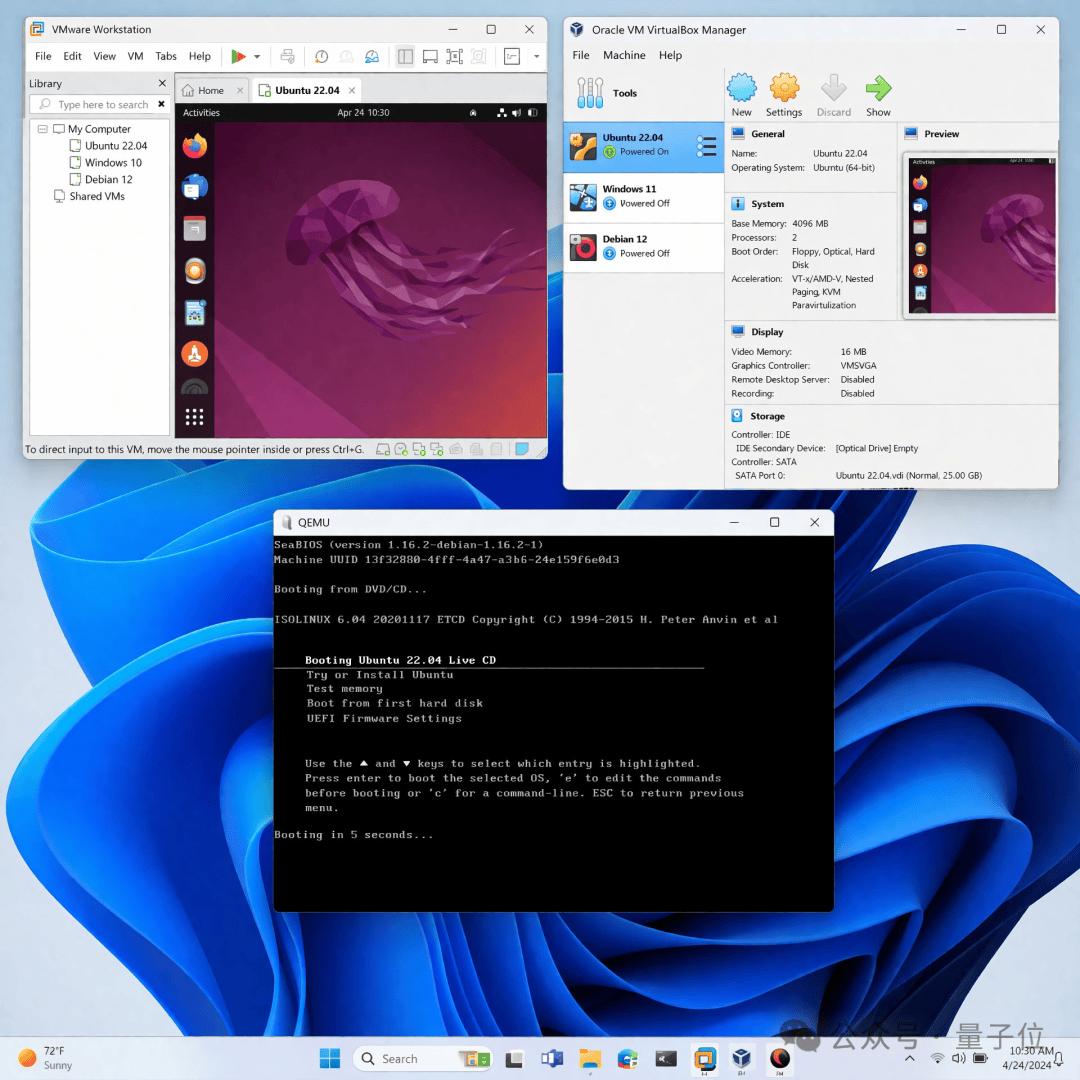

Then there is this Windows desktop screenshot. I was stunned for a moment when I saw it, wondering why someone would post a simple screenshot.

Only then did I realize, oh, this was generated by GPT-Image 2.

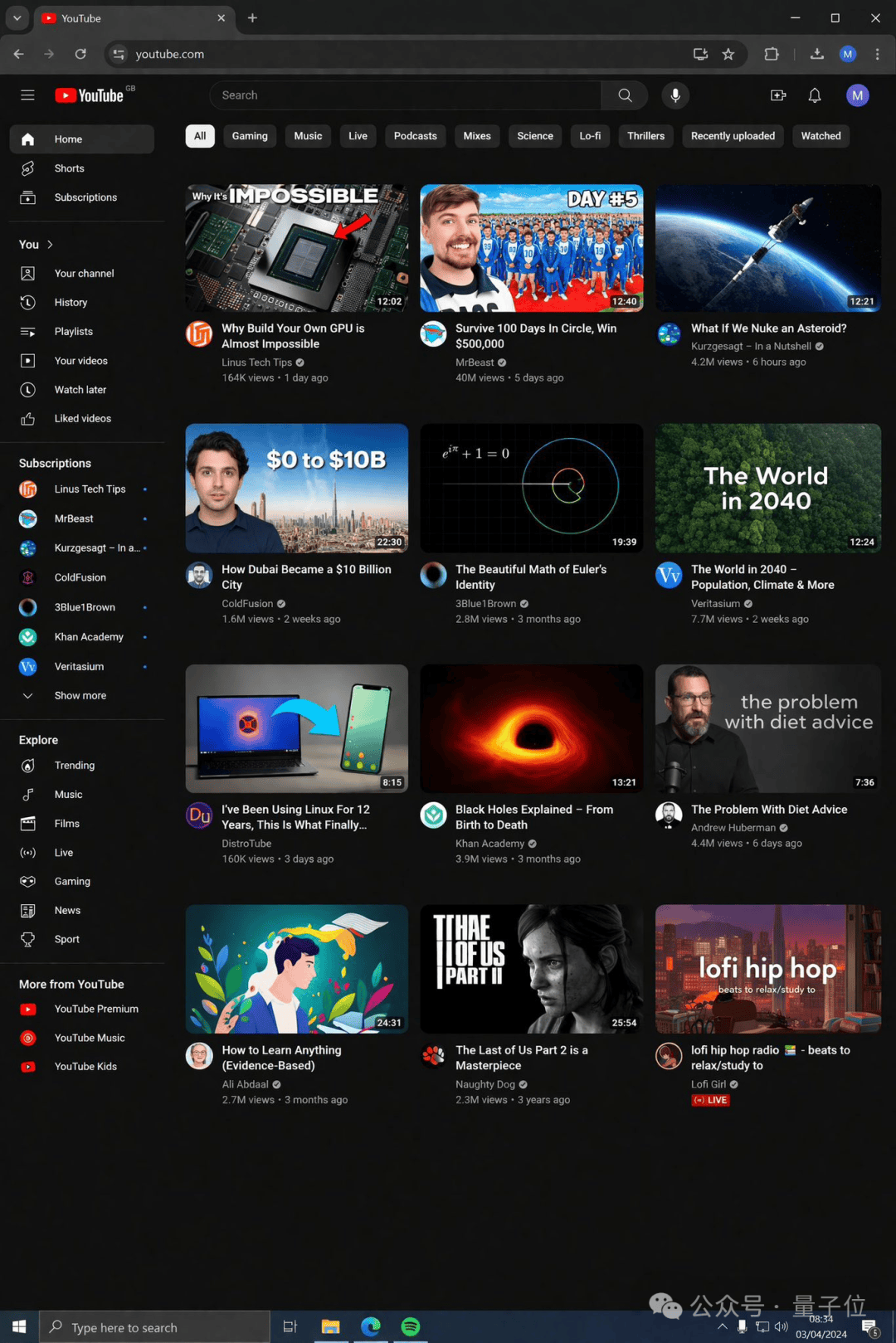

With clearer prompts, GPT-Image 2 can reproduce the YouTube homepage outright.

Its world knowledge has also improved significantly and is now on par with Nano Banana Pro.

The aesthetics are quite good, avoiding the typical bright blue sci-fi AI color tone inherent in many generative image models.

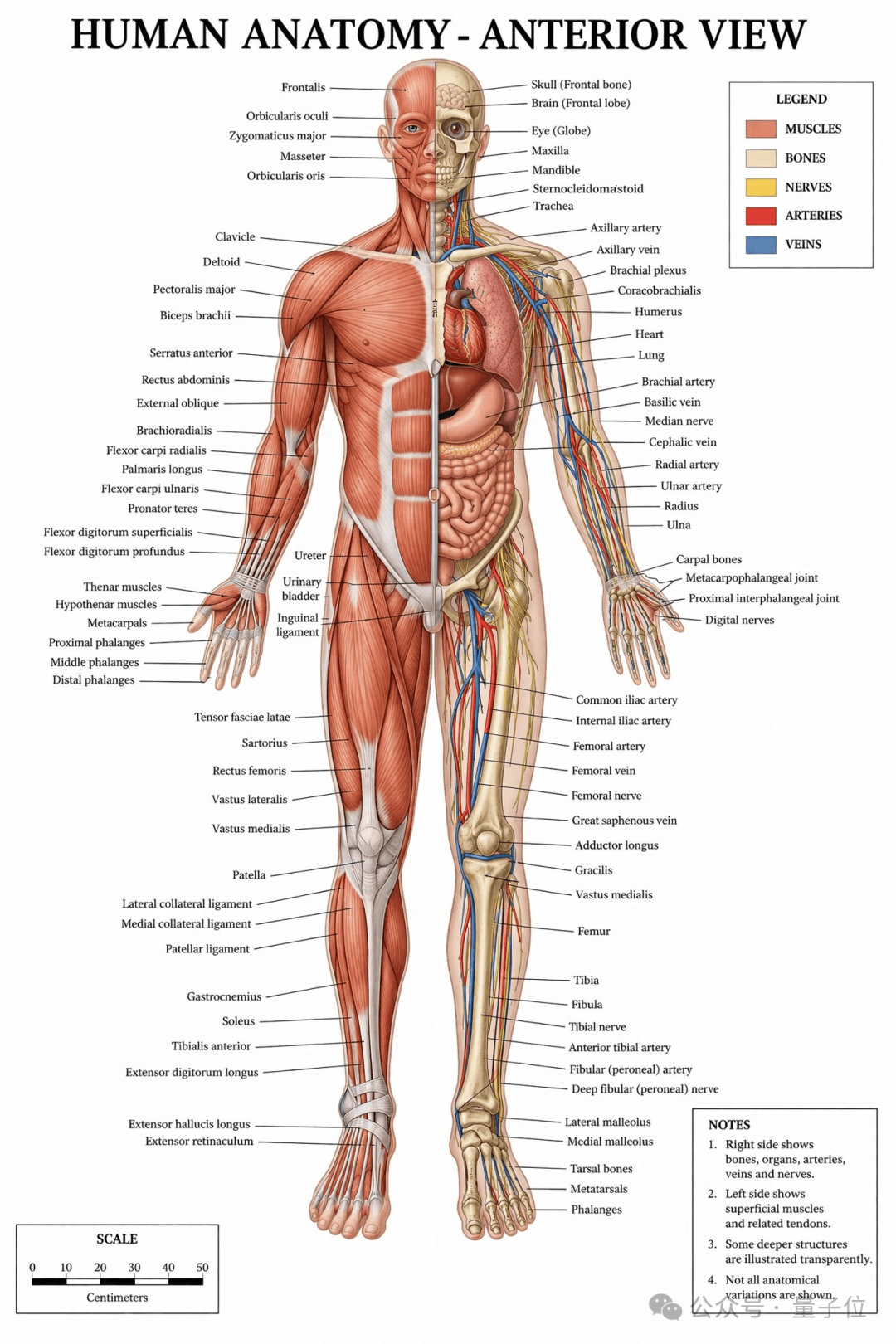

When drawing anatomical diagrams, the results look like illustrations from a textbook.

Realism has also improved significantly.

Finally, that ugly yellow filter is gone, and colors appear much more natural.

I’m looking forward to it. If the performance is truly this stable, it will undoubtedly become the most practical image generation model to date.

Unfortunately, this model was removed from the Arena yesterday, so it cannot be tested for now.

Compute Power is the Real Mastermind

All in all, as the AI race has progressed to this point, every model ultimately points to one thing: compute power.

And its importance has become fully apparent.

A series of recent events all hint at compute constraints in the background.

Anthropic’s decision to cut off the authorization channel for OpenClaw subscribers was not only meant to pave the way for its own KARIOS. It may also have been forced: the company simply could not hold on any longer.

Anthropic probably didn’t expect such high demand for this tool.

This may also be why tokens have been burning so fast lately; OpenClaw has “taken the blame,” and it has given me token anxiety too.

Likewise, cutting Sora and tearing up the Disney contract were moves OpenAI was forced into to free up compute for its new model.

Last year, when people discussed data centers, it seemed like a distant issue, similar to environmental protection.

Now, the shock from the infrastructure layer has propagated down the industry chain to the application side.

This race is truly becoming more exciting.

Under the constraints of scarce compute power, even a CEO skilled at fundraising like Altman cannot leave OpenAI with any fallback options.

The competition now comes down to who dares to go all-in and bet on that single direction leading to the future.

References

x.com/iruletheworldmo/status/2040508733944668408

x.com/benitoz/status/2040524473233985565

x.com/marmaduke091/status/2040338311873515597