Just days into the new year, OpenAI is facing another wave of personnel turbulence: its leading figure in reasoning models has resigned!

Jerry Tworek, OpenAI’s Vice President of Research and a key architect behind o3, o1, GPT-4, ChatGPT, and Codex (OpenAI’s first AI coding model), has announced a difficult decision:

To leave OpenAI to explore research areas that are difficult to pursue within the company.

Curious about what he means by “research areas that are difficult to pursue at OpenAI”?

He said that during his nearly seven years at OpenAI, he had experienced many wonderful and crazy moments, mostly wonderful ones.

(Is there a “seven-year itch” even for tech giants?)

In the comments under his post, many current OpenAI employees recalled how much they had enjoyed working with Jerry.

Wishing him a bright future ahead.

Among the wider public, the comments centered mainly on “gratitude” and “admiration.”

Still, some netizens lamented that OpenAI keeps losing key talent.

But the comments section of this particular post was even funnier.

Many people know Jerry from his occasional interviews and talks, but may not have a complete picture of him.

So let’s take a proper, well-rounded look at this reasoning-model expert, bid him farewell, and wish him well on his next journey.

The Top Figure in OpenAI’s Reasoning Models

Jerry Tworek was born and raised in Poland and holds a master’s degree in mathematics from the University of Warsaw, which gave him a strong theoretical and quantitative foundation.

He did not enter the AI field immediately after graduation.

For the next five years, he worked in quantitative research in Amsterdam, focusing mainly on quantitative trading strategies for futures markets.

During this period, Jerry applied optimization theory and techniques to extract signals from noisy datasets and develop these strategies, work that eventually led him to start researching reinforcement learning.

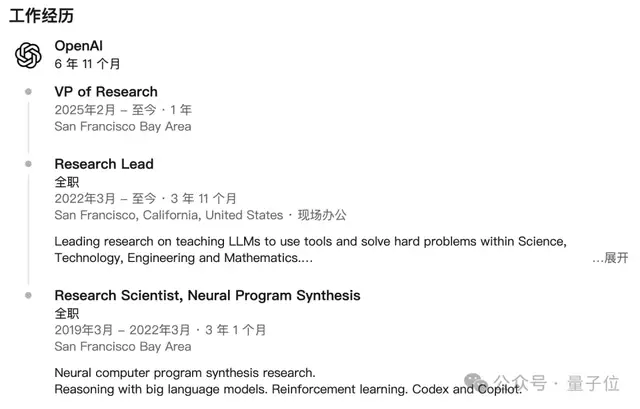

In 2019, Jerry joined OpenAI as a Research Scientist, focusing on neural program synthesis, reinforcement learning, and other areas.

At the time, GPT-2 had just been released, and OpenAI was still primarily a nonprofit research lab: small and relatively little known.

Early in his tenure, he worked on the robotics project “Solving Rubik’s Cube with a Robot Hand” and presented it at the Deep Reinforcement Learning Workshop at NeurIPS 2019.

Jerry was also among the earliest researchers to pursue the “large-scale pre-training + compute scaling” approach. Even before the ChatGPT era, he was already deeply interested in model reasoning.

After GPT-3 was released in 2020, he began evaluating GPT-3 and training it to solve reasoning and logic problems.

Ever since, Jerry has repeatedly stressed in public talks and interviews the importance of “reasoning” over mere “pattern-matching generation.” He tends to view large models as systems that can “learn thinking processes” through training, rather than as black-box text predictors.

Between 2019 and 2022, he researched neural program synthesis and large-model reasoning at OpenAI, including work on code models such as Codex (which powered GitHub Copilot), and used reinforcement learning to improve reasoning and decision-making on complex tasks.

In 2022, Jerry became a Research Lead at OpenAI, heading teams that studied “how large language models can use tools to solve difficult STEM problems,” work that included plugins and Code Interpreter.

After ChatGPT launched, he gradually became more widely known, chiefly as one of the key contributors to ChatGPT and the GPT series of models.

Jerry served as Chief Researcher for GPT-4, led the research and development of o1, OpenAI’s first reasoning model, and has been publicly introduced as the core person in charge of GPT-5’s reasoning mechanisms and long-thinking capabilities.

In various interviews and podcasts, he has also laid out in detail how GPT-5 thinks and how reasoning models have evolved.

In 2025, Jerry was promoted to Vice President of Research at OpenAI.

On January 6, 2026, Jerry announced his resignation from OpenAI without disclosing his specific next steps.

Below is the full text of Jerry’s farewell post.

What Did Jerry Write in His Farewell Post?

Hi everyone, I have made the difficult decision to leave OpenAI.

I have worked here for almost seven years and experienced many wonderful and crazy moments, mostly wonderful ones.

I truly enjoyed my time here. I worked on early reinforcement learning for robotics and trained the world’s first coding model, which kicked off the large language model programming revolution.

Before DeepMind released the Chinchilla model, I discovered what later became known as the “Chinchilla Scaling Law.”

I helped develop GPT-4 and ChatGPT. More recently, I assembled a team to establish a new paradigm for scaling training and inference compute, now commonly known as reasoning models.

I have made many friends, spent countless nights in the office, witnessed numerous technical breakthroughs, and laughed and worried alongside many people I consider close partners.

I had the privilege of building and growing what I believe is the strongest machine learning team in the world.

This has been a wonderful experience. Although I am leaving to explore research areas that are difficult to pursue at OpenAI, it remains a special organization, unique in the world, and has already secured a permanent place in human history.

I am deeply grateful for the trust that OpenAI and all of you have placed in me over the years. Moments like this always feel a little unnatural, but seen in a positive light, they can become catalysts for great things.

Together, we made machine intelligence more useful and reliable. I am a loyal user of ChatGPT’s reasoning models.

Thank you again, many times over.

Take care, dear Strawberries.

Jerry

One More Thing

Jerry’s farewell post was supposed to be the end of this article.

But I stumbled upon a comment that seemed funny at first glance but made some sense upon closer reflection:

Come to think of it, people who leave OpenAI really do always post farewell messages. Is this an unwritten rule, or part of the company culture?

Curious.jpg

References

1. Jerry Tworek’s farewell post on X: x.com/MillionInt/status/2008237251751534622