Edited by the Editorial Team, sourced from MEET2026

Emergence is the phenomenon that AI strategists are most eager to witness on today’s AI battlefield.

Ever since Scaling Laws delivered astonishing gains in model capability, nearly every model developer has been swept up in an endless wave of FOMO (Fear Of Missing Out). No one dares to stop.

I believe the most captivating aspect of large models lies in their non-linear changes, which bring immense uncertainty. Yet once capability emergence occurs, the results will far exceed imagination.

Sun Maosong, Executive Vice Dean of the Institute for AI at Tsinghua University and Foreign Member of the European Academy of Sciences, expressed these sentiments at this website’s MEET2026 Intelligent Future Conference.

As long as more computing power can be stacked up and parameter counts increased, the cash burn cannot stop.

However, with the marginal costs of scaling becoming increasingly high, what if we eventually discover this is a dead end and all investments are wasted?

Sun Maosong’s advice is to “embrace the broad” but, even more so, to “master the detailed.”

From an industry perspective, a few exceptionally well-resourced teams can keep tracking the international frontier in the “embrace the broad” direction. The vast majority of AI companies, however, should focus their primary efforts on “mastering the detailed.”

To fully present Sun Maosong’s thoughts, this website has edited and organized his speech content without altering its original meaning, hoping to provide new perspectives and insights.

MEET2026 Intelligent Future Conference is an industry summit hosted by this website, featuring discussions among nearly 30 industry representatives. The offline audience numbered nearly 1,500, while online live viewership exceeded 3.5 million, garnering widespread attention and coverage from mainstream media.

Key Takeaways

- As model size and data scale continue to grow, capability emergence may occur. This high degree of non-linear change brings uncertainty, which is precisely what makes large models most fascinating. It is expected that in the coming years, even humanity’s most difficult exams with standard answers may not stump machines.

- The fundamental challenge currently facing large models and embodied intelligence lies in how to clarify the relationship between “speech,” “knowledge,” and “action,” enabling machines to truly achieve “unity of knowledge and action.” Solving this problem is extremely difficult and involves major innovations in AI theory and foundational methods.

- How far Scaling Laws can go remains highly uncertain. Any information system typically tends toward saturation at a certain stage. However, the emergence of new phenomena can break through this saturation. Therefore, China still needs a small number of top-tier teams to closely follow global frontier developments and explore the limits of scaling.

- It is almost impossible for humanoid robots to enter general open environments within the next few years to autonomously perform relatively complex tasks. Instead, efforts should be focused on achieving “spark-to-prairie-fire” style implementation of AI applications across as many specific real-world scenarios or tasks as possible. This is entirely feasible (though the robots do not necessarily need to be humanoid) and should be the primary focus for the vast majority of enterprises.

Below is the full text of Sun Maosong’s speech:

Eight Years of Rapid Progress

My talk is titled “Generative AI and Large Models: Frontier Trends, Core Challenges, and Development Paths.” Frankly, this topic is difficult to address, as everyone worldwide is discussing it; I will share a few preliminary thoughts of my own.

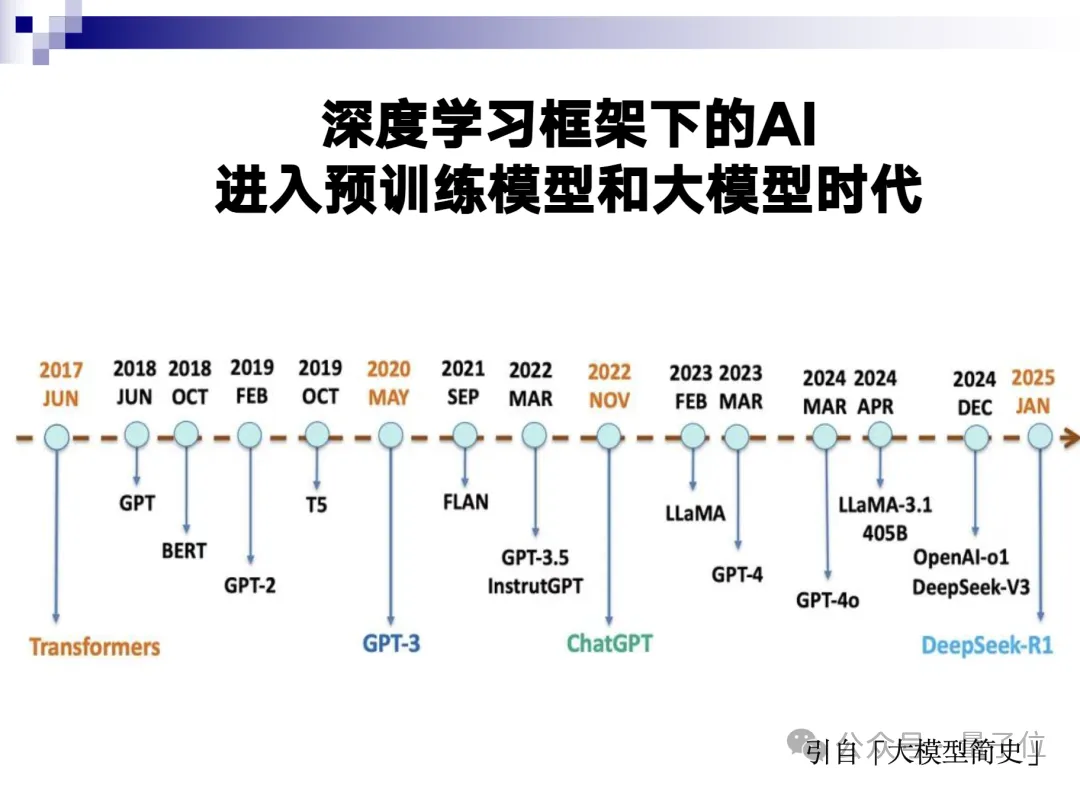

AI based on deep learning entered the era of pre-trained models and large models around 2017. Only eight years have passed since then.

There are several key milestones in these eight years:

- GPT-3 was released approximately five years ago;

- ChatGPT has been out for about three years;

- DeepSeek emerged just one year ago.

We have traversed multiple stages in these eight years, echoing the ancient saying: “If you can renew yourself today, do so every day, and keep renewing.” This basically describes the normal state of large model development in recent years.

Especially in recent years, through long chain-of-thought reasoning, the ability of large models to solve complex tasks has risen sharply, presenting a scene where thousands of sails compete.

Why are we so obsessed with large models? Their most important characteristic is: as models and data volumes grow larger, capability emergence generally occurs—a phenomenon absent in previous models.

Once capability emergence happens, it becomes a non-linear change; you never know when or why it suddenly takes off.

If an endeavor does not produce performance emergence, it may remain unremarkable. But once emergence occurs, it can leave competitors far behind. Yet, whether this will happen cannot be predicted in advance. This is the most charming yet perplexing aspect of large models.

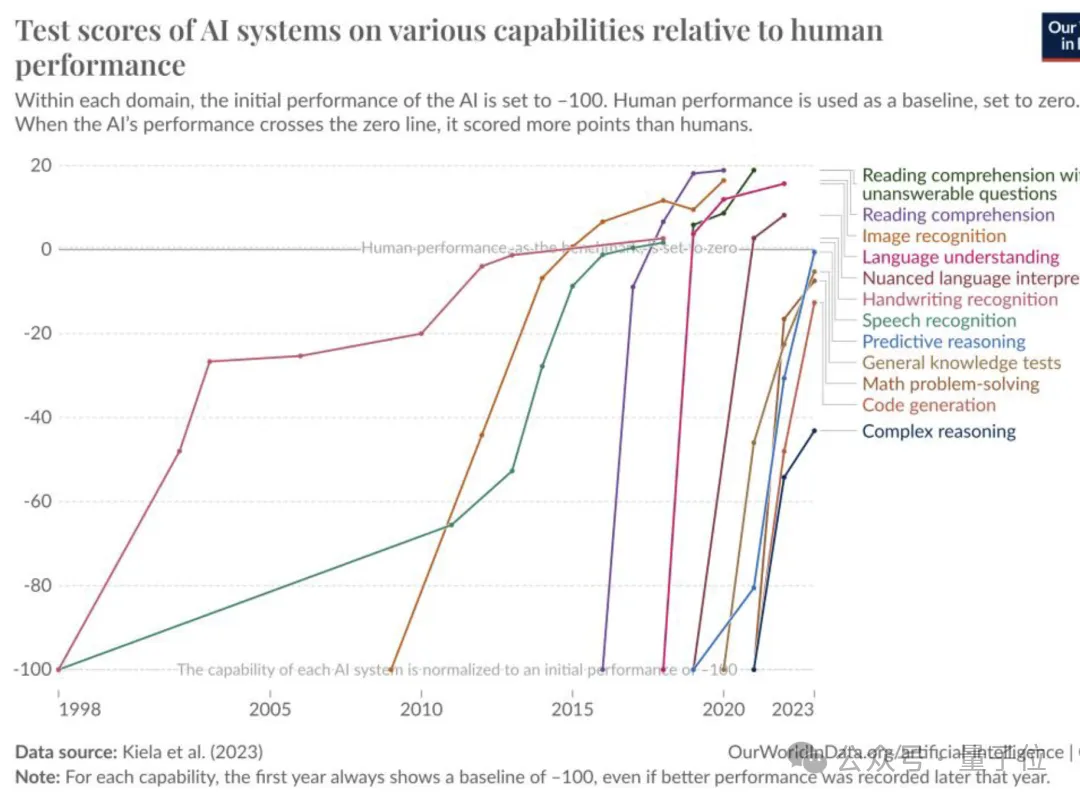

Development has been rapid in recent years. Text-based and image-text multimodal large models have nearly saturated every benchmark.

There is a benchmark called “Humanity’s Last Exam,” designed to stump AI by collecting difficult questions from around the world—problems that have never appeared before and have no answers online.

Top human experts might score only five points on such tests, but current large models can achieve scores of thirty or forty.

It can be anticipated that in the coming years, machines will not be stumped by any exam with standard answers. This reflects the development status of text-based large models.

Code-focused large models are progressing just as rapidly. In this year’s International Collegiate Programming Contest (ICPC), the human first-place winner was outperformed by a large model. Additionally, everyone has witnessed the impressive performance of multimodal large models.

Overall, text, code, and multimodal large models have reached a fairly high level of basic capability, constituting the “basic landscape” of AI discussion today.

In his book Thinking, Fast and Slow, Daniel Kahneman described the famous distinction between System 1 (fast thinking) and System 2 (slow thinking).

After years of development, machines now possess strong capabilities in both System 1 and System 2, laying a very important foundation for AI to move beyond the text world toward embodied intelligence. Specifically, without System 1’s perceptual abilities, machines would be “bewildered” upon entering the real world and unable to do anything.

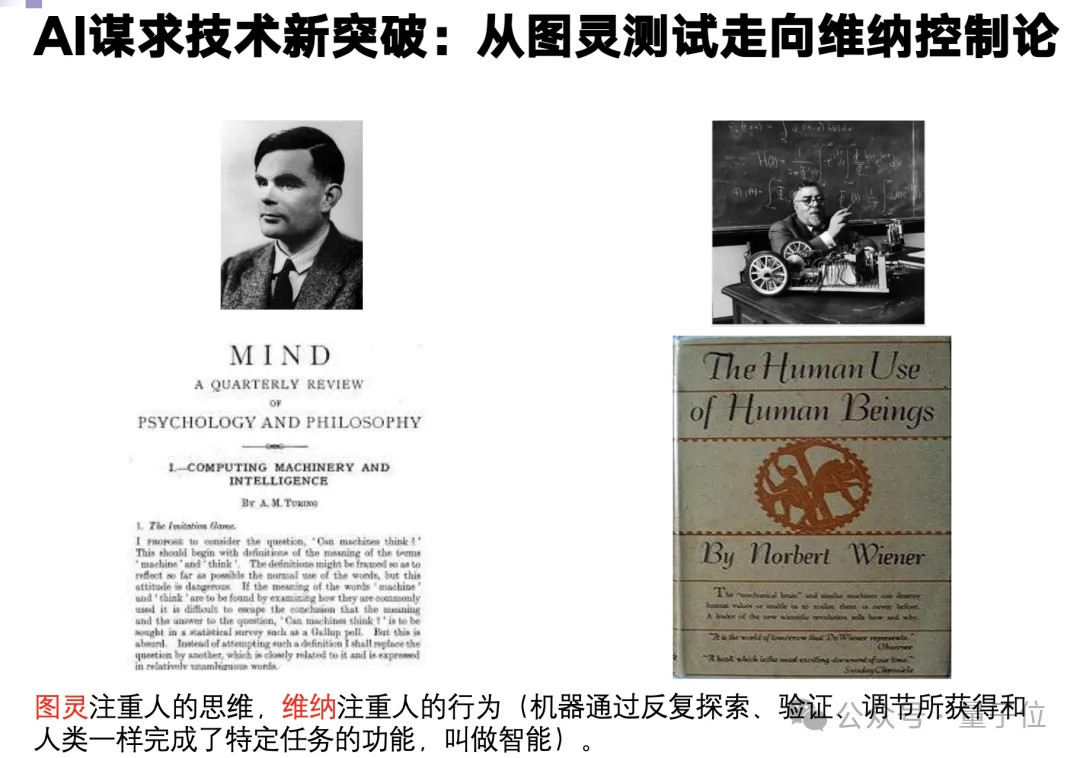

We often mention the Turing Test from 1950. At the linguistic level, it can be said that this test has been passed.

However, during the same period, Norbert Wiener, the father of cybernetics, proposed another equally important viewpoint in Cybernetics:

For a machine to possess intelligence, it must enter the real world. It needs to perceive this world, interact with it, receive rewards or punishments through feedback, and continuously self-adjust and self-learn based on these experiences. Only through this process can true intelligence form.

Today, the conditions exist, at least in part, to put Wiener’s cybernetics into practice, which will elevate AI to a new level.

An old saying goes, “Speech is easy, action is hard,” and the poet Lu You wrote, “What is learned from books is superficial; to truly understand it, one must experience it personally.”

Language models are very good at “speech,” but once they move to “action,” there is a qualitative difference.

There is also an old saying: “Knowledge is difficult, action is easy.”

Although large models are currently very proficient in “speech”—as if all global knowledge has been parameterized and stored within them—their “knowledge” remains incomplete and unsystematic, lacking self-awareness.

Without any “knowledge,” “action” is meaningless.

However, although the “knowledge” of large models is imperfect, they have acquired about 70-80% of it. Therefore, in embodied intelligence, it may be possible to pursue the “unity of knowledge and action.”

Of course, moving from “speech” to “knowledge” is much more difficult. This constitutes today’s greatest challenge for AI: How to properly handle the relationship between “speech,” “action,” and “knowledge,” achieving the “unity of knowledge and action”?

Massive AI Investments Make Wall Street “Sweat Cold”; The Path Ahead Is Full of Challenges

AI development relies on Scaling Laws, involving large models, big data, and massive computing power. In recent years, scaling has been extended across pre-training, post-training, and test time.

But there is a prerequisite: this scaling must be effective.

Any system encounters bottlenecks at a certain stage. Once performance begins to saturate, Scaling Laws may become ineffective; investing more money then might yield diminishing returns or even losses.
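The saturation argument can be made concrete with a toy calculation. The sketch below assumes a hypothetical saturating power law, `loss = a * compute**(-b) + floor`; the functional form and all three constants are illustrative assumptions, not figures from the talk or from any published scaling study. It shows that each doubling of compute buys a smaller improvement than the one before.

```python
def loss(compute, a=10.0, b=0.3, floor=1.0):
    """Toy saturating power law: loss falls with compute but never below `floor`.

    The constants a, b, and floor are hypothetical, chosen only for illustration.
    """
    return a * compute ** (-b) + floor


def gains_per_doubling(start=1.0, doublings=10):
    """Return the loss reduction bought by each successive doubling of compute."""
    gains = []
    compute = start
    for _ in range(doublings):
        gains.append(loss(compute) - loss(2 * compute))
        compute *= 2
    return gains


if __name__ == "__main__":
    for i, gain in enumerate(gains_per_doubling(), start=1):
        print(f"doubling {i:2d}: loss improves by {gain:.4f}")
```

A smooth curve like this cannot represent emergence, which is exactly the kind of abrupt jump that such a model would fail to predict.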

I emphasized earlier that large models may experience emergence. If emergence occurs, the money invested is well spent.

But how far Scaling Laws can go remains a huge question mark. Supporting scaling is extremely expensive: it burns too much capital and consumes too much electricity.

On November 3rd, France’s Les Echos (one of the country’s leading economic dailies) published an article titled: “Huge Investments in AI Make Wall Street ‘Sweat Cold.’”

Wall Street is usually known for sweating from heat; to be described as “sweating cold” indicates that these investments are indeed enormous.

The report cites several figures:

- OpenAI’s current computing capacity is approximately 2 GW;

- It plans to increase this by a factor of 125 by 2033, reaching 250 GW;

- The corresponding investment scale could reach up to $10 trillion, not even including electricity costs.

Consider this: a single nuclear reactor generates less than 1 GW on average, so 250 GW is equivalent to the output of at least 250 reactors. This represents an extremely aggressive level of investment and carries significant risk.
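The arithmetic behind these figures is easy to check. The short sketch below uses only the numbers quoted from the report (2 GW today, 250 GW planned); the helper names are illustrative, and the 0.9 GW per-reactor output in the last line is a hypothetical value used to show what "less than 1 GW" implies.

```python
import math

CURRENT_GW = 2.0    # current capacity cited in the report
TARGET_GW = 250.0   # planned 2033 capacity cited in the report


def scale_factor(current_gw, target_gw):
    """How many times larger the target capacity is than the current one."""
    return target_gw / current_gw


def reactors_needed(target_gw, reactor_gw=1.0):
    """Whole reactors needed to supply target_gw at reactor_gw each."""
    return math.ceil(target_gw / reactor_gw)


if __name__ == "__main__":
    print(scale_factor(CURRENT_GW, TARGET_GW))   # growth multiple
    print(reactors_needed(TARGET_GW))            # at exactly 1 GW per reactor
    print(reactors_needed(TARGET_GW, 0.9))       # hypothetical 0.9 GW per reactor
```

Because the per-reactor output is stated to be below 1 GW, 250 reactors is a lower bound rather than an exact equivalent.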

The dilemma is this: we cannot afford not to keep up, because if emergence occurs we could be left far behind. But keeping up might be financially unsustainable.

Furthermore, consider embodied intelligence.

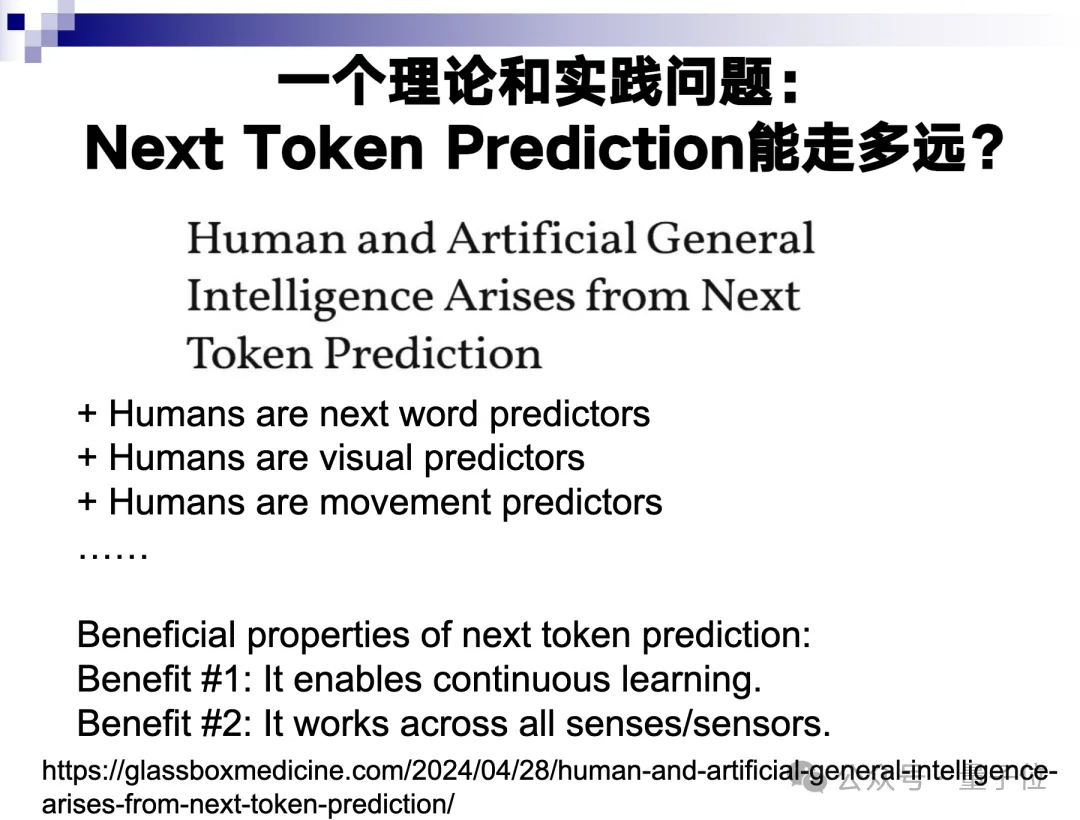

Fei-Fei Li proposed spatial intelligence, which essentially refers to the “action” mentioned earlier. This also faces theoretical and practical challenges: How far can Next Token Prediction go?

Text is generated entirely through Next Token Prediction. Later, various reinforcement learning techniques were added, but they are built upon this foundation. Image generation, including video generation, also relies on this strategy to a large extent.

This strategy is nearly perfect in text, despite some hallucinations; it has reached expert-level proficiency. However, it becomes less effective with images, requiring cooperation with other strategies. Video generation is even more difficult; generating a 10-minute logically coherent video is quite challenging.

When moving to embodied intelligence, the prospects become highly uncertain.

Language succeeded because it is a linear sequence with the characteristic of “discrete infinity.”

For example, the word “apple” primarily has two meanings: the fruit or the specific company. Its semantic reference is clear, word boundaries are distinct, and sentence sequences are linear, making Next Token Prediction very effective.
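To make the mechanism concrete, here is a deliberately tiny sketch of Next Token Prediction. It assumes a bigram count table in place of a neural network and whitespace-separated words in place of subword tokens, so it illustrates only the principle of "predict the next discrete symbol from what came before," not how any production model works. The corpus and function names are invented for the example.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus; tokens are whitespace-separated words.
CORPUS = "the apple is a fruit . the apple is sweet . the company is apple ."


def train_bigram(tokens):
    """Count, for every token, which tokens follow it and how often."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def predict_next(counts, token):
    """Return the most frequent follower of `token`, or None if it was never seen."""
    followers = counts.get(token)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(counts, start, max_new_tokens=5):
    """Greedily extend a sequence one token at a time."""
    out = [start]
    for _ in range(max_new_tokens):
        nxt = predict_next(counts, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return out


if __name__ == "__main__":
    model = train_bigram(CORPUS.split())
    print(generate(model, "the", max_new_tokens=2))
```

The sketch works at all only because text arrives as a linear sequence of clearly delimited symbols, which is the property the talk credits for the success of language models.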

But this does not work for images. An image has no obvious, well-delimited tokens; instead, it has to be cut into “patches.”

For instance, a 3x3 black block could be part of clothing, a corner of a desk, or an icon on a screen. Its semantic reference is highly uncertain. Moreover, it lacks holistic integrity; this black block might consist of a swarm of black ants or just a small portion of a patch on clothing.
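The ambiguity of a patch can be shown with a small sketch. It assumes images are plain 2D grids of integers and cuts them into non-overlapping square patches; the two "images" and their pixel values are hypothetical stand-ins for a shirt and a desk. The same all-black 3x3 patch appears in both, so the patch by itself says nothing about which scene it came from.

```python
BLACK = 0


def make_image(rows, cols, fill):
    """Create a rows x cols grid filled with a single pixel value."""
    return [[fill] * cols for _ in range(rows)]


def paint_block(image, top, left, size=3, value=BLACK):
    """Overwrite a size x size square of the image in place."""
    for r in range(top, top + size):
        for c in range(left, left + size):
            image[r][c] = value


def split_into_patches(image, size):
    """Cut a 2D grid into non-overlapping size x size patches, in row-major order."""
    rows, cols = len(image), len(image[0])
    patches = []
    for r in range(0, rows - size + 1, size):
        for c in range(0, cols - size + 1, size):
            patches.append(
                tuple(tuple(image[r + dr][c:c + size]) for dr in range(size))
            )
    return patches


# Two hypothetical 6x6 scenes with different backgrounds.
shirt = make_image(6, 6, fill=5)
paint_block(shirt, top=0, left=0)   # a black patch on clothing
desk = make_image(6, 6, fill=9)
paint_block(desk, top=3, left=3)    # the dark corner of a desk

shared = set(split_into_patches(shirt, 3)) & set(split_into_patches(desk, 3))

if __name__ == "__main__":
    print(shared)  # the only patch common to both scenes is the all-black one
```

A word such as “apple” narrows the meaning to a couple of candidates, whereas an identical patch of pixels can belong to unrelated objects.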

Moving to video, which adds time as a third dimension on top of the two dimensions of an image, is even more difficult. Embodied intelligence operates in four dimensions: three-dimensional space plus time. The world is vast and ever-changing. Whether Next Token Prediction can handle such complex scenarios is uncertain.

I believe it is impossible for humanoid robots to autonomously complete relatively complex open-ended tasks in the real world within the next five years. For example, building an embodied robot capable of caring for the elderly at home? That is far too difficult.

Turing Award winner Geoffrey Hinton recently said something while discussing AI and unemployment:

If someone suggests you become a plumber, do not easily reject that advice.

This suggestion is reasonable; AI is still very far from possessing the capabilities of a plumber.

What might be feasible?

It would likely involve a simplified task space. For example, the dexterous hands mentioned earlier could handle relatively narrow, simple tasks. While doing this well is not easy, it is entirely possible.

Therefore, embodied intelligence will operate within limited domains and applications, but the room for development there is still ample. We must act according to our capabilities and advance despite difficulties, while knowing when to push forward and when to hold back.

We often speak of building world models, but this task is extremely difficult. There is currently no clear feasible technical path.

In the short term, we can only rely on Next Token Prediction. However, following this path will undoubtedly require order-of-magnitude increases in computing power and data.

Of course, if capability emergence occurs again, robots might gain a higher degree of freedom even in relatively open task spaces.

“Embrace the Broad, Master the Detailed”

The development path appears to be relatively clear.

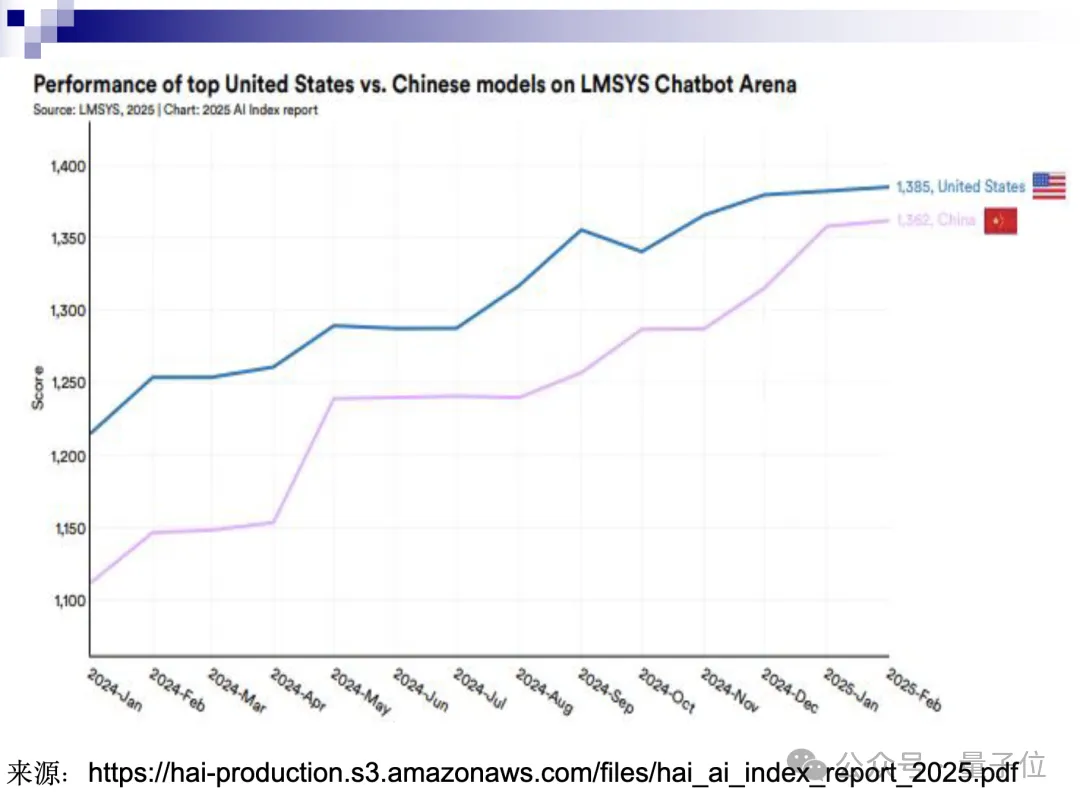

There is no need to elaborate on the United States’ approach. Domestically, highly representative models have emerged, such as DeepSeek and Qwen, both of which are performing exceptionally well. Comparative analyses suggest that the gap between Chinese and American capabilities has narrowed significantly.

There is a classical Chinese saying: “Embrace the broad, master the detailed.”

“Embracing the broad” means thinking big and acting on a large scale. This is currently the typical approach in the United States.

Deploying 100,000 GPUs, 1 million GPUs, or even hundreds of millions in the future requires massive capital investment—so much so that Wall Street finds it challenging to sustain. However, if this path succeeds and triggers an emergent breakthrough at a critical juncture, it could create a generational lead.

For China to follow this “frontal confrontation” approach, only a very few domestic tech giants would have the necessary resources, and even then, the journey would be arduous. Therefore, although the performance gap in large language models between China and the U.S. is currently small, significant uncertainty remains regarding the “embrace the broad” strategy over the next few years.

Against this backdrop, I believe that focusing on vertical AI applications—what we call “mastering the detailed”—is a sound strategic choice for China at present.

Open-source foundational models represented by DeepSeek and Qwen have already established a solid foundation. Building upon these bases to achieve deep integration across various industries is entirely feasible and could lead to global leadership.

However, this approach also presents significant challenges. It is unrealistic to expect that simply applying off-the-shelf large models will yield immediate results. In some cases, vertical domains themselves may even foster new AI algorithms. In this sense, “mastering the detailed” can itself be a form of “embracing the broad.”

Therefore, our path should be as follows:

- A small number of teams with exceptional resources can attempt to continue following international frontiers in the “embrace the broad” direction;

- However, the vast majority of AI companies should focus their primary efforts on “mastering the detailed.”

Vertical application development is highly challenging but carries lower risk. We have the conditions to outperform the United States in this area. Our rich application scenarios, strong industrial base, and the intelligence and diligence of our people all contribute to a competitive advantage in “mastering the detailed.”

As for “embracing the broad,” it involves whether the entire education system can cultivate talent capable of achieving 0-to-1 innovations, including answering Qian Xuesen’s famous question. That is a far more complex issue. It is acceptable to set it aside for now: we should first get “mastering the detailed” right, keeping a close eye on “embracing the broad” all the while, and then pivot back to it.

Many of you here are engaged in work related to “mastering the detailed.” I believe this is excellent and represents what we should be focusing on at present.

These are my personal observations and reflections, which may not be entirely accurate. Thank you all!