After completing the most significant organizational restructuring in its history, OpenAI immediately held a live broadcast.

The company publicly revealed specific timelines for its internal research goals, with the most notable being “achieving fully autonomous AI researchers by March 2028,” down to the month.

The press event was packed with information density. Even Sam Altman stated, “Given the importance of this content, we are sharing our specific research goals, infrastructure plans, and product strategy with unusual transparency.”

Has OpenAI truly become ‘Open’ again after its restructuring?

However, there were some hiccups. Originally, OpenAI invited users to submit questions via a post, but so many people complained about GPT-4o’s mandatory routing mechanism for sensitive conversations that the two speakers hesitated and exchanged awkward glances.

Altman ultimately admitted, “This time we messed up.”

Our goal is to protect vulnerable users while giving adult users more freedom. We have an obligation to protect minor users and adult users who are not in a reasonable mindset.

With the establishment of age verification, we will be better able to strike this balance. This wasn’t our best work, but we will improve it.

2028: Letting AI Conduct Research Itself – OpenAI Provides a Clear Timeline

At the start of the livestream, Altman acknowledged his mistakes.

In the past, we imagined AGI as an “oracle from heaven,” where superintelligence would automatically create beautiful things for humanity.

But now we realize that what truly matters is creating tools that allow people to build their own futures.

This shift in thinking was not accidental. Every technological revolution in human history has stemmed from better tools, from stone tools to steam engines, and from computers to the internet.

OpenAI believes AI will be the next tool to change the course of civilization, and its mission is to make this tool as powerful, user-friendly, and accessible as possible.

Next, Chief Scientist Jakub Pachocki unveiled an internal OpenAI goal and roadmap:

- September 2026: AI Research Intern Level. Capable of significantly accelerating researchers’ work through massive computation.

- March 2028: Fully Automated AI Researchers, capable of independently completing large-scale research projects.

While introducing research progress, he specifically emphasized that OpenAI believes deep learning systems are “possibly less than a decade away” from superintelligence. Here, “superintelligence” refers to systems that are smarter than humans in many key areas.

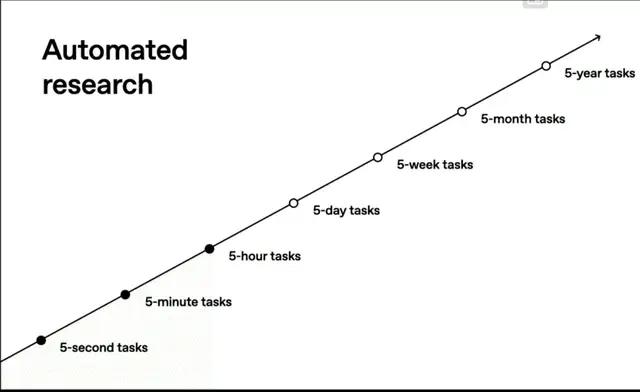

Their quantitative method for measuring AI capability progress is based on the time span required for models to complete tasks. From initial tasks taking just seconds to current tasks taking five hours (such as defeating top competitors in international math and informatics competitions), this time span is rapidly extending.

Consider the thinking time models currently spend on problems, and then consider how much time you would be willing to spend on truly significant scientific breakthroughs. It is acceptable to let models use the computational resources of an entire data center to think; there is huge room for improvement here.

Pachocki also detailed a new technology called “Chain of Thought Faithfulness.”

Simply put, this involves intentionally not supervising the model’s internal reasoning process during training, allowing it to remain faithful to its actual thoughts.

We do not guide the model to think “good thoughts,” but rather keep it faithful to its actual thinking process.

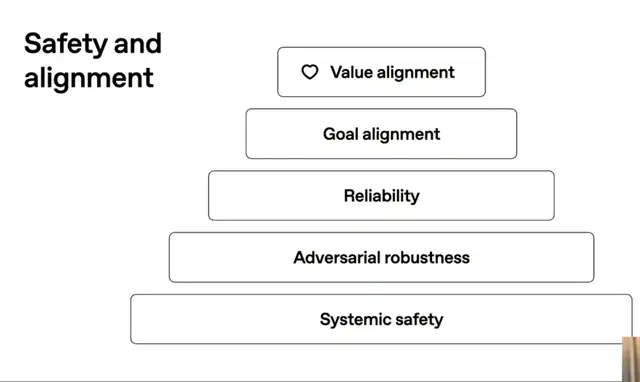

Within OpenAI’s five-layer AI safety architecture, Chain of Thought Faithfulness targets the top layer of value alignment.

What does AI truly care about? Can it adhere to high-level principles? How will it act when facing ambiguous or conflicting goals? Does it lack humanity?

This question is important because:

- When systems engage in long-duration thinking, we cannot provide detailed instructions for every step.

- As AI becomes extremely intelligent, it may face problems that humans cannot fully understand.

- When AI handles problems beyond human capability, providing complete specifications becomes difficult or even impossible.

In these situations, reliance on deeper alignment is necessary. People cannot write rules for every detail; they must rely on the AI’s intrinsic values.

Traditional methods involve viewing and guiding the model’s thinking process during training, which effectively teaches it to say what we want to hear rather than remaining faithful to its true thought process.

Currently, this method is widely used internally at OpenAI to understand how models are trained and how biases evolve. It is also used in external collaborative research; by examining unsupervised chains of thought, potential deceptive behaviors can be detected.

However, ensuring AI values do not conflict with monitoring is only half the success. Ideally, we want AI values to actually assist in monitoring models, which is what OpenAI is currently researching vigorously.

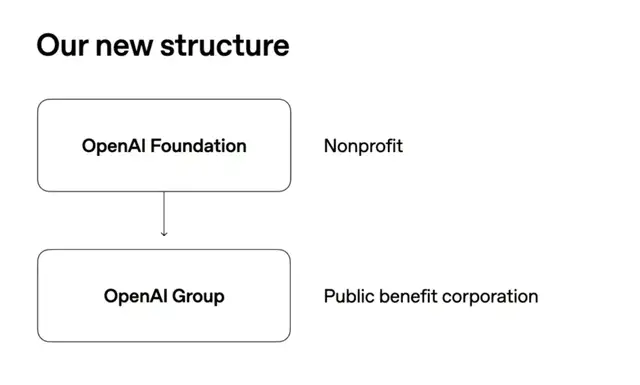

New Architecture Unveiled: Non-Profit Foundation Takes Control

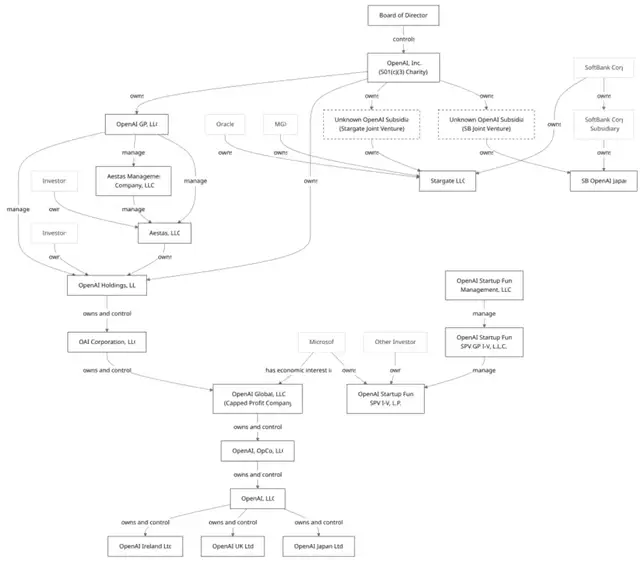

The much-anticipated OpenAI restructuring plan has finally been revealed, surprisingly simpler than the original proposal.

The old architecture included multiple interconnected complex entities:

The new architecture consists of just two layers:

The core is the OpenAI Foundation, a non-profit organization that will have full control over its subsidiary, the Public Benefit Company OpenAI Group.

Initially, the foundation will hold approximately 26% of the equity in the public benefit company. However, if performance is excellent, this percentage can be increased through warrants.

Sam Altman hopes the OpenAI Foundation will become the largest non-profit organization in history. Its first major commitment is investing $25 billion into AI-assisted disease treatment research.

Beyond medical research, the foundation will also focus on a brand-new field: AI Resilience.

OpenAI co-founder Wojciech Zaremba specifically introduced this concept, which has a broader scope than traditional AI safety.

For example, even if OpenAI can prevent its models from being used for dangerous purposes, if others use different models to cause trouble, society still needs rapid response mechanisms when problems occur.

Zaremba believes this is similar to cybersecurity in the early days of the internet. At that time, people were afraid to enter credit card numbers online and had to call each other to warn about viruses and disconnect from the network. Now, with a complete cybersecurity industry chain, people dare to place their most private data and life savings online.

Regarding infrastructure, OpenAI publicly disclosed its investment scale for the first time: committed infrastructure construction totals over 30 GW (gigawatts), with total financial obligations of approximately $1.4 trillion.

Altman also revealed a long-term goal: to build an infrastructure factory capable of creating 1 GW of computing power per week, and hopes to reduce the cost per gigawatt to around $20 billion over a five-year lifecycle.

To achieve this goal, OpenAI is considering investing in robotics technology to help construct data centers.

To help everyone understand this scale, OpenAI highlighted its first StarGate data center under construction in Abilene, Texas. Among multiple sites under development, this one has the fastest progress.

Thousands of people work on this site daily, and the entire supply chain involves hundreds of thousands, even millions of people, ranging from chip design and manufacturing to assembly and energy supply.

The Q&A Session Was Also Engaging

Q1: Technology is becoming addictive. Yet Sora mimics TikTok, and ChatGPT might add ads. Why repeat the same pattern?

Altman: Judge us by our actions. If Sora becomes an addictive scrolling experience rather than a tool for creation, we will discontinue that product. We hope not to repeat past mistakes, but we may make new ones, requiring rapid evolution and tight feedback loops.

Q2: When will large-scale unemployment caused by AI occur?

Pachocki: Many jobs will be automated in the coming years. What jobs will replace them? What kind of new pursuits are worth everyone’s participation?

I believe there will be several aspects: understanding more about the world, incredible amounts of new knowledge, new entertainment, and new intelligence will provide people with considerable meaning and a sense of achievement.

Q3: How far ahead is the internal model compared to the publicly deployed version?

Pachocki has high expectations for next-generation models and anticipates rapid progress in the coming months and year, but did not hide anything extremely radical.

Altman added that they have developed many components; only when combined do they produce impressive results.

Today we simply have many such components. We are not sitting on huge achievements unseen by the world, but we expect a significant leap in AI capabilities within a year.

Q4: How can OpenAI provide so many features for free-tier users?

Jakub first explained this phenomenon from a technical perspective:

When OpenAI develops next-generation models (such as GPT-5), it represents a new frontier of intelligence, i.e., the highest level AI can currently achieve.

Once this frontier is reached, cheaper methods to replicate this capability are quickly found.

Altman supplemented this discussion from a business perspective: Over the past few years, the price per unit of intelligence has decreased by approximately 40 times annually.

This creates an apparent contradiction: why is massive infrastructure still needed? The cheaper AI becomes, the more people want to use it, and ultimately, total costs are expected to increase.

OpenAI commits that as long as its business model remains viable, it will continue to dedicate its best technology to the free tier.

Q5: Is ChatGPT OpenAI’s ultimate product, or a precursor to something greater?

Pachocki explained that as a research lab, they did not initially intend to build a chatbot.

However, they have now recognized the consistency of this product with their overall mission. ChatGPT allows everyone to use powerful AI without programming knowledge or technical backgrounds.

Altman believes the chat interface is a good one but will not be the only one. The way people use these systems will change drastically over time.

For tasks under five minutes, the chat interface performs well, allowing for back-and-forth questioning and gradual refinement until satisfaction is achieved.

But for five-hour tasks, richer interfaces are needed. What about tasks lasting five years or five centuries? This almost exceeds our imagination.

Altman then depicted what he considers the most important direction of evolution: an ambient-aware, always-present companion—a service that observes your life and proactively helps you when needed.

Video Replay:

[https://openai.com/live/?video=1131297184](https://ope