Here it is, here it comes!

The next-generation flagship model, Qwen3-Max, has officially arrived—with a perfect score.

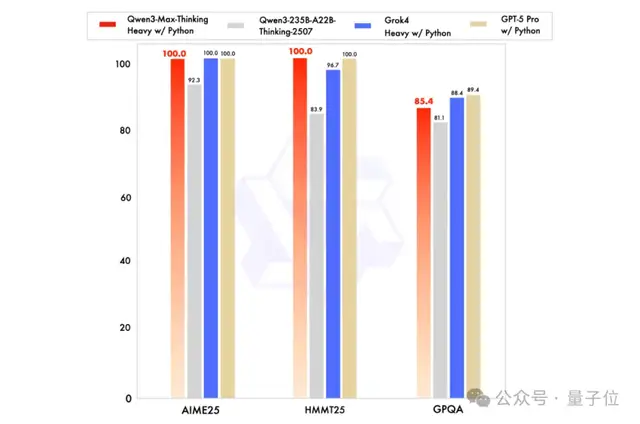

For the first time, a domestic large language model has achieved a 100% score on both the AIME25 and HMMT mathematics evaluation benchmarks!

Consistent with the recently released Qwen3-Max-Preview, the parameter count remains in the trillions.

However, this official release introduces a version split:

- Instruct Version

- Thinking Version

Qwen3-Max also demonstrates performance improvements across both emotional and cognitive intelligence metrics.

The perfect mathematics score mentioned earlier was achieved by the Thinking Version.

Meanwhile, the Instruct Version scored 69.6 on the SWE-Bench evaluation (which tests large models’ ability to solve real-world problems via coding), placing it in the global top tier.

It also scored 74.8 on the Tau2 Bench test (evaluating Agent tool-calling capabilities), surpassing Claude Opus 4 and DeepSeek V3.1.

It is indeed quite powerful.

But to be fair, if Qwen3-Max is the “fire,” then at the recent Apsara Conference, the Tongyi team also unveiled many other “stars.”

Visual: Major Open-Source Release of Qwen3-VL

The first “star” emerging from Qwen3-Max is the visual understanding model, Qwen3-VL.

It was actually open-sourced early this morning, making it fresh off the press, but it has certainly been one of the most anticipated releases.

Specifically, this model is named Qwen3-VL-235B-A22B, and it also comes in both Instruct and Reasoning versions.

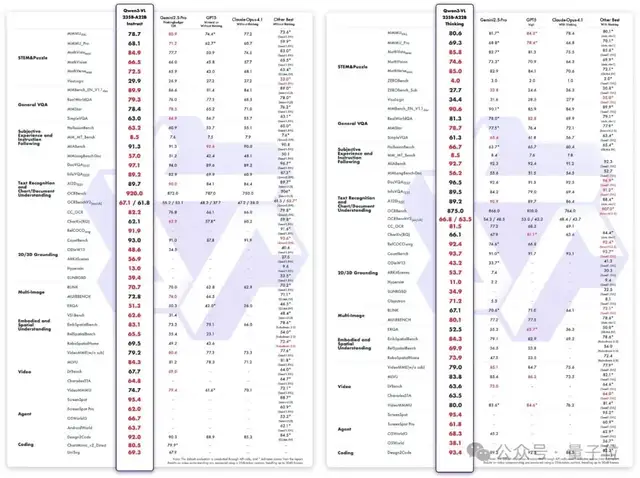

The Instruct version achieves performance that matches or exceeds Gemini 2.5 Pro across multiple mainstream visual perception benchmarks, while the Reasoning version delivers SOTA (State-of-the-Art) results on various multimodal reasoning evaluation baselines.

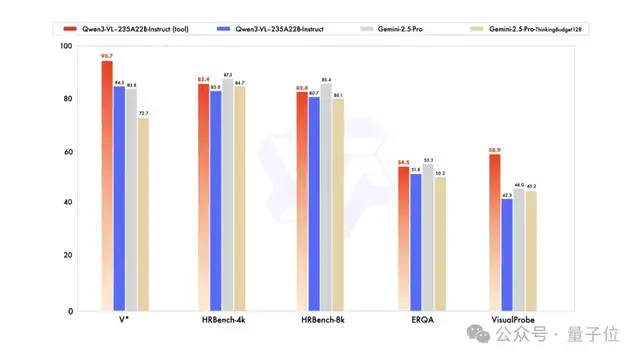

Additionally, the Instruct version of Qwen3-VL-235B-A22B supports visual reasoning (reasoning with images), showing improved scores across four benchmark tests.

Upon seeing these results, netizens exclaimed:

Qwen3-VL is truly a monster (too powerful).

Real-world test results are now available.

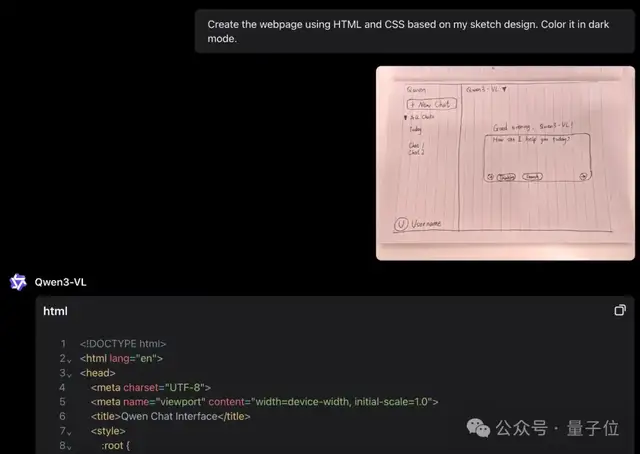

For example, when fed a hand-drawn webpage sketch, Qwen3-VL quickly generates the corresponding HTML and CSS:

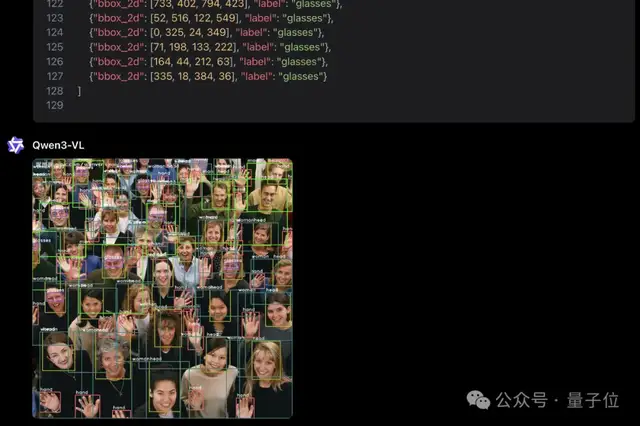

Or consider this image:

With the following task for Qwen3-VL:

Identify all instances belonging to the following categories: “head, hand, male, female, glasses.” Report bounding box coordinates in JSON format.

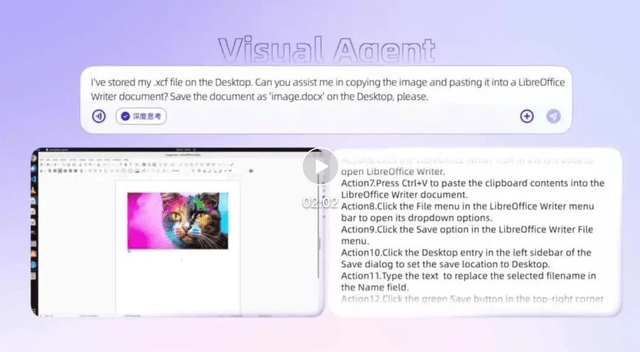

Qwen3-VL also handles more complex video understanding tasks with ease:

You can learn more through the video below:

Video link: https://mp.weixin.qq.com/s/nkNXwpDxxvFVleQ3yB-g5w

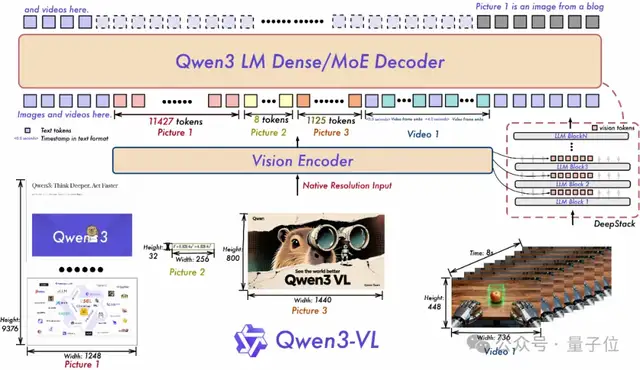

From a technical perspective, Qwen3-VL retains its native dynamic resolution design but features updated structural components.

First, it adopts MRoPE-Interleave. The original MRoPE divided dimensions in the order of time (t), height (h), and width (w), concentrating temporal information in high-frequency dimensions. Qwen3-VL interleaves t, h, and w to achieve full-frequency coverage, enhancing long-video understanding while maintaining image comprehension capabilities.

Second, it introduces DeepStack, which fuses multi-layer features from ViT to enhance visual detail capture and text-image alignment. The team expanded single-layer injection of visual tokens into the LLM to multi-layer injection, optimizing feature tokenization by tokenizing outputs from different ViT layers separately before inputting them into the model. This preserves multi-level visual information from low to high levels. Experiments show this design significantly improves performance across various visual understanding tasks.

Third, video temporal modeling has been upgraded from T-RoPE to a text-timestamp alignment mechanism. By interleaving “timestamps” with “video frames,” it achieves fine-grained alignment between frame-level time and visual content, natively supporting both “seconds” and “HMS” output formats. This improves the model’s semantic perception and temporal accuracy in complex sequential tasks such as event localization, action boundary detection, and cross-modal temporal question answering.

Full-Modal: Open-Source Release of Qwen3-Omni

Although Qwen3-Omni was open-sourced early yesterday morning, it also made its debut at the Apsara Conference, highlighting its full-modal capabilities.

It is the first native end-to-end full-modal AI model, unifying text, images, audio, and video within a single architecture, achieving SOTA levels across 22 audio-video benchmarks.

The currently open-sourced versions include:

- Qwen3-Omni-30B-A3B-Instruct

- Qwen3-Omni-30B-A3B-Thinking

- Qwen3-Omni-30B-A3B-Captioner

Furthermore, several specialized large models derived from Qwen3-Omni have been developed.

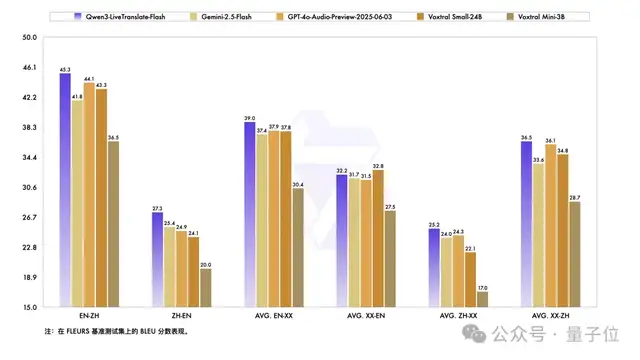

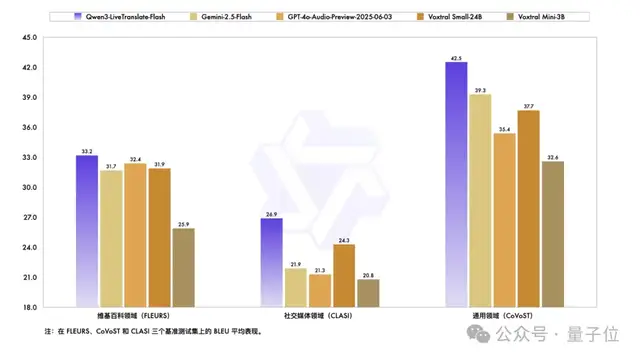

For instance, the newly released Qwen3-LiveTranslate is one such model—a visual, auditory, and vocal full-modal simultaneous interpretation system.

It currently supports offline and real-time audio-video translation across 18 languages.

Public test results show that Qwen3-LiveTranslate-Flash has surpassed models like Gemini-2.5-Flash and GPT-4o-Audio-Preview in accuracy:

Even in noisy environments, Qwen3-LiveTranslate-Flash maintains robust performance:

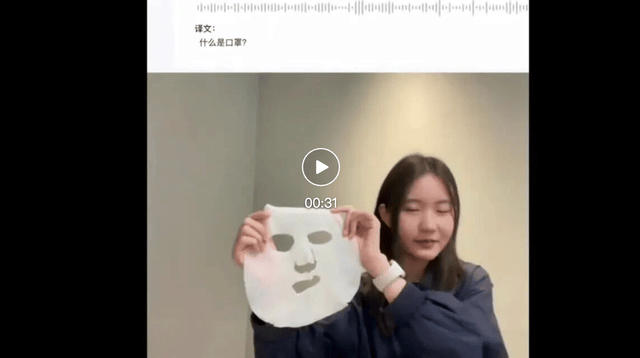

To experience the specific effects, see the practical demonstration below:

Video link: https://mp.weixin.qq.com/s/nkNXwpDxxvFVleQ3yB-g5w

Original English: What is mask? This is mask. This is mask. This is mask. This is Musk.

Before Visual Enhancement: What is a face mask? This is a face mask, this is a face mask, this is a face mask, this is a face mask.

After Visual Enhancement: What is a mask? This is a facial mask, this is a face mask, this is a theatrical mask, this is Musk.

Netizens were visibly stunned:

It’s getting a bit creepy now.

Beyond translation, the new version of Qwen3-Image-Edit—nicknamed “Qwen Banana”—is also a fascinating model.

It supports multi-image fusion, offering various combinations such as “person + person,” “person + product,” and “person + scene.” It has also enhanced single-image consistency for people, products, and text.

Moreover, it natively supports ControlNet, allowing users to change character poses via keypoint maps and easily fulfill outfit-changing requirements.

Coding: Upgrade of Qwen3-Coder

The newly upgraded Qwen3-Coder-Plus employs a “combo” strategy, jointly training with Qwen Code and the Claude Code system.

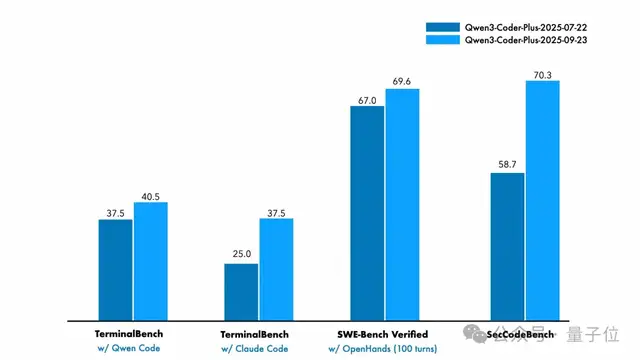

This approach has significantly boosted its performance; compared to previous versions, scores have increased across all benchmark tests:

At the same time, its associated coding product, Qwen Code, has also been upgraded to support multimodal models and sub-agents.

In other words, you can now input images when using Qwen Code:

Netizens have already begun real-world testing. The 3D pagoda generated by Qwen3-Coder-Plus looks like this:

The Endgame for Qwen Is Not Just Open Source

To summarize, the highlights from this Apsara Conference…

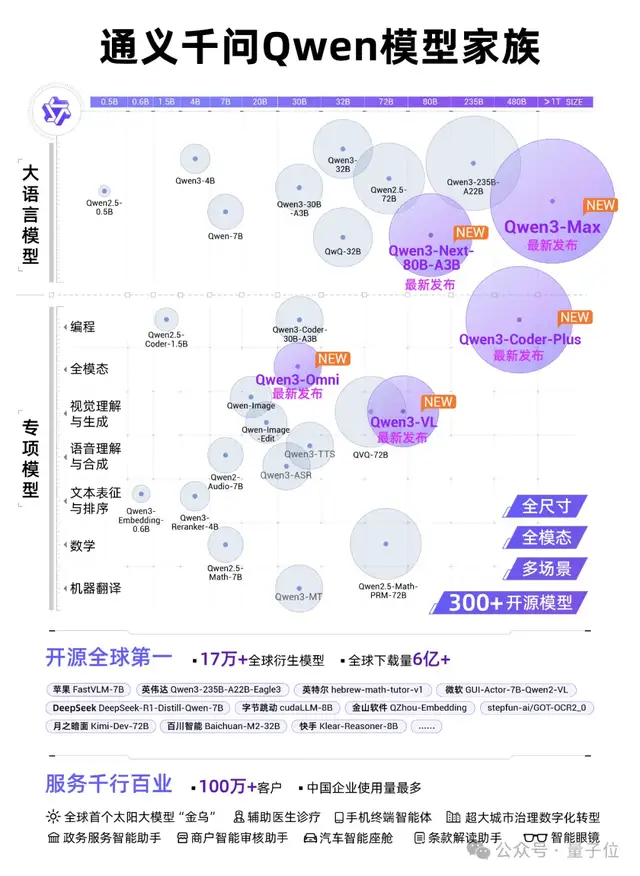

First, from the day before yesterday until now, Alibaba’s Tongyi Qianwen has successively released and open-sourced nearly ten models of varying sizes, leaving industry insiders both domestic and international in awe of Alibaba Cloud’s open-source speed.

That said, after listening to the speech by Wu Yongming, Chairman and CEO of Alibaba Cloud Intelligence Group, we realized that Tongyi Qianwen’s ambitions extend far beyond this.

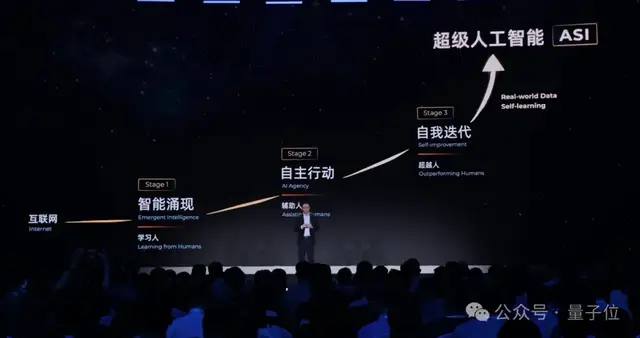

Wu stated that achieving Artificial General Intelligence (AGI) is already a certainty, but this is merely the starting point; the ultimate goal is to develop Artificial Superintelligence (ASI) capable of self-iteration and comprehensive superiority over humans.

To achieve ASI, Wu outlined a path beginning with the internet, progressing through four stages:

The first stage is the emergence of intelligence (learning from humans), followed by autonomous action (assisting humans), then self-iteration (surpassing humans), and finally, Artificial Superintelligence (ASI).

Furthermore, Wu offered a forward-looking perspective:

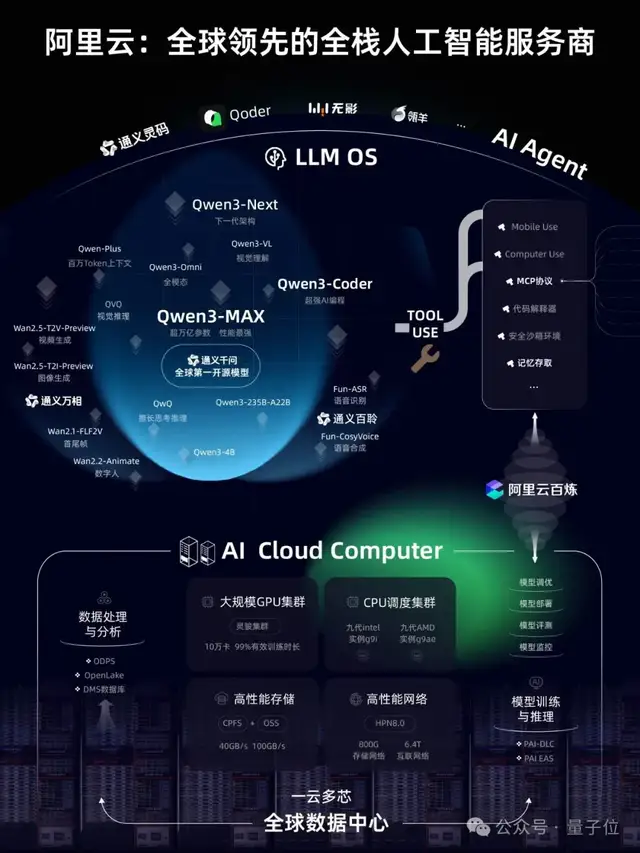

Large models will become the next-generation operating system, natural language will be the source code of the future, and AI Cloud will be the next-generation computer.

In the future, there may only be 5–6 super cloud computing platforms worldwide.

However, one point must be clear: The stronger the AI, the stronger humanity becomes.

One More Thing

Oh, by the way, Tongyi Qianwen’s new generation of foundational model architecture—Qwen3-Next—was officially released today!

Its total parameter count is approximately 80B. However, with only 3B parameters activated, its performance rivals that of Qwen3-235B.

In terms of computational efficiency, it is simply on another level.

Compared to the dense model Qwen3-32B, its training costs have been reduced by over 90%, while long-text inference throughput has increased more than tenfold.

It must be said that the future of large model training and inference efficiency is about to become much more interesting.