Isn’t this amazing?!

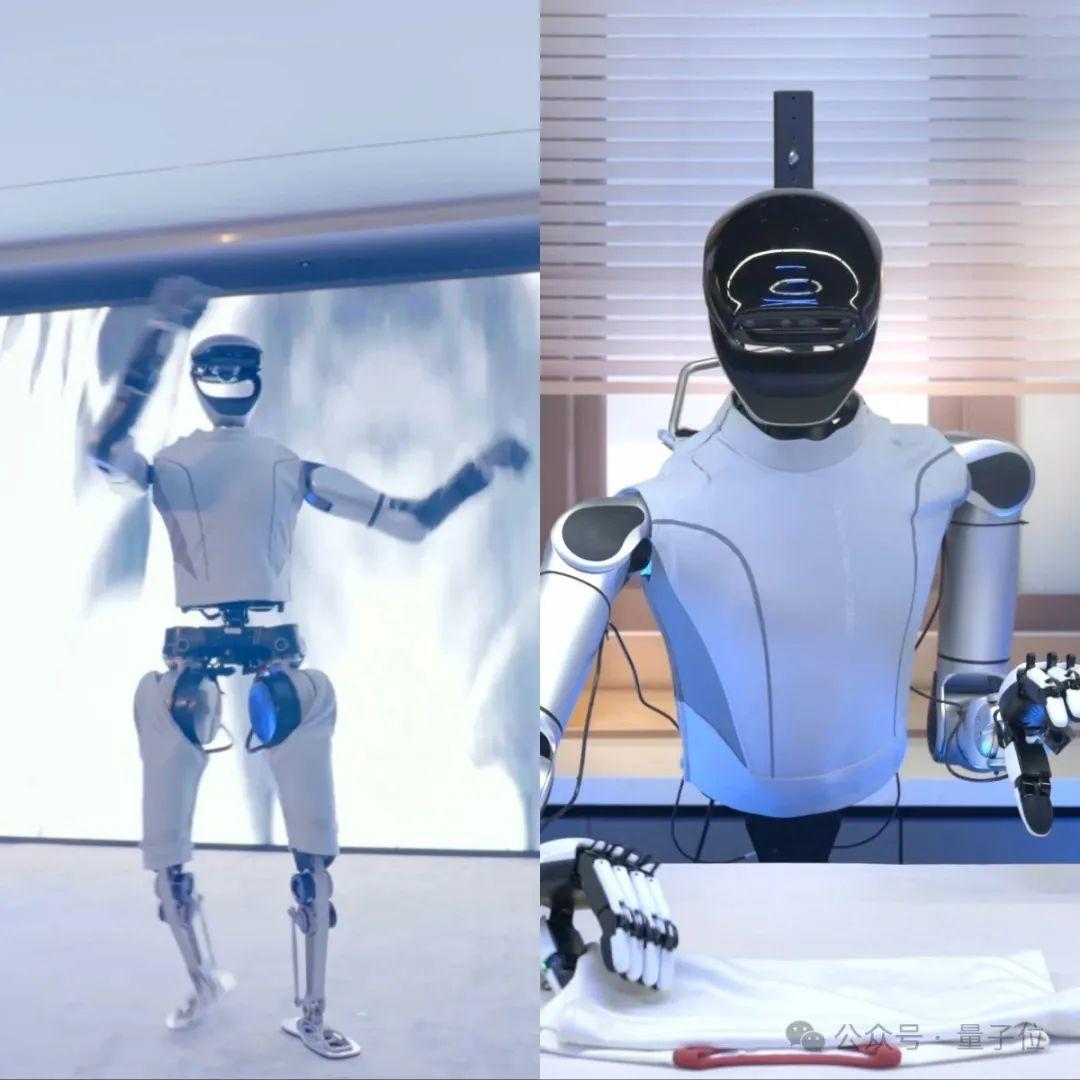

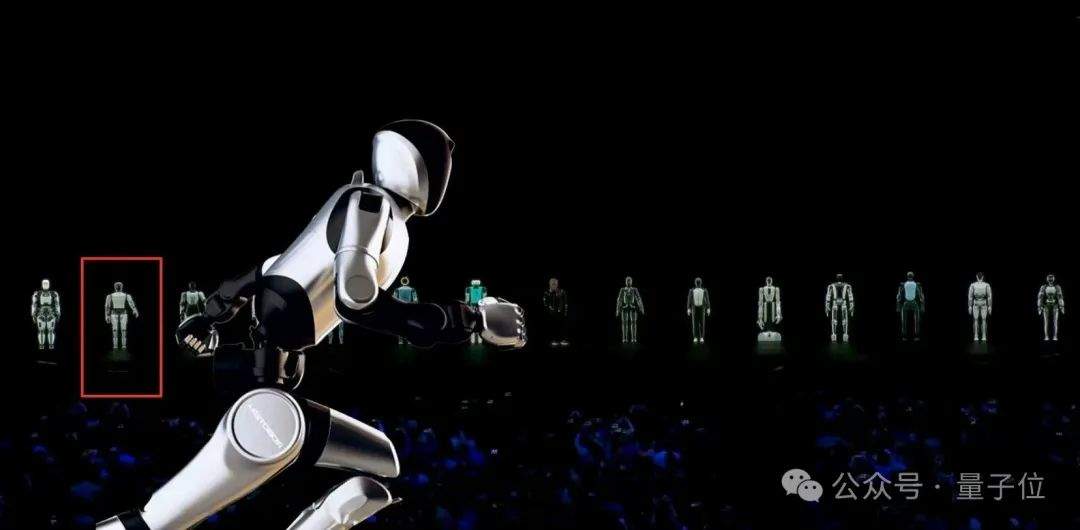

Look closely: this is a robot breakdancing. Its movements stay fluid even in Breaking, a style that demands a great deal of power and coordination.

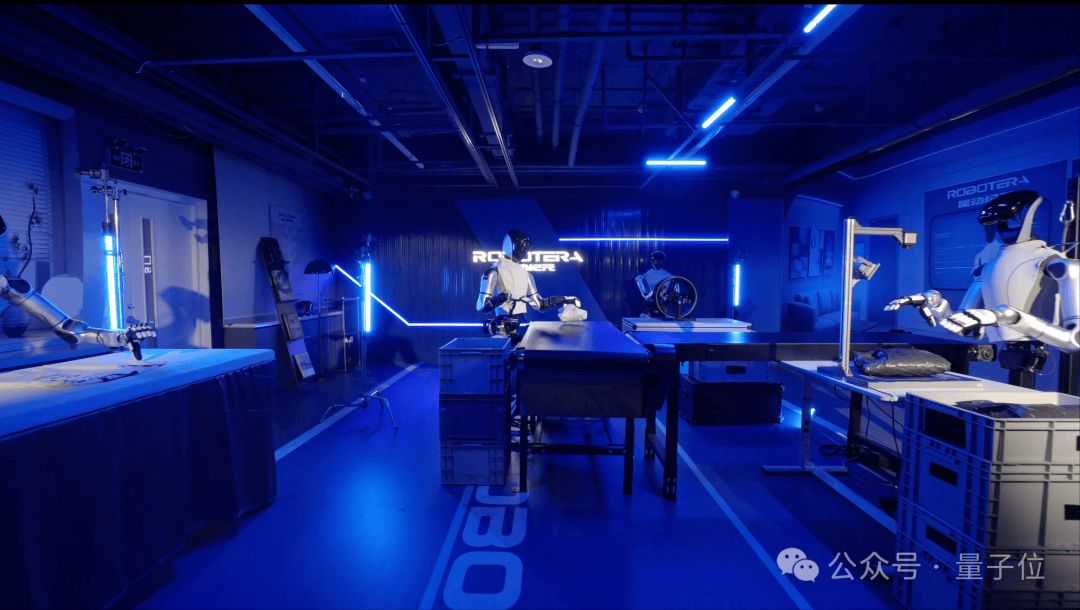

But it’s not just about movement; when it comes to “working,” it performs with impressive precision:

Whether it’s delicate daily tasks like folding clothes, tearing tissues, pulling curtains, or using chopsticks, or industrial duties such as material handling, sorting, and scanning in a factory, the robot executes them flawlessly. It even demonstrates collaborative swarm behavior, reminiscent of science fiction films.

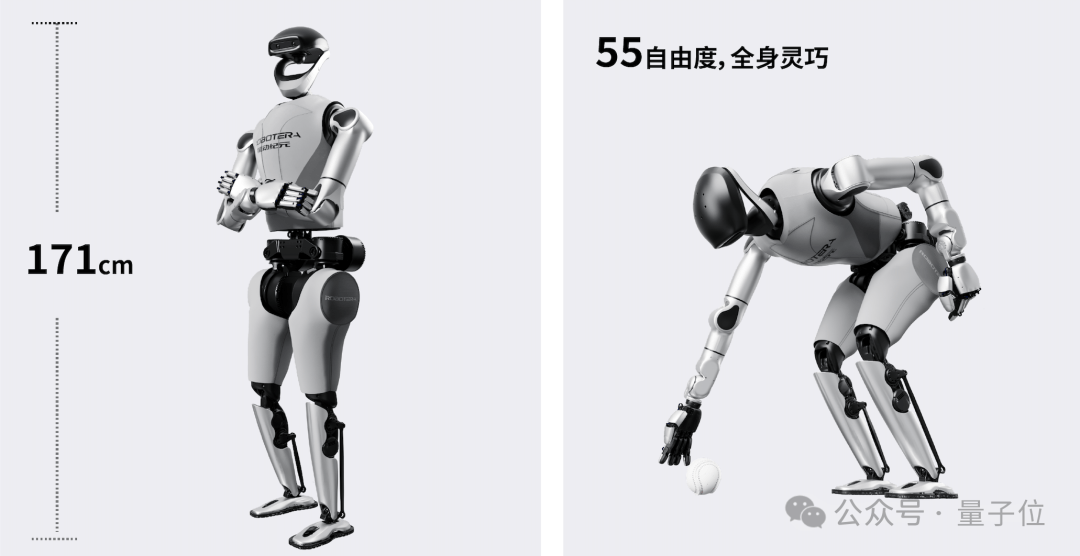

Moreover, this is a large-scale bipedal humanoid robot, standing 171 cm tall and weighing 65 kg. In the field of embodied AI robotics, it sets the standard for full-size models right out of the gate.

It combines robust physical capabilities with delicate dexterity, capable of both dynamic movement and precise stillness.

Behind these feats lies a smart brain.

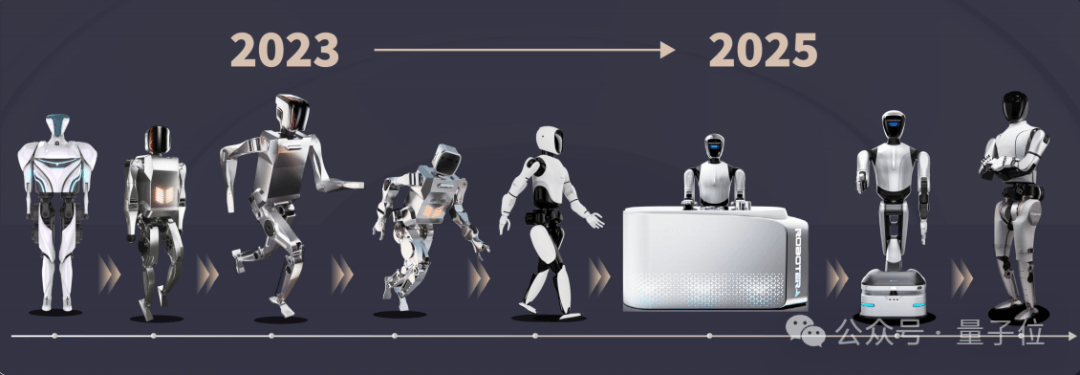

These are the latest capabilities of the Xingdong L7 robot, developed by the star team Xingdong Era, which has strong ties to Tsinghua University’s Institute for Interdisciplinary Information Sciences (IIIS). The team recently sparked industry-wide discussion with nearly 500 million RMB in new funding, and it unveiled these advances just as that momentum was building.

These capabilities have set multiple records:

The first to complete a 360° rotational jump; the first capable of breakdancing; the fastest runner, surpassing human speed; and the first full-size humanoid robot to possess fine manipulation skills.

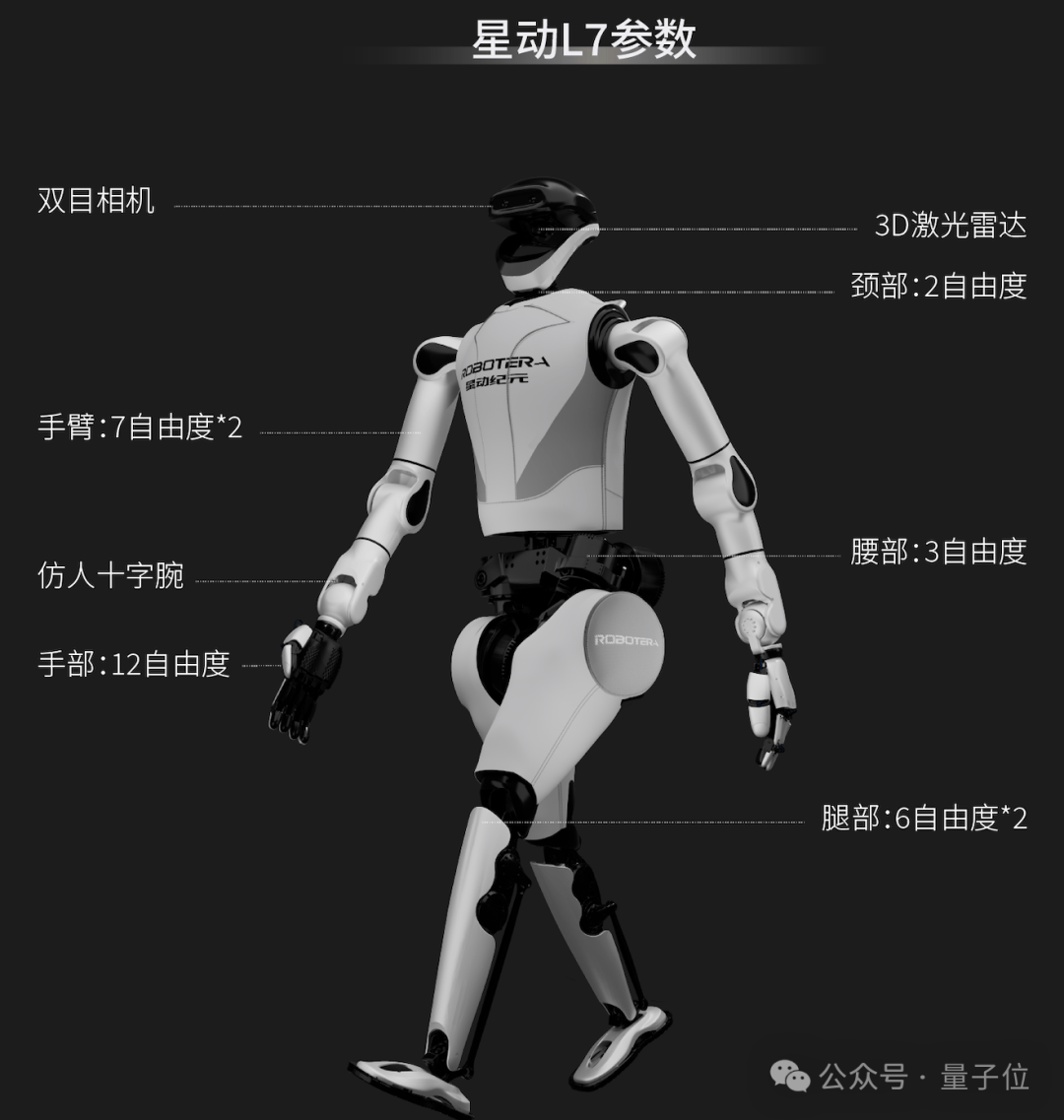

Behind these records is not only an advanced AI brain but also leading-edge motion control technology. Notably, the robot has up to 55 degrees of freedom (DOF) across its body, far exceeding benchmarks like Tesla’s Optimus.

China’s top embodied AI player has indeed set a new bar with this debut.

China’s First! Full-Size Humanoid Robot “Can Entertain and Work”

Specifically, the Xingdong L7 is a full-size humanoid robot, 171 cm tall and weighing 65 kg, with 55 degrees of freedom. Its flexible whole-body movement is driven solely by ERA-42, an end-to-end embodied large model. This integration is the most astonishing aspect of its debut.

Industry experts focus on several “major moves” to evaluate its capabilities.

First is the 360° rotational jump: starting smoothly and landing steadily.

Running speed also breaks industry records, reaching 4 m/s.

Beyond stage performances, it can work in factories, demonstrating both strength and finesse.

For heavy lifting, its arms can bear a load of up to 20 kg (40 jin).

It can also demonstrate delicate control, such as gently tearing off a single sheet of tissue.

Finally, it can enter factories to tighten screws, holding a tool gun and controlling the trigger with its index finger for precision operations:

It even handles tasks previously considered the pinnacle of Silicon Valley robotics, such as folding clothes:

You may have noticed a “bug” in the videos: the robot’s form factor differs from one scenario to the next.

That is because Xingdong Era pioneered selectable full-size and half-body configurations. Users can choose a configuration to suit the scenario, which reduces the overall cost of use and opens up broader markets.

Overall, the Xingdong L7 combines the power and explosiveness of a large frame with the agility of a smaller one. Compared with the company’s previous products, it has slimmer arms and more flexible wrists, which, together with a longer arm span, give it a larger operating range.

In the industry, robots capable of boxing or dancing are common, as are those that can tighten screws in workshops.

However, few achieve such a balance between entertainment (“showing off”) and practical work.

This is why the Xingdong L7 sparked intense industry discussion upon its debut.

Why Is It Worth Attention?

Embodied AI, a new concept naturally derived from technological development, essentially uses models as brains and robots as bodies, achieving intelligent behavior through interaction between the body and the environment.

While the general approach is clear, specific implementation methods vary among developers.

The Xingdong L7 deserves attention not only for breaking numerous industry records but also for the technical solutions behind these achievements, which offer valuable reference points.

Why say this? It has to be judged against the development of embodied AI, working backward from the end goal across three key dimensions:

Dynamic capabilities, task execution ability, and scenario generalization capability.

Dynamic Capabilities: How Are Explosive Movements Achieved?

A robot’s dynamic capability is the foundation of its stability and reliability. Explosive movements such as flips, rotational jumps, and dancing place extreme demands on joint response speed and balance control algorithms, which makes them a key industry benchmark of technical strength.

While there have been previous examples, they either focused on small-scale humanoid robots or suffered from insufficient real-time response and lack of continuity.

What makes the Xingdong L7’s dynamic performance stunning is that, as a large-scale humanoid robot, it overcomes the key challenges of high-dynamic motion, including underpowered drive systems and complex balance control.

For instance, for small-scale robots with lighter weight, joint motors only need to provide tens of N·m of torque to complete rotational movements.

However, consider a large-scale robot weighing 130 jin (65 kg). At the moment of takeoff during a spin, it may experience impact forces equivalent to 2-3 times its own body weight. Slightly insufficient torque leads to failure, and even minor response lag results in loss of balance.
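As a rough worked example of that figure, the sketch below converts "2-3 times body weight" into newtons for a 65 kg robot. It assumes standard gravity of 9.81 m/s², which the article does not state, and the function name is purely illustrative.

```python
# Rough estimate of the takeoff impact force on a 65 kg robot.
G = 9.81  # standard gravity in m/s^2 (assumed; not stated in the article)


def impact_force_newtons(mass_kg: float, body_weight_multiple: float) -> float:
    """Force equal to `body_weight_multiple` times the robot's static weight."""
    return mass_kg * G * body_weight_multiple


mass_kg = 65.0
low = impact_force_newtons(mass_kg, 2.0)   # lower end of the quoted range
high = impact_force_newtons(mass_kg, 3.0)  # upper end of the quoted range
print(f"{low:.0f} N to {high:.0f} N")
```

Under these assumptions the joints and structure must absorb somewhere between roughly 1.3 kN and 1.9 kN at takeoff.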

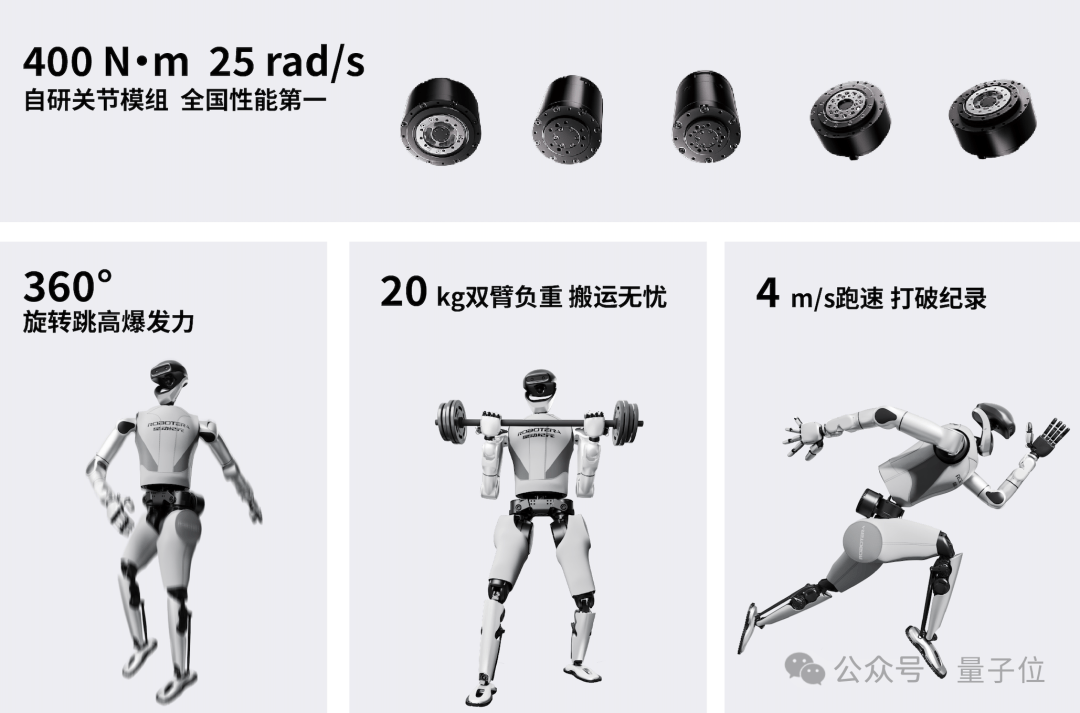

Currently, well-known industry players generally reach a peak joint torque of around 360 N·m and a response speed of about 20 rad/s. The Xingdong L7’s 400 N·m peak torque exceeds that figure and supplies the instantaneous explosive power these actions need, while its 25 rad/s response speed lets the joints adjust posture within 0.1 seconds, preventing loss of balance.
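To put the quoted joint figures in proportion, here is a small back-of-the-envelope calculation. It treats the L7’s response figure as 25 rad/s, in the same unit as the 20 rad/s baseline (an assumption), and reads "response" as joint angular speed, which the article does not define.

```python
# Relative margin of the quoted L7 joint figures over the quoted industry baseline.
def relative_gain(value: float, baseline: float) -> float:
    """Fractional improvement of `value` over `baseline`."""
    return (value - baseline) / baseline


torque_gain = relative_gain(400.0, 360.0)  # peak torque in N·m
speed_gain = relative_gain(25.0, 20.0)     # joint speed in rad/s (unit assumed)

# Angle a joint could sweep in the quoted 0.1 s window at constant peak speed.
sweep_rad = 25.0 * 0.1

print(f"torque +{torque_gain:.1%}, speed +{speed_gain:.1%}, sweep {sweep_rad:.1f} rad")
```

On these numbers the torque margin is about 11% and the speed margin 25%, and a joint moving at peak speed could sweep about 2.5 rad in 0.1 seconds.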

Continuous dancing further tests balance control. When the center of gravity shifts drastically, whole-body joint coordination is required for real-time balance. This is easier for small-scale robots due to their lower center of gravity but significantly more difficult for large-scale ones.

According to reports, Xingdong L7 addresses this through two technological innovations.

First is the ultra-redundant design with up to 55 degrees of freedom. It should be noted that more DOFs mean greater flexibility and the ability to execute complex movements, but also significantly increase control difficulty.

Typically, humanoid robots have between 40 and 50 DOFs. The Xingdong L7 raises this to 55, forming an efficient collaborative network in which advanced algorithms coordinate the joints at millisecond level.

The second innovation is end-to-end reinforcement learning trained on motion data. This method establishes a mapping directly between sensor data and joint actions without relying on manually designed balance rules. It dynamically adjusts the output force and angle of all 55 joints based on real-time movement requirements, optimizing action strategies through continuous environmental interaction to achieve precise real-time balance control.
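The idea of a direct mapping from sensor data to joint actions can be illustrated with a toy policy network. This is only a minimal sketch of the forward pass, not Xingdong Era’s model: the observation size, hidden width, and random weights are hypothetical, and in reinforcement learning the weights would be tuned through interaction with the environment rather than left random.

```python
import math
import random

NUM_JOINTS = 55   # joint count quoted in the article
OBS_DIM = 120     # hypothetical size of the sensor vector
HIDDEN = 64       # hypothetical hidden-layer width

rng = random.Random(0)  # fixed seed so the sketch is repeatable


def init_layer(n_in, n_out):
    """Random weights and zero biases for one fully connected layer."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def dense(x, layer):
    """Fully connected layer followed by a tanh nonlinearity."""
    weights, biases = layer
    return [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(weights, biases)]


layer1 = init_layer(OBS_DIM, HIDDEN)
layer2 = init_layer(HIDDEN, NUM_JOINTS)


def policy(observation):
    """Map one sensor vector directly to 55 joint commands in [-1, 1]."""
    return dense(dense(observation, layer1), layer2)


obs = [rng.gauss(0.0, 1.0) for _ in range(OBS_DIM)]  # stand-in sensor reading
actions = policy(obs)
print(len(actions))
```

No hand-written balance rule appears anywhere in the mapping; whatever balancing behavior emerges would come entirely from how training shapes the weights.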

Additionally, it employs a quasi-direct drive joint scheme combined with a fully self-developed modular skeleton to ensure sufficient lightweighting and impact resistance for long-limb robots.

Task Execution Ability: Esports-Level Hand Speed, Learning Hundreds of Skills via Video

Second is task execution ability: in essence, the ability to work. Without strong operational capabilities, practical application is impossible.

In short, it’s not just about whether the body is flexible enough, but also how smart the brain is.

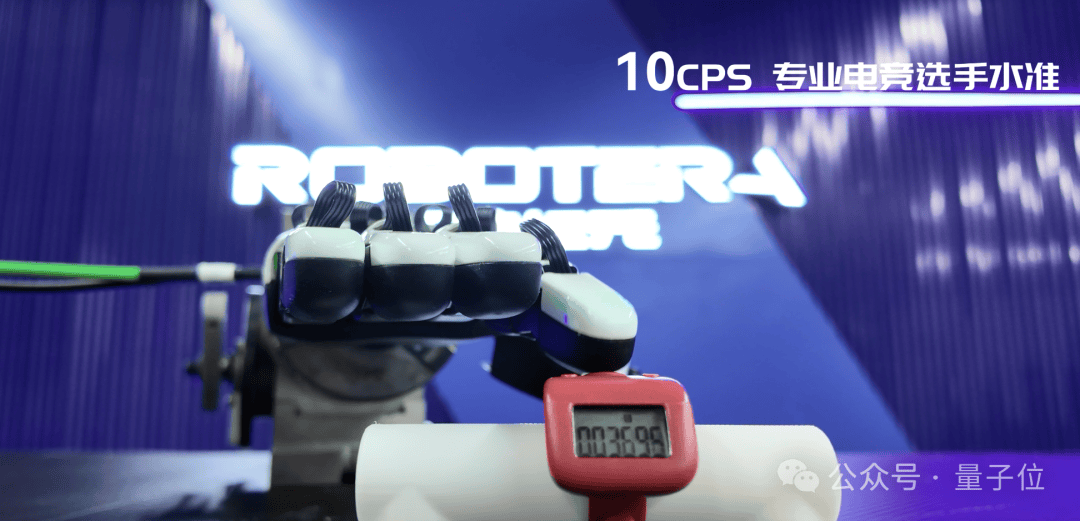

On the body side, hand capability is crucial. The Xingdong L7’s hands have reached 12 degrees of freedom, with each finger independently driven. Compared to Tesla’s Optimus (11 DOFs), the Xingdong L7 can replicate 90% of human hand movements, adapting to more specialized work scenarios.

Each finger achieves a click response rate of 10 times per second, comparable to esports players’ operation speeds. This allows for seamless completion of highly continuous processes like “screw tightening-scanning-labeling” in electronic assembly.

Furthermore, the cross-axis wrist design (side swing ±45°, front-back ±90°) allows flexible posture adjustments when assembling non-standard parts, without frequently rotating the entire body.

On the brain side, AI brains have been trained in various ways in the past. Reinforcement learning in virtual environments, for example, is effective but significantly increases computational costs and requires extensive adaptation for real-world deployment.

Motion capture, in which humans teach high-precision tasks through hands-on demonstration, also has limitations. It relies on professional motion-capture equipment and requires extensive, repetitive demonstrations for each task, a time-consuming and labor-intensive process that struggles to generalize.

Currently, one of the approaches the industry regards as most promising is video-based training. However, only a few companies have truly put it into practice; StarDynamics (the company referred to above as Xingdong Era) is one of them.

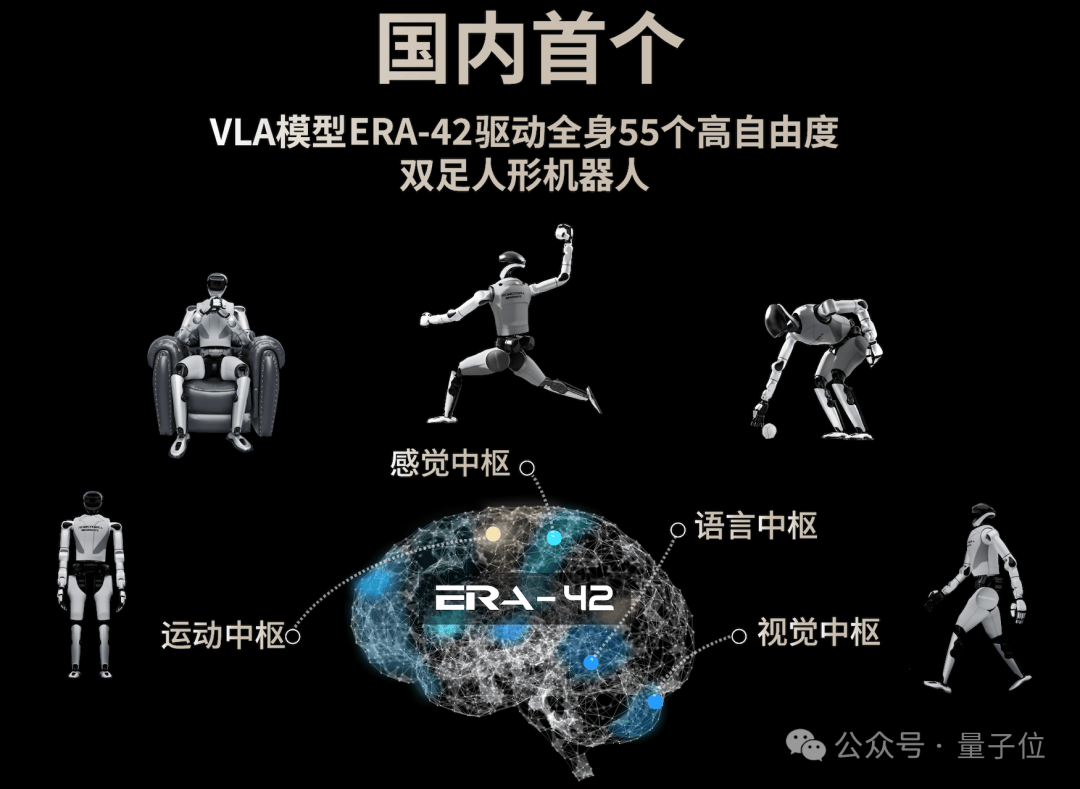

The embodied large model ERA-42 behind the StarDynamics L7 possesses “world model” capabilities that allow robots to watch human operation videos and directly learn skills.

This video-based learning approach is highly scalable: it processes massive amounts of video data to infer the logic behind how actions are executed. In a sense, robots must develop a form of “self-awareness.” Its main advantage is that it drastically reduces data collection costs and enables rapid deployment in new scenarios.

Furthermore, another key factor supporting adaptation to complex scenarios is its “long-sequence task prediction and anti-interference” capability:

- High-frequency real-time anti-interference: Relying on ERA-42’s high-frequency model inference (above 30 Hz), the robot reacts to feedback in real time to counter disturbances;

- Proactive action planning: Utilizing video prediction capabilities, it plans optimal action sequences in advance, ensuring operations are “stable, accurate, and decisive.”
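The two points above, replanning at a high rate and planning a sequence ahead, resemble a generic receding-horizon control loop. The sketch below is a one-dimensional toy version of that general pattern, not ERA-42’s actual algorithm: the planner, horizon length, and disturbance are all hypothetical, and only the 30 Hz rate comes from the article.

```python
CONTROL_HZ = 30              # lower bound on inference rate quoted in the article
PERIOD_S = 1.0 / CONTROL_HZ  # time budget per inference step
HORIZON = 5                  # hypothetical number of steps planned ahead


def plan(position: float, target: float, horizon: int) -> list:
    """Plan equal steps that would reach the target in `horizon` ticks."""
    step = (target - position) / horizon
    return [step] * horizon


def run(target: float, ticks: int, disturbance_at: int, disturbance: float) -> float:
    """Replan every tick from fresh feedback and execute only the first step."""
    position = 0.0
    for tick in range(ticks):
        if tick == disturbance_at:
            position += disturbance               # external push on the system
        actions = plan(position, target, HORIZON)  # plan ahead from current state
        position += actions[0]                    # apply the first planned action
    return position


final = run(target=1.0, ticks=60, disturbance_at=20, disturbance=-0.5)
print(f"period {PERIOD_S * 1000:.1f} ms, final error {abs(1.0 - final):.6f}")
```

At 30 Hz each cycle has roughly 33 ms, and because the plan is rebuilt from the measured state on every tick, the push at tick 20 is absorbed without any special-case handling.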

Coupled with flexible limbs, it feels as though any field or scenario is fully covered.

Scenario Generalization: Full-Size or Half-Body Forms at Will

Finally, and most critically for commercialization, is scenario generalization capability. The ability to both “show off skills” and “get work done” encompasses a wide range of application scenarios.

Beyond the generalization inherent in its brain, its body has also been adapted through modular design.

StarDynamics pioneered an optional solution featuring “full-size and half-body forms,” allowing robots to switch flexibly based on the scenario, further expanding applicable use cases.

For example, in factories, logistics sorting and electronic assembly can utilize the half-body form to reduce space occupation and improve operational efficiency; whereas in scenarios requiring full-body dynamic expression, such as shopping malls and exhibitions, it can switch to the full-size form.
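The selection logic described here can be written down as a small configuration sketch. The type names and the single "needs whole-body motion" criterion are hypothetical simplifications for illustration; the article does not describe how the choice is actually made.

```python
from dataclasses import dataclass
from enum import Enum


class FormFactor(Enum):
    FULL_SIZE = "full-size"
    HALF_BODY = "half-body"


@dataclass(frozen=True)
class Scenario:
    name: str
    needs_whole_body_motion: bool  # hypothetical selection criterion


def choose_form(scenario: Scenario) -> FormFactor:
    """Pick the half-body form unless the scenario needs whole-body motion."""
    if scenario.needs_whole_body_motion:
        return FormFactor.FULL_SIZE
    return FormFactor.HALF_BODY


# The four example scenarios named in the article.
scenarios = [
    Scenario("logistics sorting", False),
    Scenario("electronic assembly", False),
    Scenario("shopping mall", True),
    Scenario("exhibition", True),
]

for scenario in scenarios:
    print(f"{scenario.name}: {choose_form(scenario).value}")
```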

In other words, what you see in a factory and what you see in a mall may look different, but they might both originate from the same robot.

The StarDynamics L7 has greatly expanded the application boundaries of humanoid robots. Its flexible limbs and intelligent brain are outlining the prototype of a truly general-purpose humanoid robot.

What supports the continuous evolution of this capability and the expansion of its boundaries is StarDynamics’ pioneering implementation of a closed-loop flywheel of “model-body-scenario data” in the industry.

The question remains: Why must it be StarDynamics?

The First Player to Get the Physical AI Closed-Loop Flywheel Running

If you understand the founding background of StarDynamics and the industry recognition it has received, you might be surprised.

StarDynamics was founded in August 2023 and is the only embodied intelligence company in which Tsinghua University holds a stake. Its founder and CEO, Chen Jianyu, holds a bachelor’s degree from Tsinghua University and a Ph.D. from the University of California, Berkeley. He currently serves as an Assistant Professor and PhD Supervisor at the Institute for Interdisciplinary Information Sciences (IIIS) at Tsinghua University, with over 10 years of experience in robotics and AI R&D.

A hardcore team deeply engaged in underlying technology R&D has attracted capital investment. In less than two years since its establishment, StarDynamics completed multiple rounds of financing, with investors including Lenovo Capital, Alibaba, and Haier Capital.

Ample funding continues to support StarDynamics’ technological R&D. Its products have been successfully deployed in scientific research and commercial scenarios, with significant commercial results.

StarDynamics has delivered over 200 units this year, with another hundred orders currently being fulfilled. Among the top 10 tech giants by market capitalization globally, nine are already StarDynamics’ customers. In terms of scientific research, StarDynamics products have become the first choice in the global developer market, utilized by top talent from ByteDance’s Robotics Lab, MIT, Stanford University, and UC Berkeley.

Thus, StarDynamics is well known not only domestically but also internationally. Its overseas business has grown rapidly: within just half a year of starting its overseas push at the beginning of the year, overseas markets accounted for more than 50% of revenue. At this year’s CES, Jensen Huang showcased 14 humanoid robots, including StarDynamics STAR 1, the predecessor to the StarDynamics L7.

Supporting scientific research, landing commercial applications, and going global have given StarDynamics rich scenario data, which has become one of its first-mover advantages.

Why did this innovative company quickly break out? Ultimately, it stems from StarDynamics’ technical strength. From its inception, StarDynamics decided to take a difficult but correct path—combining software and hardware to create general-purpose robots for the physical world.

General-purpose robots encompass two levels: a general brain and a general body.

StarDynamics’ latest achievement in the general brain is the end-to-end native robot large model ERA-42. This marks the industry’s first instance of driving full-body dexterity through a single embodied large model—not only capable of large movements but also achieving human-like hand operation capabilities with five-finger dexterous hands, allowing flexible use of various tools to complete hundreds of complex tasks.

ERA-42 has three main highlights. First is architectural innovation, which reduces data costs and dependence on real-machine data.

Compared with Helix, the VLA model from Figure AI, ERA-42 holds a significant advantage. Helix learned fewer than 100 tasks from 500 hours of teleoperation data, whereas StarDynamics’ ERA-42 can learn hundreds of tasks from only about 10 hours of real-machine data, placing it among the industry leaders.
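The gap in data efficiency can be made concrete with a rough calculation. The article gives only "fewer than 100 tasks" and "hundreds of tasks", so the sketch below takes 100 as an upper bound for Helix and reads "hundreds" as at least 200 for ERA-42; both readings are assumptions made for illustration.

```python
def tasks_per_hour(tasks: float, hours: float) -> float:
    """Tasks learned per hour of robot data."""
    return tasks / hours


# Helix: "fewer than 100 tasks" from 500 hours, so this is an upper bound.
helix_upper = tasks_per_hour(100, 500)

# ERA-42: "hundreds of tasks" from about 10 hours; 200 is an assumed lower bound.
era42_lower = tasks_per_hour(200, 10)

ratio = era42_lower / helix_upper
print(f"Helix <= {helix_upper:.1f}/h, ERA-42 >= {era42_lower:.0f}/h, ratio >= {ratio:.0f}x")
```

Even on this conservative reading, the quoted figures imply a difference of about two orders of magnitude in tasks learned per hour of real-robot data.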

The secret behind this lies in StarDynamics’ adherence to first principles. When training its humanoid robots, it does not simply mimic behaviors but learns the causal relationships behind them—i.e., what results from performing a specific action. This allows for an understanding of unified physical laws, achieving complete generalization.

Second is cross-task learning. Because ERA-42 has strong generalization capabilities, it can execute new operational tasks through a single policy. By inputting just text or voice commands along with perception hardware data, it directly outputs operations end-to-end, generalizing to unseen environments or tasks.

For instance, StarDynamics previously collected simple red, yellow, and blue block grasping data, which allowed the robot to generalize grasping tasks to diverse objects never seen before, such as carrots and eggplants, significantly improving success rates in generalization tasks compared to other model algorithms.

The third is cross-body transfer. A single VLA large model spans single-arm, dual-arm, and upper-body configurations. This means the L7’s customizable form factors rest not only on hardware advantages but also on the model’s generalization capability.

This also reflects StarDynamics’ hardware capabilities, which are generalized and modular, much like building a general body out of Lego blocks. The company has developed five joint modules in-house, including frameless torque motors, high-performance coreless motors, high-power drivers and control units, and low-reduction-ratio reducers. Because it controls these key technologies itself, the body can be updated rapidly, going through eight generations in two years.

After achieving general models and general bodies, these two core components face a common problem: the data bottleneck. The lack of high-quality data has long plagued the industry; obtaining real-machine data is costly, while choosing simulation is difficult, and data types tend to be monotonous.

StarDynamics’ approach is to combine massive amounts of unlabeled internet video data with a small amount of proprietary real-machine data, aiming to extract as much information as possible and transfer it to the robot without loss, so that robots can understand the physical world more efficiently and at lower cost.
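One simple way to combine a large video corpus with a small robot dataset is to mix the two sources at a fixed ratio when drawing training batches. The sketch below shows that generic technique only; the article does not say how StarDynamics actually combines its data, and the dataset sizes, the 10% robot fraction, and all names here are hypothetical.

```python
import random


def sample_batch(video_clips, robot_episodes, batch_size, robot_fraction, rng):
    """Draw a training batch that mixes the two sources at a fixed ratio."""
    batch = []
    for _ in range(batch_size):
        if rng.random() < robot_fraction:
            batch.append(("robot", rng.choice(robot_episodes)))
        else:
            batch.append(("video", rng.choice(video_clips)))
    return batch


# Placeholder identifiers standing in for real data.
video_clips = [f"video_{i}" for i in range(10_000)]   # large unlabeled corpus
robot_episodes = [f"episode_{i}" for i in range(50)]  # small real-machine set

rng = random.Random(0)  # fixed seed so the sketch is repeatable
batch = sample_batch(video_clips, robot_episodes, batch_size=256,
                     robot_fraction=0.1, rng=rng)
robot_count = sum(1 for source, _ in batch if source == "robot")
print(f"{robot_count} robot samples, {len(batch) - robot_count} video samples")
```

Oversampling the small robot set this way keeps it visible in every batch even though it is a tiny share of the total data.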

Low-cost acquisition of scenario data strengthens the model’s brain, which in turn drives the hardcore body. In summary, StarDynamics has pioneered the “closed-loop flywheel of the Physical AI era” in the embodied intelligence industry: “model-body-scenario data,” much as the AI model era was built on connecting the three elements of “compute, algorithms, and data.”

Such players are not without precedent in other tracks. In the previous wave of physical AI, for example, the hottest startup track was Robotaxi: Tesla developed the FSD model in-house, used its Model series vehicles as the body to gather massive scenario data for FSD, and has now become a superstar in the Robotaxi sector.

Now, embodied intelligence is replacing Robotaxi to become the most attractive and cutting-edge track of physical AI. StarDynamics has pioneered the closed-loop flywheel of Physical AI, becoming a new star in the field of embodied intelligence, representing China’s most advanced level and even the global forefront.

Indeed, each generation produces its own talents.