Market Cap Plummets by $75 Billion in Just Six Minutes Following Apple’s Event!

What caused investors to collectively lose faith in Apple’s latest product launch?

Ahem, the “culprit” is, once again, Siri.

Ahead of this year’s WWDC, users and investors had high hopes for Siri updates. However, shortly after the keynote began, Craig Federighi, Apple’s Senior Vice President of Software Engineering, awkwardly announced that these updates might be delayed until next year.

Almost immediately following this announcement, Apple’s stock price dropped by more than 2.5%, falling from approximately $206 to below $201, representing a market capitalization loss of $75 billion (approximately RMB 538.58 billion).
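As a rough sanity check, the quoted figures can be tested against one another. The sketch below uses only the rounded numbers from the paragraph above. The implied share count and the implied exchange rate are derived values, not reported ones, and the variable names are illustrative.

```python
# Rounded figures quoted in the article (all approximate).
price_before = 206.0     # USD, approximate price before the announcement
price_after = 201.0      # USD, the article says the price fell *below* this
cap_loss_usd = 75e9      # USD, reported market-cap loss
cap_loss_rmb = 538.58e9  # RMB, reported equivalent

# Percentage drop if the price had stopped at exactly $201.
pct_drop = (price_before - price_after) / price_before * 100

# Share count implied by a $75 billion loss on a $5 per-share decline (derived).
implied_shares = cap_loss_usd / (price_before - price_after)

# Exchange rate implied by the two market-cap figures (derived).
implied_fx = cap_loss_rmb / cap_loss_usd

print(f"Drop at exactly $201: {pct_drop:.2f}%")
print(f"Implied shares outstanding: {implied_shares / 1e9:.1f} billion")
print(f"Implied exchange rate: {implied_fx:.2f} RMB per USD")
```

A fall to exactly $201 works out to about 2.43%, so the “more than 2.5%” figure is consistent only if the price dipped somewhat below $201, as the article states. The $75 billion loss on a roughly $5 decline implies about 15 billion shares outstanding.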

In fact, the main highlights of this year’s Apple keynote can be summarized in three aspects:

- A new “Liquid Glass” design language, touted as the “largest design update to date”;

- Regarding AI, beyond opening up its on-device models, Apple focused more on integrating third-party models and introducing a series of developer tools;

- Feature updates across all operating systems, including iOS and macOS, signaling a return to focusing on user experience.

On AI alone, netizens heavily criticized Apple’s moves as “too slow.”

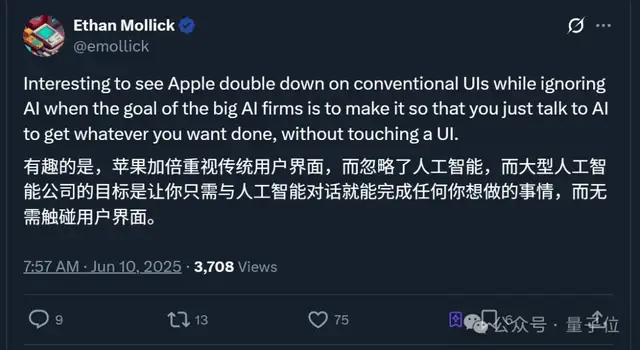

Furthermore, Professor Ethan Mollick of the Wharton School observed that Apple is heading in the opposite direction from other major tech companies:

Apple is doubling down on traditional user interfaces while neglecting AI.

So, what exactly were the AI updates announced at this year’s WWDC?

Built-in ChatGPT Model

First, developers now have more model options to choose from.

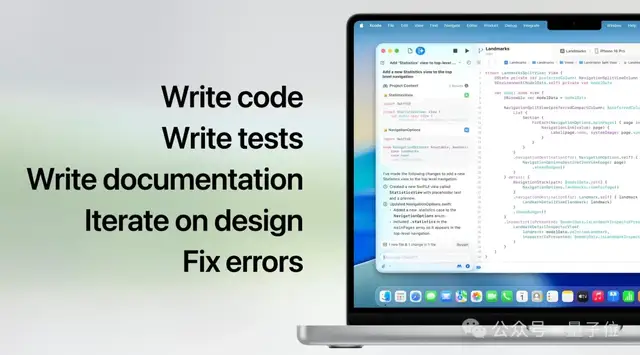

This time, Apple released a new version of its development environment, Xcode 26, which has OpenAI’s ChatGPT built in.

With Xcode 26, developers can connect AI models directly to their coding workflows to write code, tests, and documentation, iterate on designs, fix bugs, and more.

This means developers can use ChatGPT within Xcode without creating an account, while some paid ChatGPT users can link their accounts to increase usage limits.

Second, developers can integrate more of Apple’s AI capabilities into their own applications.

In addition to introducing third-party models, Apple launched the Foundation Models framework, allowing developers to easily utilize Apple Intelligence.

According to official statements, the framework natively supports Swift; developers need only write three lines of code to access Apple’s on-device models. The framework also includes various generative AI capabilities and supports tool calling.

This means integrating generative capabilities directly into existing applications will become significantly easier.

The framework is currently available for testing via the Apple Developer Program (developer.apple.com), with a public beta version set to launch next month through the Apple Beta Software Program (beta.apple.com).

Additionally, Apple rolled out AI feature updates across its entire suite of operating systems.

These include but are not limited to:

- System-level real-time translation across apps;

- Visual intelligence capabilities enabling system-wide cross-app AI visual search;

- macOS integration with iPhone mirroring for checking orders and answering calls, along with AI-customized shortcuts;

- ...

Notable among these is the Visual Intelligence update. Similar to common photo-search functions but enhanced with AI, it lets users take a screenshot within an app, identify specific objects in it, and run an AI search on them.

It can also automatically detect task events on the screen and suggest adding them to the calendar; upon user approval, it will automatically extract details such as date, time, and location.

So far, however, users and investors seem unconvinced by these updates.

Not long after the keynote ended, some people were already “nostalgic” for previous events:

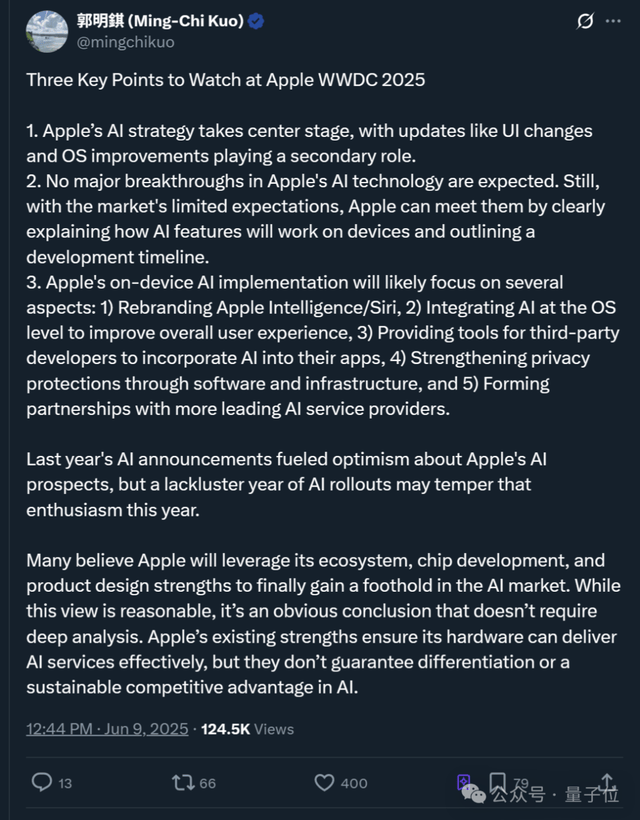

Renowned analyst Ming-Chi Kuo also summarized the event, with key points as follows:

- Apple’s AI strategy takes center stage, while UI changes and OS improvements play secondary roles.

- Apple’s AI technology is not expected to achieve major breakthroughs. Nevertheless, because market expectations are low, Apple can meet them by clearly explaining how AI features operate on devices and providing development timelines.

- Potential actions regarding on-device AI may include: integrating AI at the OS level to improve overall user experience; providing tools for third-party developers to incorporate AI into their apps; and establishing partnerships with more leading AI service providers.

In short, having fallen behind competitors in AI, Apple currently finds itself “willing but unable” to go it alone, which is prompting it to consider collaborations with third parties like OpenAI.

One More Thing

Interestingly, some netizens used “remixed” versions of the keynote to subtly mock Apple’s slow progress.

According to these users, it took them less than two minutes to seamlessly replace the dialogue in the video using AI.

The original segment introduced emoji blending features, but the modified voiceover instead says: “When you are the last major tech company doing things related to AI.”

So, what are your thoughts on Apple’s latest keynote?