Submitted by the BrowseComp-ZH Team

Do you think large language models can easily “surf the web”?

The new benchmark dataset BrowseComp-ZH directly challenges mainstream AI.

BrowseComp-ZH is a newly released benchmark jointly developed by institutions including SUSTech (Guangzhou), Peking University, Zhejiang University, Alibaba, ByteDance, NIO, and others. It has caused over 20 major domestic and international large language models to collectively “fail”:

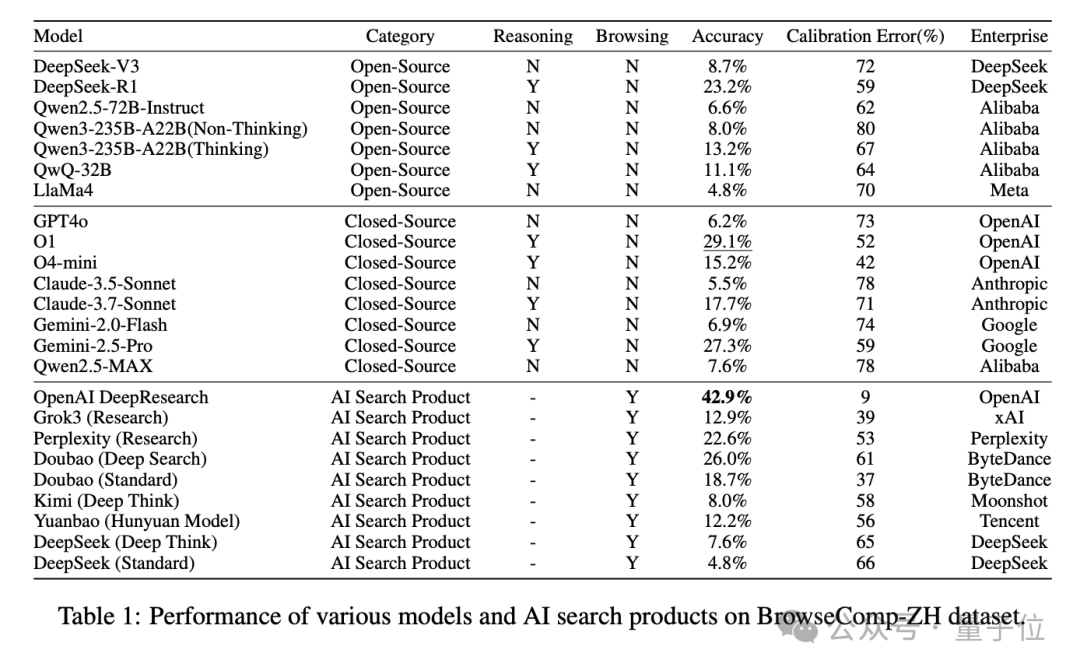

GPT-4o achieved an accuracy of only 6.2% in the test; most domestic/international models saw their accuracy drop below 10%; even OpenAI’s currently best-performing DeepResearch model scored only 42.9%.

All data from BrowseComp-ZH has been open-sourced and released.

The research team stated plainly:

Why Do We Need Tests for Chinese Web Capabilities?

Modern large models are increasingly adept at “using tools”: they can connect to search engines, invoke plugins, and “view webpages.”

However, many evaluation tools are built solely within English contexts, giving little consideration to Chinese contexts, Chinese search engines, or the Chinese platform ecosystem.

Yet, information on the Chinese internet is severely fragmented, with diverse search entry points and complex linguistic expressions.

How difficult is the Chinese web world? Consider these examples:

- Information is fragmented across multiple platforms such as Baidu Baike (Baidu Encyclopedia), Weibo, local government websites, and Video Accounts.

- Common language structures contain omissions, allusions, and references, causing keyword searches to often “miss the mark.”

- Search engine quality varies significantly; it is common for information to be buried or lost.

Therefore, simply “translating” English test sets is insufficient.

Tests must be natively designed within the Chinese context to truly measure whether large models can “understand,” “search effectively,” and “recommend accurately” on Chinese webpages.

How Was BrowseComp-ZH Developed?

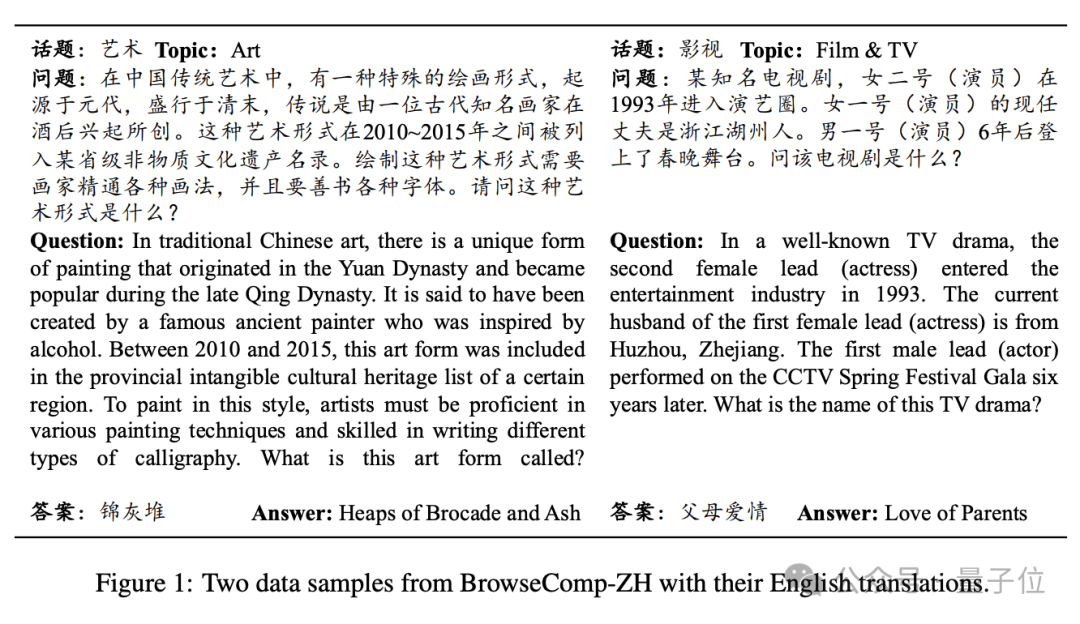

The research team adopted a “reverse design method”: starting from a clear, verifiable factual answer (such as a specific painting genre, institution, or film/TV title), they constructed complex questions with multiple constraints in reverse, ensuring the following three points:

- The first screen of results on Baidu/Bing/Google cannot directly hit the answer.

- Multiple mainstream large models cannot answer correctly even in retrieval mode.

- After manual verification, the question structure is clear and has only one unique answer.

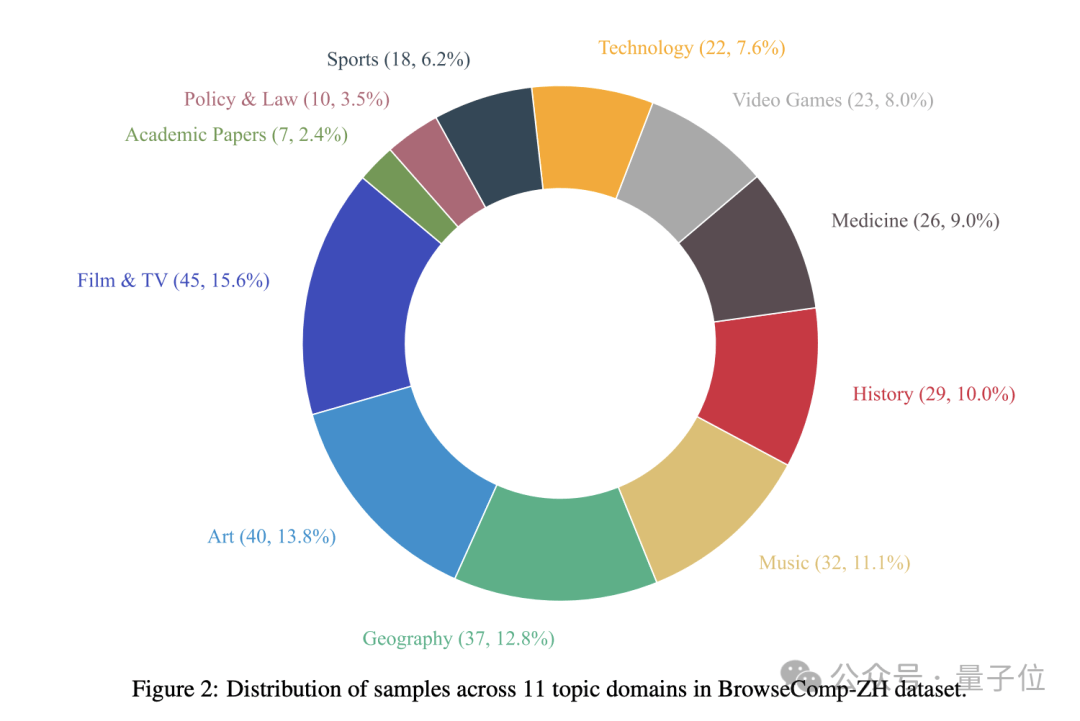

Ultimately, they constructed 289 high-difficulty Chinese multi-hop retrieval questions, covering 11 major domains including film/TV, art, medicine, geography, history, and technology.

Large Models Collectively “Crash”? DeepResearch Barely Breaks 40%, Most Fall Below 10%

Under the BrowseComp-ZH test, several major domestic and international large models collectively “crashed”:

Although these models have demonstrated strong capabilities in dialogue understanding and generative expression, their accuracy rates were surprisingly low when facing complex retrieval tasks on the Chinese internet:

- The accuracy of most models was below 10%, with only a few breaking through 20%.

- OpenAI DeepResearch ranked first with 42.9%, still far from “passing.”

Researchers pointed out that this result indicates: models need not only to know how to “look up information” but also to master “multi-hop reasoning” and “information integration” to truly find answers on the Chinese internet.

Four Key Findings Reveal “Model Blind Spots” in Chinese Web Tasks

1. Memory Alone Is Not Enough; Real Skill Is Required

Models relying purely on parameter memory (without search) often achieved accuracy rates below 10%, indicating that “hard memorization” is unreliable.

2. Models with Reasoning Capabilities Performed Better

DeepSeek-R1 (23.2%) outperformed DeepSeek-V3 (8.7%) by a full 14.5%, and Claude-3.7 improved over Claude-3.5 by 12.2%. Reasoning capability became the key variable.

3. Searching More ≠ Searching Accurately; Multi-Turn Strategy Is Key

AI search products with multi-turn retrieval capabilities won comprehensively:

- DeepResearch: 42.9%

- Doubao Deep Search: 26.0%

- Perplexity Research Mode: 22.6%

In contrast, models that only retrieve once (such as Kimi and Yuanbao) had accuracy rates as low as single digits.

4. “Crashing” in Search Functions? Integration Can Make Things Worse

The most typical counterexample is DeepSeek-R1, where enabling the search function caused its accuracy to plummet from 23.2% to 7.6%.

Research indicates that the model failed to effectively integrate webpage retrieval information with existing knowledge, instead being misled by it.

Dataset Open! Model Developers Invited to Challenge

All data for BrowseComp-ZH has been open-sourced and released.

Researchers hope this benchmark will serve as a touchstone for promoting the implementation of LLMs in Chinese information environments, aiding in the construction of agents that can truly “use the internet in Chinese.”

Next steps include expanding the sample size, diversifying question-and-answer formats, and conducting in-depth analysis of model reasoning paths and failure cases.

_Paper Address:

https://arxiv.org/abs/2504.19314

Code Address:

https://github.com/PALIN2018/BrowseComp-ZH

— End —