It’s getting out of hand. Tongyi Qianwen is truly going all out.

Just days after Qwen3-Coder made waves, Alibaba released Qwen3-235B-A22B-Thinking-2507, the strongest reasoning model yet in the open-source Qwen3 series.

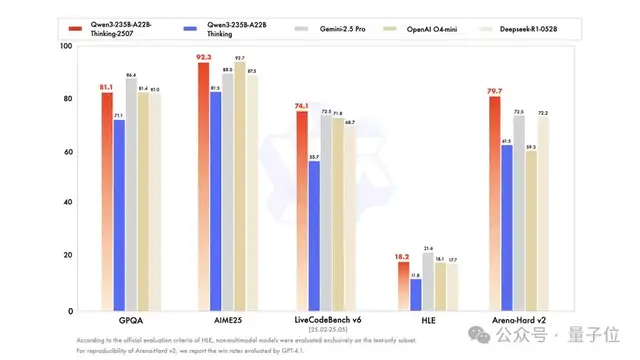

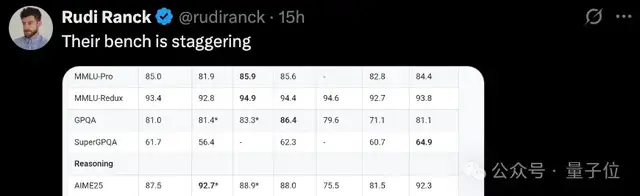

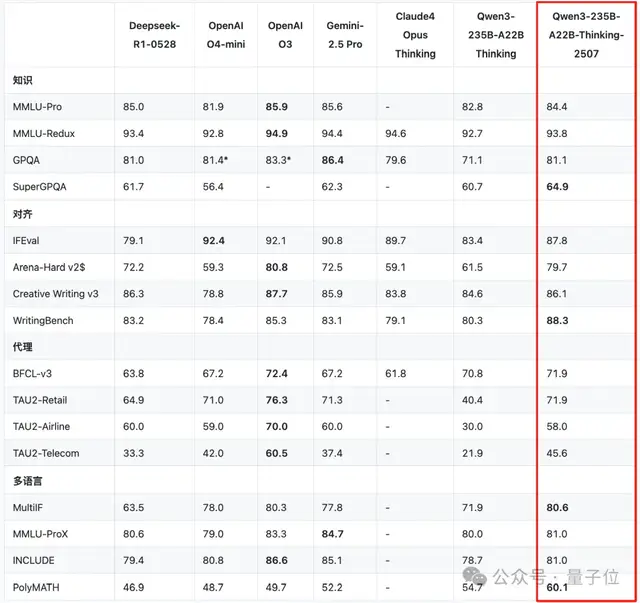

How strong is it? At launch it once again set new state-of-the-art (SOTA) results, earning the title of “world’s strongest open-source model” across a range of evaluations and rivaling top closed-source models such as Gemini-2.5 Pro and o4-mini.

International netizens are envious:

Notably, counting Qwen3-Coder and the general-purpose model Qwen3-235B-A22B-Instruct-2507 (non-thinking version) open-sourced two days earlier, Tongyi Qianwen has shipped three open-source releases in a single week.

And each release did more than go open source; each one reached SOTA, claiming in turn the title of world’s strongest open-source model for general-purpose, coding, and reasoning models.

This pace of model updates and iteration undoubtedly leads the world.

One has to wonder if Mark Zuckerberg is panicking (doge emoji).

New Qwen3 Reasoning Model Tops Global Open-Source Rankings

Just as DeepSeek R1 was built on V3, the new Qwen3 reasoning model is built on Qwen3-235B-A22B, the Mixture-of-Experts (MoE) version with 235 billion total parameters, of which 22 billion are activated per token.
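For readers new to the “A22B” naming: in an MoE model, a router sends each token to only a few expert sub-networks, so only part of the weights run for any given token. The toy Python sketch that follows illustrates that idea under loudly stated assumptions: the expert count, the value of k, and the router scores are made-up numbers rather than Qwen3’s actual configuration, and `top_k_experts` and `active_fraction` are hypothetical helpers written for illustration only.

```python
# Toy illustration of sparse Mixture-of-Experts (MoE) routing.
# Only the top-k experts picked by the router run for a given token,
# so only a fraction of the total parameters is "active" per token.
# ASSUMPTION: expert count, k, and scores are hypothetical toy values,
# not Qwen3's real configuration.

def top_k_experts(router_scores, k):
    """Return the indices of the k highest-scoring experts, best first."""
    ranked = sorted(range(len(router_scores)),
                    key=lambda i: router_scores[i],
                    reverse=True)
    return ranked[:k]


def active_fraction(active_params_b, total_params_b):
    """Share of parameters used per token (both counts in billions)."""
    return active_params_b / total_params_b


if __name__ == "__main__":
    # Router scores for one toy token over 8 hypothetical experts.
    scores = [0.1, 2.3, -0.5, 1.7, 0.9, 3.1, -1.2, 0.4]
    print(top_k_experts(scores, k=2))          # -> [5, 1]
    # 22B active out of 235B total, the figures in the model name.
    print(f"{active_fraction(22, 235):.1%}")   # -> 9.4%
```

In other words, each token in this setup touches roughly 9.4% of the model’s weights, which is why a 235B-parameter MoE can run with the per-token compute of a much smaller dense model.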

According to the official announcement, the new reasoning model significantly strengthens core capabilities in three areas:

- Significantly better performance on logical reasoning, mathematics, science, and coding tasks;

- Better instruction following, tool use, and text generation;

- Native support for a 256K context length, suited to highly complex reasoning tasks.

Topping the open-source rankings here is no marginal gain: a closer look at the evaluation scores shows real substance.

Let’s first look at reasoning capabilities.

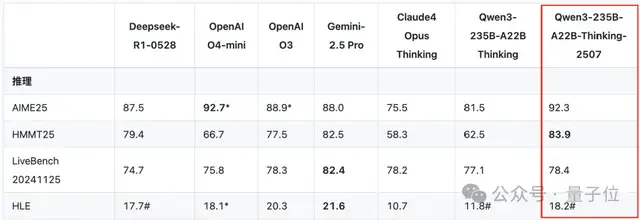

On Humanity’s Last Exam (HLE), an extremely difficult benchmark, the new 2507 reasoning model raised its score to 18.2, up from 11.8 for the first Qwen3 reasoning model released at the end of April.

That beats DeepSeek-R1-0528’s 17.7 and the 18.1 that OpenAI o4-mini scored in its high reasoning-effort mode.

In coding, the new Qwen3 reasoning model surpassed leading closed-source models such as Gemini-2.5 Pro on LiveCodeBench v6 and CFEval, setting new SOTA records.

Across knowledge, alignment, agent, and multilingual benchmarks, it also performed on par with closed-source models and reached open-source SOTA.

The benchmark numbers are undeniably excellent. But how does the new reasoning model hold up in actual use?

We conducted a simple test ourselves.

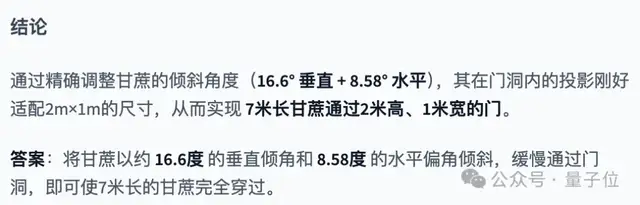

Consider the classic puzzle: how can a 7-meter-long sugarcane get through a door that is 2 meters high and 1 meter wide?

Qwen3-235B-A22B-Thinking-2507 thought for 43 seconds before giving its answer.

The thinking process was as follows:

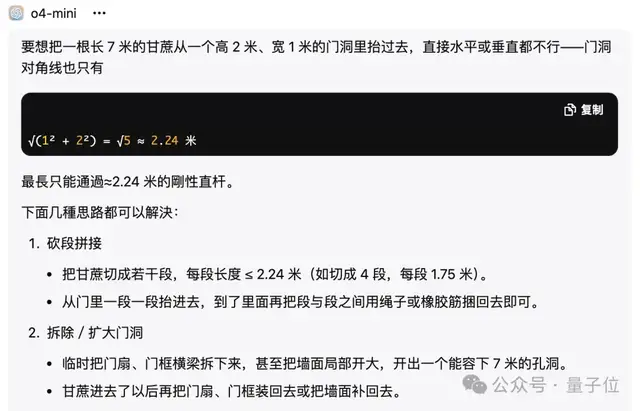

By contrast, o4-mini’s answer was shorter and blunter.

Trio of Open-Source Releases, Claiming Three SOTAs

As mentioned earlier, this brand new reasoning model is actually Alibaba’s third open-source release of the week.

To summarize the vibe:

The first two releases left everyone dizzy with excitement as they tested and deployed them. Just when things were heating up, the “king of grinding” at Tongyi Lab slapped down another pair of aces.

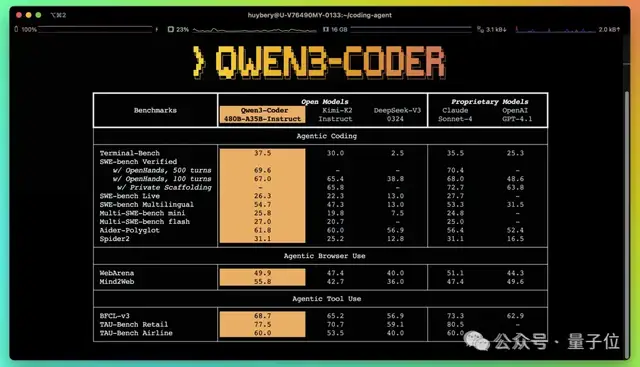

Take Qwen3-Coder, for instance. On release it set a new SOTA for AI coding, surpassing open-source rivals DeepSeek V3 and Kimi K2 and even beating top closed-source models such as Claude Sonnet 4.

When netizens tested it, its bouncing-ball demo looked like this:

Industry heavyweights such as Hugging Face CEO Clement Delangue and Perplexity CEO Aravind Srinivas promptly joined the discussion and liked the posts:

This is a victory for open source.

The popularity of Qwen3-Coder has driven a surge in Alibaba’s Tongyi API call volume.

Data from OpenRouter, a well-known overseas model API aggregation platform, shows that calls to Alibaba’s Tongyi API exceeded 100 billion tokens over the past few days, sweeping the top three spots on OpenRouter’s global trending list and making Qwen the hottest model family of the moment.

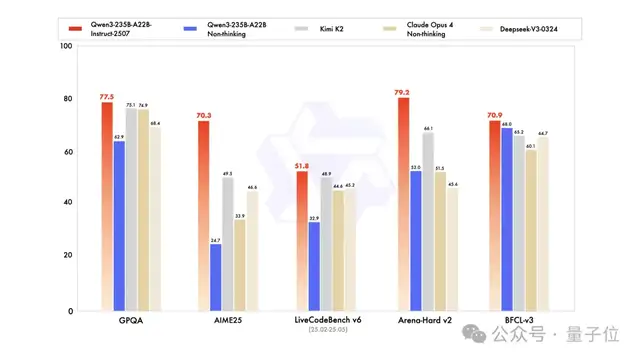

Among general-purpose models, the latest Qwen3 release, Qwen3-235B-A22B-Instruct-2507 (non-thinking version), has also topped the global open-source rankings. It performed exceptionally well on evaluations including GPQA (knowledge), AIME25 (mathematics), LiveCodeBench (coding), Arena-Hard (human preference alignment), and BFCL (agent capabilities), surpassing leading closed-source models such as Claude 4 (non-thinking).

Chinese Open Source Reaches the World’s Forefront

Three open-source releases in a row, claiming three championships. For China’s open-source community, this may just be the beginning.

Let’s be honest: ever since DeepSeek went viral and Llama 4 stumbled, the most active force in open source, the one setting the new trends, has been that “mysterious Eastern power.”

Whenever a new open-source king emerges—DeepSeek, Qwen, Kimi—it always seems to be “made in China.”

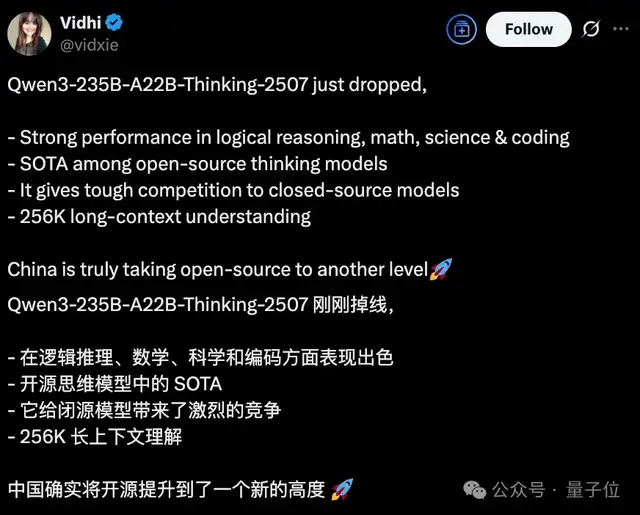

The view that “China has truly taken open source to a new level” is increasingly discussed and widely shared.

As Jensen Huang put it during his latest visit to Beijing, when it comes to open-source models, “China’s development speed is extremely fast.”

Take Qwen as an example: Alibaba has open-sourced more than 300 Tongyi large models, and the number of derivative models built on Tongyi Qianwen has passed 140,000, overtaking the previous global open-source leader, Meta’s Llama series, to become the world’s largest open-source model family.

Alibaba has also announced that it will invest more than 380 billion yuan over the next three years in cloud and AI hardware infrastructure, continuously upgrading its full-stack AI capabilities.

More importantly, the gap between open-source and closed-source models is narrowing at this “China speed.”

No one knows yet when the two curves will cross, but Chinese models have undeniably secured a place at the forefront of the global landscape.

— End —