Submitted by the AdvML Team

The IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), one of the most influential conferences in artificial intelligence, will be held from June 11 to June 15, 2025, in Nashville, Tennessee, USA.

At the conference, the 5th Workshop on Adversarial Machine Learning will be co-organized by institutions including Beihang University, Zhongguancun Laboratory, and Nanyang Technological University. Under the theme Foundation Models + X, the workshop will examine robustness challenges in foundation models (FM) and their domain-specific applications (XFM).

Thematic Focus: Foundation Models + X

Foundation models (FM), with their powerful generative capabilities, have reshaped multiple fields, including computer vision. Building on them, domain-specific foundation models (XFM), such as autonomous driving FM and medical FM, improve performance on specialized tasks through training on curated datasets and task-specific architecture modifications. However, as XFM applications spread, their vulnerability to adversarial attacks is increasingly exposed. Such attacks can cause input images or prompts to be misclassified, or even steer the model into generating outputs chosen by the adversary, posing significant threats to safety-critical applications such as autonomous driving and medical diagnosis.

Call for Papers

A Best Workshop Paper Award will be presented. The workshop invites submissions on topics including, but not limited to, the following:

- Robustness of X-domain-specific foundation models

- Adversarial attacks on computer vision tasks

- Improving the robustness of deep learning systems

- Interpreting and understanding model robustness, especially foundation models

- Adversarial attacks for social good

- Datasets and benchmarks that evaluate foundation model robustness

Important Dates

Paper Submission Opens: February 1, 2025

Abstract Submission Deadline: March 15, 2025

Full Paper Submission Deadline: March 20, 2025

Notification of Acceptance: March 31, 2025

Final Version Submission: April 7, 2025

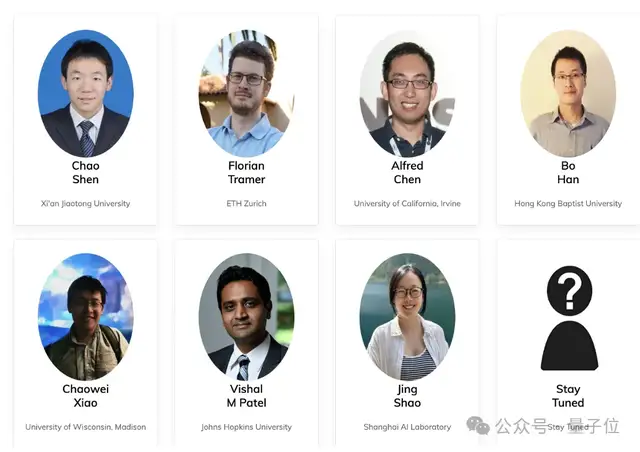

Keynote Speakers

…

More speakers to be announced soon!

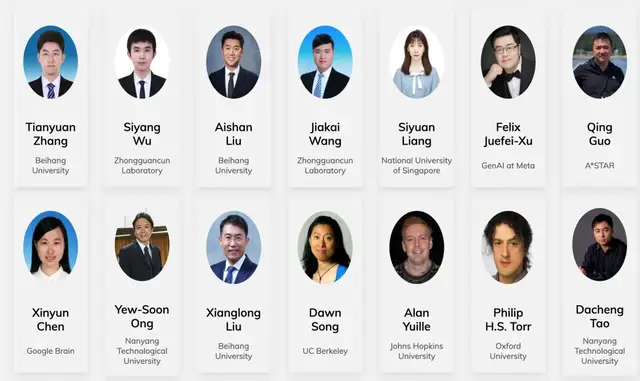

Organizing Committee

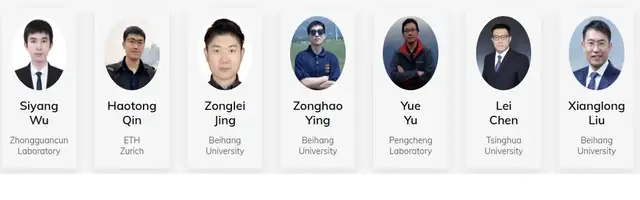

Program Committee

Competition

The workshop will also host a competition on adversarial attacks against Multimodal Large Language Models (MLLMs). Taking a “red team” perspective, the challenge invites developers, researchers, and enthusiasts worldwide to design and submit image-text pairs that trigger MLLMs to generate harmful, inappropriate, or illegal content. The goal is to expose MLLM vulnerabilities, drive innovation in security technologies, and point to directions for improving future models.

The competition has two stages, a preliminary round and a final round. In the preliminary round, the organizers will provide harmful text queries across several risk categories, and participants must design corresponding adversarial image-text pairs that induce unsafe outputs from the MLLM. The final round has the same objective but uses more difficult harmful text queries across various risk categories. Teams will be scored on their attack success rates in the final round, which will determine the winners.
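The official scoring procedure has not been published yet, so the following is only a minimal sketch of how an attack success rate could be computed, assuming it is defined as the fraction of submitted image-text pairs that are judged to have induced an unsafe output. The function name, the category labels, and the boolean "succeeded" flag are hypothetical and chosen purely for illustration.

```python
from collections import defaultdict


def attack_success_rate(results):
    """Compute overall and per-category attack success rates.

    `results` is a list of (risk_category, succeeded) pairs, where
    `succeeded` is True if the attempt was judged to induce an unsafe
    output. This definition is an assumption, not the official metric.
    """
    if not results:
        raise ValueError("no attack attempts to score")

    # Map each category to [number of successes, number of attempts].
    counts = defaultdict(lambda: [0, 0])
    for category, succeeded in results:
        counts[category][0] += int(succeeded)
        counts[category][1] += 1

    overall = sum(s for s, _ in counts.values()) / len(results)
    by_category = {c: s / n for c, (s, n) in counts.items()}
    return overall, by_category


# Hypothetical attempts; the category names are placeholders.
attempts = [
    ("category_a", True),
    ("category_a", False),
    ("category_b", True),
    ("category_b", True),
]
overall, by_category = attack_success_rate(attempts)
```

Reporting the rate per risk category alongside the overall figure makes it easier to see whether an attack generalizes or only works on one kind of query. How success is judged, and whether categories are weighted, is left to the organizers' forthcoming rules.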

Detailed competition information will be announced later on the workshop website. Researchers worldwide are encouraged to stay tuned and participate!

Challenge Chair:

Competition Organizers and Co-organizers:

The 5th CVPR Workshop on Adversarial Machine Learning

https://cvpr25-advml.github.io/

Paper Submission Portal: https://openreview.net/group?id=thecvf.com/CVPR/2025/Workshop/Advml