On March 29, at the “Future AI Pioneer Forum” of the 2025 Zhongguancun Forum, the Beijing Academy of Artificial Intelligence (BAAI) unveiled RoboOS, its first cross-embodiment cerebellum-cerebrum collaboration framework, along with RoboBrain, an open-source embodied brain. The two enable lightweight, rapid deployment across scenarios for multi-task and cross-embodiment collaboration, moving robots from single-machine intelligence toward swarm intelligence. They also provide foundational technical support for accelerating scenario-based applications and for building a unified, open-source embodied AI ecosystem.

△ Demo of cross-embodiment collaborative delivery tasks among multiple robots based on RoboOS and RoboBrain

Video Link:

https://mp.weixin.qq.com/s/APgi5k53hrJo8lpxcAkE-g

Enhancing Long-Horizon Task Capabilities: Building a Perception-Cognition-Decision-Action Closed Loop

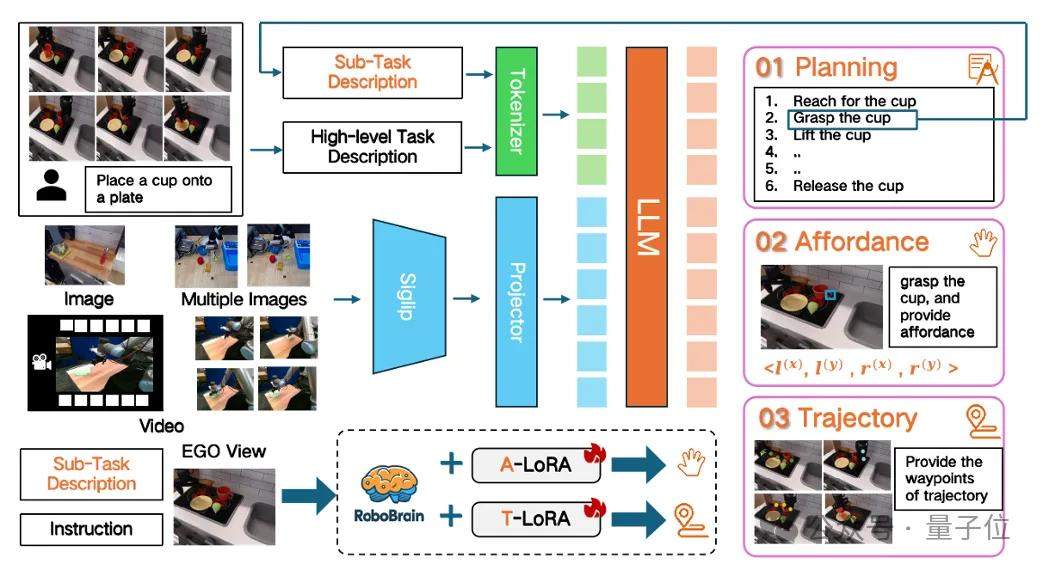

In embodied AI scenarios, long-horizon manipulation is a core capability for robots performing complex operations. The embodied brain RoboBrain integrates three capabilities: robot task planning, affordance perception, and trajectory prediction. By mapping abstract instructions to concrete action sequences, it strengthens the robot’s ability to handle long-horizon tasks.

RoboBrain consists of three modules: a foundation model for task planning, an A-LoRA module for affordance perception, and a T-LoRA module for trajectory prediction. During inference, the model first perceives the visual input and decomposes the instruction into a series of executable sub-tasks, then performs affordance perception and trajectory prediction. A multi-stage training strategy gives RoboBrain long-term memory of historical frames and high-resolution image perception, improving its scene understanding and operation planning.
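The article does not publish RoboBrain's inference interface. The minimal Python sketch below only illustrates the ordering described above (plan first, then affordance perception and trajectory prediction for each sub-task). `plan`, `perceive_affordance`, `predict_trajectory`, and the box and waypoint formats are hypothetical stand-ins, not RoboBrain's actual API or model outputs.

```python
from dataclasses import dataclass
from typing import List, Tuple

Box = Tuple[float, float, float, float]   # (x1, y1, x2, y2) in pixels
Point = Tuple[float, float]               # 2D waypoint in image coordinates


@dataclass
class SubTaskResult:
    subtask: str
    affordance: Box
    trajectory: List[Point]


def plan(instruction: str) -> List[str]:
    """Hypothetical stand-in for the task-planning foundation model.
    A real planner conditions on the image; here we simply split on ' then '."""
    return [s.strip() for s in instruction.split(" then ") if s.strip()]


def perceive_affordance(subtask: str, image_size: Tuple[int, int]) -> Box:
    """Hypothetical stand-in for the A-LoRA module: returns a fixed central box."""
    w, h = image_size
    return (0.25 * w, 0.25 * h, 0.75 * w, 0.75 * h)


def predict_trajectory(box: Box, n_points: int = 5) -> List[Point]:
    """Hypothetical stand-in for the T-LoRA module: a straight line from the
    image origin to the center of the affordance box."""
    cx, cy = (box[0] + box[2]) / 2, (box[1] + box[3]) / 2
    return [(cx * i / (n_points - 1), cy * i / (n_points - 1)) for i in range(n_points)]


def run_pipeline(instruction: str, image_size: Tuple[int, int]) -> List[SubTaskResult]:
    """Plan first, then run affordance perception and trajectory prediction per sub-task."""
    results = []
    for sub in plan(instruction):
        box = perceive_affordance(sub, image_size)
        results.append(SubTaskResult(sub, box, predict_trajectory(box)))
    return results


for r in run_pipeline("pick up the cup then place it on the tray", (640, 480)):
    print(r.subtask, r.affordance, r.trajectory[-1])
```

Keeping the three stages as separate functions mirrors the split into one planning model and two LoRA modules: any stage can be replaced without touching the others.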

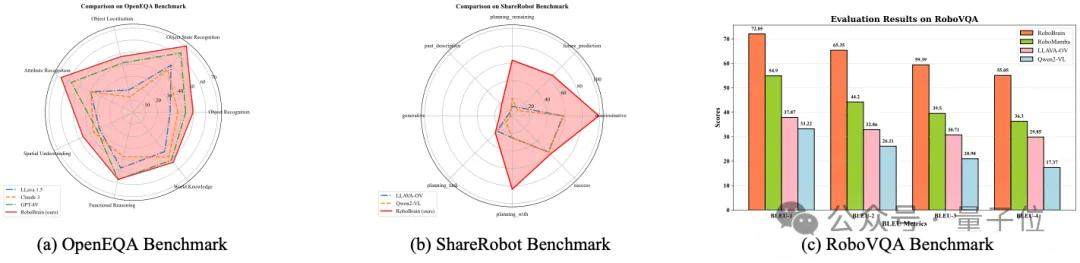

RoboBrain performs strongly in evaluations of task planning, affordance perception, and trajectory prediction.

In task planning, RoboBrain outperforms six leading closed- and open-source multimodal large language models (MLLMs), including GPT-4V and Claude 3, across multiple dimensions on robot planning benchmarks such as OpenEQA, ShareRobot (built by BAAI), and RoboVQA, without compromising its general capabilities.

△ RoboBrain Performance on Embodied Planning Benchmarks

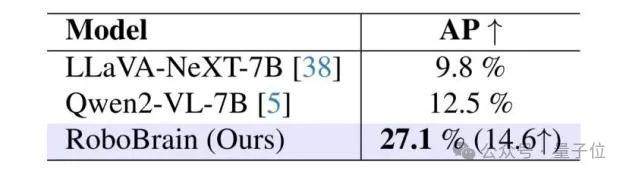

In affordance perception, RoboBrain’s average accuracy on the AGD20K test set surpassed that of Qwen2-VL, then the state-of-the-art open-source model, reflecting its strength in instruction understanding and object-attribute recognition.

△ RoboBrain Performance on Affordance Perception Benchmarks

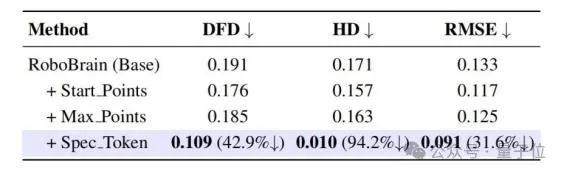

△ RoboBrain Performance on Trajectory Prediction Benchmarks

In trajectory prediction, the operational trajectories predicted by RoboBrain exhibit high similarity to real-world trajectories, demonstrating high precision and stability. Future iterations of RoboBrain will continue to enhance its trajectory prediction capabilities.
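The article does not say which metric is used to measure trajectory similarity. One common choice for comparing a predicted 2D trajectory with a ground-truth one is the discrete Fréchet distance, sketched below as an assumption: lower values mean more similar trajectories, and 0 means the two point sequences coincide.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def discrete_frechet(p: Sequence[Point], q: Sequence[Point]) -> float:
    """Discrete Fréchet distance between two polylines, via dynamic programming.
    ca[i][j] is the distance for the prefixes p[:i+1] and q[:j+1]."""
    n, m = len(p), len(q)
    if n == 0 or m == 0:
        raise ValueError("trajectories must be non-empty")
    ca = [[0.0] * m for _ in range(n)]
    for i in range(n):
        for j in range(m):
            d = math.dist(p[i], q[j])
            if i == 0 and j == 0:
                ca[i][j] = d
            elif i == 0:
                ca[i][j] = max(ca[0][j - 1], d)
            elif j == 0:
                ca[i][j] = max(ca[i - 1][0], d)
            else:
                ca[i][j] = max(min(ca[i - 1][j], ca[i - 1][j - 1], ca[i][j - 1]), d)
    return ca[n - 1][m - 1]


predicted = [(0.0, 0.0), (1.0, 0.1), (2.0, 0.0)]
ground_truth = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
print(discrete_frechet(predicted, ground_truth))
```

Unlike a pointwise mean error, this measure respects the order of waypoints, which matters when judging whether a predicted manipulation path follows the real one.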

Currently, RoboBrain can interpret human instructions and visual images to generate action plans and evaluations based on real-time image feedback, predict the trajectory for each step, and perceive corresponding affordances. Specifically, RoboBrain effectively utilizes environmental information and the state of interactive objects—whether captured from first-person or third-person perspectives—to generate task plans tailored to different types of robotic manipulation tasks. Based on human instructions and visual data, it provides reasonable affordance regions and demonstrates strong generalization across various scenarios, generating trajectories that are both feasible and logical.

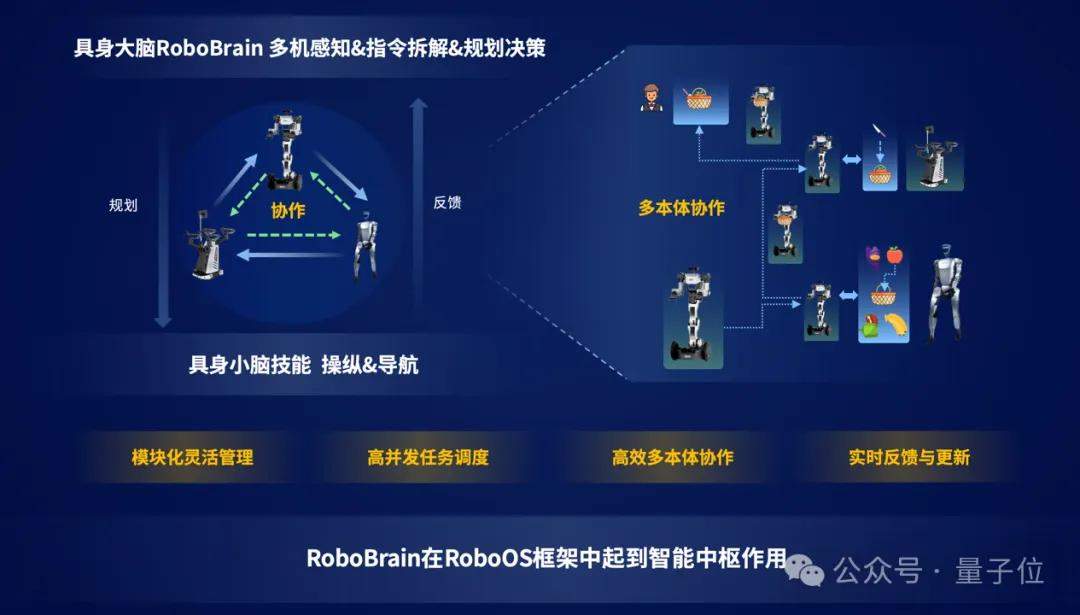

The embodied brain RoboBrain, the cerebellum skill library, and the cross-robot data hub are the core components of the cross-embodiment framework RoboOS. RoboBrain handles global perception and decision-making, providing mechanisms for dynamic spatiotemporal perception, planning guidance, and feedback-based error correction. The cerebellum skill library handles low-latency, precise execution, enabling flexible and dexterous operations. The cross-robot data hub shares spatial, temporal, and embodiment memories in real time, supplying the information needed for decision planning and optimized collaboration. Together, these components form a closed loop of perception, cognition, decision, and action.

One Brain, Multiple Robots: Achieving Cross-Embodiment Collaboration from Single-Agent to Swarm Intelligence

The cross-embodiment cerebellum-cerebrum collaboration framework, RoboOS, is based on a “brain-cerebellum” hierarchical architecture. Through modular design, intelligent task management, and cross-embodiment collaboration, it provides efficient, flexible, and scalable underlying support for robots, enabling the leap from single-machine intelligence to swarm intelligence.

Under RoboOS’s hierarchical architecture, RoboBrain’s perception and decision-making in complex scenes are deeply integrated with the efficient execution of the cerebellum skill library, ensuring stable operation in long-cycle, highly dynamic tasks. This enables “plug-and-play” integration between brain models (such as LLMs/VLMs) and cerebellum skills (such as grasping and navigation). RoboOS currently supports various robot embodiments, including Unitree’s dual-arm robots, Realman’s single-arm and dual-arm robots, Zhiyuan humanoid robots, and Unitree G1 humanoid robots.
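RoboOS's skill interface is not specified in the article. As a hypothetical illustration of “plug-and-play” skills, the sketch below registers skills by name in an invented `SkillLibrary` class, so a brain-side planner only needs to know a skill's name and arguments. The skill names and stub behaviors are assumptions, not RoboOS identifiers.

```python
from typing import Callable, Dict, List

SkillFn = Callable[..., str]


class SkillLibrary:
    """Hypothetical cerebellum-side registry: skills are plugged in by name."""

    def __init__(self) -> None:
        self._skills: Dict[str, SkillFn] = {}

    def register(self, name: str) -> Callable[[SkillFn], SkillFn]:
        """Decorator that adds a skill under `name` (re-registering replaces it)."""
        def decorator(fn: SkillFn) -> SkillFn:
            self._skills[name] = fn
            return fn
        return decorator

    def call(self, name: str, **kwargs) -> str:
        """Invoke a skill by name, as a brain-side planner would."""
        if name not in self._skills:
            raise KeyError(f"skill {name!r} is not registered")
        return self._skills[name](**kwargs)

    def available(self) -> List[str]:
        return sorted(self._skills)


library = SkillLibrary()


@library.register("navigate")
def navigate(target: str) -> str:
    # A real skill would drive the mobile base; this stub only reports the outcome.
    return f"arrived at {target}"


@library.register("grasp")
def grasp(obj: str) -> str:
    # A real skill would run a low-level grasp controller.
    return f"grasped {obj}"


print(library.available())                        # ['grasp', 'navigate']
print(library.call("grasp", obj="fruit basket"))  # grasped fruit basket
```

Because the planner addresses skills only by name, an open-source skill and a self-developed controller can be swapped behind the same name without changing brain-side code.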

By sharing a memory system (spatial, temporal, and embodiment memory), RoboOS achieves state synchronization and intelligent collaboration among multiple robots, breaking down traditional “information silos” to enable cross-embodiment collaborative control.
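The article names the three memory types but not their data structures. The following is a minimal sketch, assuming a single in-process store where one robot writes and any robot (or the brain) reads; the `SharedMemory` class, its methods, and the robot ID `realman-1` are hypothetical.

```python
import time
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class SharedMemory:
    """Hypothetical shared store holding the three memory types named in the text."""
    spatial: Dict[str, Tuple[float, float]] = field(default_factory=dict)  # place/object -> (x, y)
    temporal: List[Tuple[float, str, str]] = field(default_factory=list)   # (time, robot, event)
    embodiment: Dict[str, Dict[str, str]] = field(default_factory=dict)    # robot -> state fields

    def remember_place(self, name: str, xy: Tuple[float, float]) -> None:
        self.spatial[name] = xy

    def log_event(self, robot: str, event: str, t: Optional[float] = None) -> None:
        self.temporal.append((time.time() if t is None else t, robot, event))

    def set_state(self, robot: str, **state: str) -> None:
        self.embodiment.setdefault(robot, {}).update(state)

    def history(self, robot: str) -> List[str]:
        """Events for one robot, in the order they were logged."""
        return [event for _, r, event in self.temporal if r == robot]


memory = SharedMemory()
# One robot writes what it observed and what it is doing...
memory.remember_place("office desk", (4.0, 2.5))
memory.set_state("realman-1", status="carrying", payload="fruit basket")
memory.log_event("realman-1", "picked up fruit basket", t=1.0)
# ...and any other robot (or the brain) reads the same state.
print(memory.spatial["office desk"], memory.embodiment["realman-1"]["status"])
```

In a real multi-robot deployment this store would sit behind a network service rather than in one process; the sketch only shows why a common schema lets robots avoid “information silos.”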

RoboOS dynamically manages multi-robot task queues, supporting priority preemption and optimized resource allocation. This enables high-concurrency task scheduling with real-time response in complex scenarios.
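The scheduling policy is not detailed in the article. A minimal sketch of priority preemption for one robot's queue can be built on Python's `heapq`, assuming lower numbers mean higher priority and that a preempted task is re-queued to resume later. `Task` and `RobotTaskQueue` are hypothetical names.

```python
import heapq
import itertools
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(order=True)
class Task:
    priority: int                     # lower value = more urgent
    seq: int                          # tie-breaker: FIFO among equal priorities
    name: str = field(compare=False)


class RobotTaskQueue:
    """Hypothetical per-robot queue with priority preemption."""

    def __init__(self) -> None:
        self._heap: List[Task] = []
        self._counter = itertools.count()
        self.running: Optional[Task] = None

    def submit(self, name: str, priority: int) -> Optional[Task]:
        """Queue a task; if it is more urgent than the running task, preempt
        the running task (re-queuing it) and return it. Otherwise return None."""
        task = Task(priority, next(self._counter), name)
        if self.running is not None and task.priority < self.running.priority:
            preempted = self.running
            heapq.heappush(self._heap, preempted)
            self.running = task
            return preempted
        heapq.heappush(self._heap, task)
        if self.running is None:
            self.running = heapq.heappop(self._heap)
        return None

    def finish(self) -> Optional[Task]:
        """Mark the running task done and start the most urgent waiting task."""
        done = self.running
        self.running = heapq.heappop(self._heap) if self._heap else None
        return done


q = RobotTaskQueue()
q.submit("patrol", priority=5)
q.submit("deliver fruit basket", priority=1)   # preempts "patrol"
q.submit("recharge", priority=3)
print(q.running.name)                          # deliver fruit basket
```

The sequence counter keeps ordering stable among equal priorities, so a preempted task resumes ahead of later tasks of the same priority.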

Furthermore, RoboOS dynamically adjusts strategies based on execution feedback and environmental changes, continuously optimizing task planning to enhance robustness and achieve real-time closed-loop optimization.
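How RoboOS adjusts its strategy on feedback is not described in detail. The sketch below shows the general closed-loop pattern under stated assumptions: each step reports success or failure, and a planner can return replacement steps for a failed step. `run_closed_loop`, `execute`, and `replan` are hypothetical, and the failure is simulated.

```python
from typing import Callable, Dict, List


def run_closed_loop(
    plan: List[str],
    execute: Callable[[str], bool],
    replan: Callable[[str], List[str]],
    max_replans: int = 3,
) -> List[str]:
    """Execute plan steps in order. When a step reports failure, ask the
    planner for replacement steps and put them at the front of the queue."""
    log: List[str] = []
    queue = list(plan)
    replans = 0
    while queue:
        step = queue.pop(0)
        if execute(step):
            log.append(f"ok: {step}")
            continue
        log.append(f"failed: {step}")
        if replans >= max_replans:
            raise RuntimeError(f"giving up on {step!r} after {replans} replans")
        replans += 1
        queue = replan(step) + queue
    return log


# Simulated feedback: the first grasp attempt fails, later attempts succeed.
attempts: Dict[str, int] = {}


def execute(step: str) -> bool:
    attempts[step] = attempts.get(step, 0) + 1
    return not (step == "grasp fruit knife" and attempts[step] == 1)


def replan(failed: str) -> List[str]:
    return ["re-detect fruit knife", failed]   # look again, then retry


print(run_closed_loop(["move to table", "grasp fruit knife"], execute, replan))
```

Capping the number of replans keeps a persistently failing step from looping forever; a real system would escalate to the brain or an operator at that point.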

In a “deliver apples and fruit knives” scenario built on RoboOS and RoboBrain, the work is divided among three robots: a Realman single-arm robot (transport), a Unitree G1 humanoid (fruit selection), and a Unitree dual-arm robot (fruit-knife selection).

In the overall task flow, the Realman robot first calls “navigation skills” to move to the dining table. The Unitree G1 calls “visual grasping skills” to select the specified objects. The Realman robot then calls “grasping skills” to lift the fruit basket and navigates to the Unitree dual-arm robot at the table. Next, the Unitree dual-arm robot calls “grasping skills” to retrieve a fruit knife and place it in the center of the fruit basket. Finally, the Realman robot navigates to an office desk using “spatial memory,” delivers the fruit basket, and returns to standby.

Upon receiving the instruction “pick the fruit closest to the cup and deliver a fruit knife”, RoboBrain decomposes the task via its embodied brain module and distributes the decomposed sub-tasks to the three cross-embodiment robots. The embodied brain perceives the environment through “spatial memory,” identifies the locations of the fruit basket and apples, and breaks down the task into: “Unitree G1 selects apple → Realman transports fruit basket → Unitree dual-arm robot grasps fruit knife → Realman returns.”
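The decomposition above can be written down as data. The sketch below encodes the four demo sub-tasks and groups them per robot, as a dispatcher might. The skill identifiers (`visual_grasp`, `grasp_and_navigate`, and so on), the `SubTask` type, and the `dispatch` function are hypothetical labels, not RoboOS identifiers.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class SubTask:
    robot: str
    skill: str     # hypothetical skill identifier
    target: str


# The demo decomposition, in execution order (identifiers are illustrative).
DEMO_PLAN: List[SubTask] = [
    SubTask("Unitree G1", "visual_grasp", "apple closest to the cup"),
    SubTask("Realman single-arm", "grasp_and_navigate", "fruit basket"),
    SubTask("Unitree dual-arm", "grasp_and_place", "fruit knife"),
    SubTask("Realman single-arm", "navigate_and_deliver", "office desk"),
]


def dispatch(plan: List[SubTask]) -> Dict[str, List[str]]:
    """Group sub-tasks by robot, tagging each with its global step index
    (which a scheduler could use to enforce cross-robot ordering)."""
    per_robot: Dict[str, List[str]] = {}
    for step, sub in enumerate(plan):
        per_robot.setdefault(sub.robot, []).append(f"{step}:{sub.skill}({sub.target})")
    return per_robot


for robot, steps in dispatch(DEMO_PLAN).items():
    print(robot, steps)
```

Keeping the global step index matters here because the robots depend on each other: the dual-arm robot cannot place the knife until the Realman robot has brought the basket.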

While each robot embodiment executes its sub-tasks, RoboOS provides edge-cloud collaboration, decomposing tasks down to skill-level granularity: RoboBrain distributes plans from the cloud, while skills execute on the edge with real-time feedback. The embodied brain identifies the “location of the fruit closest to the cup,” the “affordance for grasping the fruit basket,” the “affordance for grasping the fruit knife,” and “pointing to an empty spot in the fruit basket.” RoboOS delivers these results to guide each robot embodiment through its tasks.

“Plug-and-Play” Rapid, Lightweight, Generalized Deployment: Building a Unified Ecosystem

RoboOS, as a cross-embodiment cerebellum-cerebrum collaboration framework for multi-robot systems, is specifically designed to address challenges in general adaptability and multi-machine scheduling during the implementation of embodied AI. Addressing pain points such as difficulty in unifying access for heterogeneous embodiments, low task scheduling efficiency, and lack of dynamic error feedback mechanisms, RoboOS adopts a “cerebellum-cerebrum synergy” architectural paradigm. The cloud-based embodied brain RoboBrain is responsible for unified task understanding, planning decision-making, and context awareness, while the embodiment side integrates lightweight cerebellum execution modules to achieve closed-loop collaboration of perception-cognition-decision-action.

This mechanism dynamically perceives embodiment differences, flexibly adapts operation instructions, and automatically repairs abnormal behaviors, effectively enhancing the system’s robustness and generalization in complex task scenarios. RoboOS natively supports flexible access for heterogeneous robot embodiments, using a Profile template mechanism to quickly complete robot capability modeling and adaptation.
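The Profile template format is not published in the article. As a hypothetical illustration of capability modeling, the sketch below describes each robot with a small profile and selects the robots whose declared skills cover a request. The fields, robot names, and skill sets are illustrative assumptions, not the robots' actual specifications or the RoboOS schema.

```python
from dataclasses import dataclass, field
from typing import Iterable, List, Set


@dataclass
class RobotProfile:
    """Hypothetical profile fields; the real RoboOS Profile schema may differ."""
    name: str
    embodiment: str                       # e.g. "single-arm", "dual-arm", "humanoid"
    skills: Set[str] = field(default_factory=set)
    mobile: bool = False


def capable_robots(profiles: Iterable[RobotProfile], required: Set[str],
                   need_mobility: bool = False) -> List[str]:
    """Names of robots whose declared skills cover every required skill."""
    return [p.name for p in profiles
            if required <= p.skills and (p.mobile or not need_mobility)]


# Illustrative profiles only.
profiles = [
    RobotProfile("realman-1", "single-arm", {"navigate", "grasp"}, mobile=True),
    RobotProfile("g1-1", "humanoid", {"visual_grasp"}, mobile=True),
    RobotProfile("dual-arm-1", "dual-arm", {"grasp", "place"}),
]
print(capable_robots(profiles, {"grasp"}))                      # ['realman-1', 'dual-arm-1']
print(capable_robots(profiles, {"grasp"}, need_mobility=True))  # ['realman-1']
```

With capabilities declared up front, adding a new embodiment means writing one profile rather than changing the planner.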

The embodiment’s cerebellum module can call various skill interfaces, including open-source skill libraries and self-developed low-level controllers, forming an operational system that supports modular reuse and plug-and-play functionality, significantly lowering development barriers and integration costs.

On the cloud side, RoboOS provides comprehensive model adaptation and API access capabilities, compatible with self-developed multimodal VLMs, acting as a pluggable brain decision engine. This supports multi-robot collaboration for complex tasks in fields such as service robots, industrial automation, smart logistics, and intelligent manufacturing.

Leveraging RoboOS’s edge-cloud integrated collaborative capabilities and dynamic scheduling mechanisms, the entire system possesses high scalability and portability, laying a general operating-system-level foundation for the large-scale deployment and ecosystem construction of future embodied AI.

RoboOS is built upon FlagScale, BAAI’s parallel training and inference framework. It natively supports edge-cloud collaboration for multi-robot systems, creating a unified foundation for embodied AI. The system design fully considers “multi-robot-multi-modal-multi-task” scenarios, offering extremely high scalability and low-latency response capabilities.

In edge-side deployment, robots automatically establish bidirectional communication links with the cloud-deployed RoboBrain upon registration. An efficient publish-subscribe mechanism provides real-time task scheduling and state feedback, with instruction response latency under 10 ms, meeting the closed-loop control requirements of complex dynamic tasks.
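The article does not name RoboOS's transport. The pattern itself can be sketched with a minimal in-process broker: the cloud side publishes tasks on a per-robot topic, and the robot replies on a shared state topic. The `Broker` class and topic names such as `tasks/realman-1` are hypothetical, and the sketch does not model network latency or delivery guarantees.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List

Handler = Callable[[dict], None]


class Broker:
    """Minimal in-process publish-subscribe broker (a stand-in for a real
    network transport; it does not model latency or delivery guarantees)."""

    def __init__(self) -> None:
        self._subs: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subs[topic].append(handler)

    def publish(self, topic: str, message: dict) -> int:
        """Deliver `message` to every handler on `topic`; return how many received it."""
        handlers = list(self._subs.get(topic, []))
        for handler in handlers:
            handler(message)
        return len(handlers)


broker = Broker()
feedback: List[dict] = []
broker.subscribe("state", feedback.append)       # brain side: collect state feedback


def on_task(msg: dict) -> None:
    # Robot side: acknowledge each task by publishing state feedback.
    broker.publish("state", {"robot": "realman-1", "task": msg["task"], "status": "accepted"})


broker.subscribe("tasks/realman-1", on_task)     # subscribing here stands in for registration
broker.publish("tasks/realman-1", {"task": "navigate to dining table"})
print(feedback)
```

Decoupling publishers from subscribers is what lets robots join at registration time without the brain knowing about them in advance.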

To handle the massive perception and behavioral data that robots generate during long-term operation, RoboOS provides a memory-optimized data access engine that supports random access to terabyte-scale historical data. This underpins scenarios such as task reproduction, anomaly tracing, and cross-task knowledge transfer. Combined with RoboBrain’s task reasoning and strategy optimization modules, historical data can also be shared among multiple robots as collaborative knowledge, supporting stronger intelligent evolution and autonomous learning.
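The internals of this data access engine are not described. One standard technique for random access into very large logs without loading them into RAM is to store fixed-size binary records and memory-map the file, so that record `i` sits at offset `i * record_size`. The sketch below assumes a hypothetical 24-byte record layout (timestamp, robot ID, x, y, z) and is not RoboOS's actual storage format.

```python
import mmap
import os
import struct
import tempfile
from typing import Iterable, Tuple

# Hypothetical fixed-size record: timestamp, robot id, x, y, z (little-endian, 24 bytes).
RECORD = struct.Struct("<dIfff")


def write_log(path: str, records: Iterable[Tuple[float, int, float, float, float]]) -> None:
    with open(path, "wb") as f:
        for rec in records:
            f.write(RECORD.pack(*rec))


def read_record(path: str, index: int) -> Tuple[float, int, float, float, float]:
    """Fetch record `index` by offset arithmetic; the OS pages in only what is touched."""
    with open(path, "rb") as f, mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
        offset = index * RECORD.size
        if index < 0 or offset + RECORD.size > len(mm):
            raise IndexError(index)
        return RECORD.unpack_from(mm, offset)


with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "robot_log.bin")
    write_log(path, ((float(i), i % 3, i * 0.5, 0.0, 1.0) for i in range(1000)))
    print(read_record(path, 777))
```

Fixed-size records trade some storage flexibility for constant-time lookup; variable-size data (such as images) would typically need a separate offset index.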

Additionally, FlagScale, as the underlying support framework, supports parallel inference across multiple devices and multi-task collaborative scheduling for large models. It can seamlessly integrate subsystems such as vision-language models, trajectory generation modules, and perception recognition systems, fully unleashing the systemic potential of embodied large models.

Currently, leveraging its advantages in multimodal large model technology resources, BAAI is collaborating with universities and research institutes such as Peking University, Tsinghua University, and the Chinese Academy of Sciences, as well as industry chain enterprises like Galbot, Leju, Accelerate Evolution, and Unitree, to actively build an embodied AI innovation platform. Key research focuses include data, models, and scenario validation.

The release of the cross-embodiment cerebellum-cerebrum collaboration framework RoboOS and the open-source embodied brain RoboBrain by BAAI will organically integrate and broadly connect robot embodiments of different configurations with diverse embodied models, accelerating cross-embodiment collaboration and large-scale applications of embodied AI.

Openness, collaboration, and sharing are the inevitable path to a prosperous embodied AI ecosystem. BAAI looks forward to working with more industry partners to jointly chart the blueprint for this ecosystem.

Open Source Links:

Embodied Multimodal Brain Model RoboBrain

Github: [http

High-quality heterogeneous dataset ShareRobot designed for robotic manipulation tasks

GitHub: https://github.com/FlagOpen/ShareRobot

Gitee: https://gitee.com/flagopen/share-robot

Huggingface: https://huggingface.co/datasets/BAAI/ShareRobot