Ilya’s secretly compiled dossier of confidential OpenAI documents has just been exposed.

70 pages of internal evidence, 200+ pages of private notes, secretly filmed videos, and messages that bypassed company systems and self-destructed after delivery, all culminating in a direct, devastating blow:

Sam Altman is a pathological liar!!! (Loud.jpg)

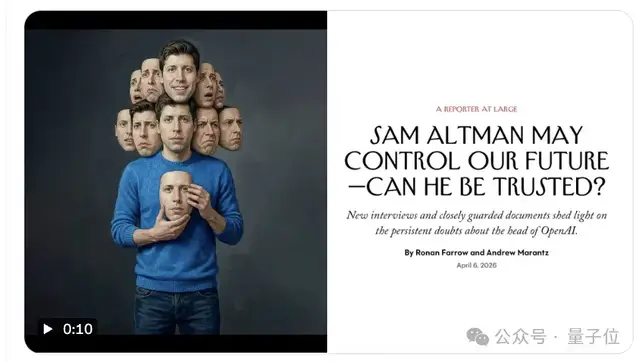

The New Yorker has just revealed the details of this incident. The content is nothing short of dramatic:

- From OpenAI’s board to Microsoft’s senior executives, spanning 20 years and multiple organizations, the core assessment of Altman by almost everyone was that he is pathologically dishonest.

- OpenAI’s non-profit mission requires its CEO to possess integrity. However, Ilya later discovered that Altman lacked precisely this quality and, indeed, embodied its opposite…

- Within five days of being fired, Altman set up a war room in his mansion and struck back on three fronts: capital, employees, and public opinion. In the end, the board could not hold out!

- After this internal power struggle, instead of reining himself in, Altman accelerated the collapse of safety commitments and marginalized the Superalignment team.

Who would have thought? Just when Silicon Valley’s own Empresses in the Palace drama seemed to be over, the love-hate saga between Altman and Ilya has dropped a new episode.

Why did OpenAI’s Chief Scientist use spy-thriller tactics to gather evidence of the CEO’s misconduct?

What exactly was written in this memorandum that left board members recalling only three words when asked: “He was terrified”?

Grab some snacks, everyone. Let’s dig in…

(It’s too exciting, too exciting, too exciting…)

Ilya Exposes 70 Pages of OpenAI Secrets!

Sam Altman is a Pathological Liar

It must be said that from the very beginning, OpenAI set a threshold for itself with almost no room for error.

The 70-page confidential memorandum mentions that OpenAI was designed from its inception as an organization with maximum safety protocols:

A non-profit structure where the board is primarily accountable to humanity, and in extreme cases, can even sacrifice the company itself.

Because of this public interest nature, the requirements for the CEO were equally special: he had to be very, very, very, very trustworthy.

As it happened, that was precisely where the problem lay.

In 2019, Ilya officiated Greg Brockman’s wedding at OpenAI’s office and even arranged for a robotic hand to serve as the ring bearer.

At that time, technology, ideals, and interpersonal trust seemed unified. But as model capabilities approached critical thresholds, he began to rethink the same question:

If this technology is truly built, who will control it? His answer became increasingly clear, eventually boiling down to a single judgment:

“I don’t think Altman is the person who can make the final call for OpenAI.”

The reasons—

Ilya didn’t doubt Altman’s competence. What he doubted was the man’s character…

Thus, Ilya’s subsequent actions were less like internal discussions at a tech company and more like what we see now:

70 pages of materials systematically compiled, including Slack records and internal documents; key content photographed with mobile phones to deliberately avoid company devices; then sent to the board via messages that automatically disappear.

A director who received the materials later put his reaction to this big reveal bluntly: I was genuinely terrified…

Five Days of Palace Intrigue: From F1 Tracks to a Mansion War Room

In Ilya’s 70-page confidential memorandum, the palace intrigue incident at OpenAI is retold more specifically and dramatically.

Moreover, the beginning of this matter itself carried a touch of absurdity:

Altman was in Las Vegas watching F1 when he was called away by a video call. On the other end, Ilya read an extremely brief statement:

He (Altman) is no longer the CEO of OpenAI.

No buildup, no disclosure of evidence. Even more absurdly, core investors like Microsoft’s Reid Hoffman were completely unaware beforehand.

(At one point, people even thought it was a major scandal involving embezzlement, but nothing was found??)

Hearing the news that he had been “executed on the spot,” Altman immediately set up a temporary command center in his mansion that night, themed around “Saving Altman.”


Altman’s goal was to rapidly advance three things:

First, capital pressure.

Thrive froze key investments. Microsoft stated directly that they could start over: You can replace the CEO, but OpenAI could also cease to exist from then on.

Second, employee alignment.

A joint letter spread quickly and was signed by almost everyone. Even Murati, who had been named interim CEO and initially stood with the board, eventually sided with Altman and signed the letter supporting his return.

Third, control of public opinion.

The board chose silence, while Altman’s side continuously released information, controlling the narrative.

The result? Ilya ultimately had to concede: If Altman didn’t return, the company might collapse entirely.

Five days later, the outcome was settled—Altman returned to power, and the board was ousted.

Even OpenAI employees gave this classic Silicon Valley palace intrigue reversal a name—The Blip (meaning “a brief interlude” or “temporary malfunction”).

After The Blip, Safety Commitments Began to Collapse

After The Blip ended, the changes at OpenAI did not stop at who held power. The mechanisms meant to “limit power” began to loosen as well.

The first thing to go wrong was resources.

OpenAI had publicly promised to allocate 20% of its computing power to long-term safety research, which was its flagship safety commitment at the time.

But execution quickly soured: the share of computing power dropped to 1%-2%, and it came from the oldest clusters with the worst chips.

When Jan Leike, who co-led the Superalignment team, raised objections, he was told: This was unrealistic from the start.

Safety went from a priority to a symbol, and it began to lose real investment.

The second step was that processes began to be bypassed.

At OpenAI, releases involving high-risk capabilities normally required review by the safety committee.

But in subsequent product iterations, some of the most controversial features went live without completing the full approval process. Even regional versions of products were released before safety assessments were completed.

(Whether there’s a process or not seems less important now…)

The third step was that investigations lost their binding force.

In fact, after the board turmoil, OpenAI hired the law firm “WilmerHale,” which had participated in investigating multiple corporate scandals, to conduct an independent investigation.

According to this firm’s normal workflow and general external expectations, there should have been a formal, traceable written report on OpenAI’s affairs.

But the final result was—no report. (doge)

The investigation’s conclusions were reported orally to the new board. The suggestion not to write a report came from none other than the personal lawyer of a director chosen by Altman.

Three things—first cut resources, then loosen processes, and finally weaken investigations.

Each step looked like a minor adjustment, but when the system can bypass restrictions, the restrictions themselves cease to exist.

Did Someone See Through Sam Altman 20 Years Ago?

To be honest, the pile of mixed reviews surrounding Altman did not first appear at OpenAI.

In fact, spanning 20 years and multiple organizations, the core assessment of Altman is surprisingly consistent:

This guy never tells the truth, and his conduct is way out of line. (doge)

The earliest instance can be traced back to 2013.

At that time, the late genius programmer Aaron Swartz gave friends an extremely harsh judgment of Altman in private conversations:

You must understand, this man can never be trusted. He has antisocial personality traits and will do anything to achieve his goals.

Problems had already emerged at his first startup.

Internal employees twice pushed the board to replace him, requiring investors to personally step in and watch over him within the company.

Later, as he entered more core entrepreneurial and investment circles, the problems escalated—

From internal conflicts to a crisis of trust.

An insider later said outright that Altman had continually concealed things: for example, the wording of public announcements kept changing, and job descriptions were altered repeatedly and eventually deleted altogether.

Yet in official regulatory filings, he was still listed in his original position??

In other words, the surface changed, but the underlying relationships did not change with it.

These scattered reputations from different stages of his career finally converged and blew up at OpenAI:

Board members’ verdict was: He is unconstrained by facts. Altman was even described as lacking any basic concern for the consequences of deception.

Another executive put it this way: In the future, he might be mentioned in the same breath as figures involved in major commercial scandals.

There was also an unlikely diarist: Dario Amodei, who later became Anthropic’s CEO, left over 200 pages of notes containing extensive evaluations of OpenAI and of Altman himself.

Although not particularly close friends with Ilya, Amodei reached the same conclusion—

That is, OpenAI’s many problems lay not in the company’s structure itself, but in Altman personally.

Amodei’s wording was even harsher: what Altman says is almost certainly nonsense!!!

How to put it? Different sources, different stages, but all pointing in the same direction.

More curiously, despite so many negative reviews, Altman remained unbowed. When questioned, he simply said:

Sorry, I can’t change my personality~ (What an awesome mental state.)

Finally, Ilya’s 70-page confidential memorandum contains one more detail worth noting:

In OpenAI’s office area, the slogan “Feel the AGI” was everywhere.

This phrase was originally used by Ilya to warn colleagues about AGI risks. But after The Blip palace coup, it became a slogan celebrating the future.

(This is somewhat of a dark joke, isn’t it? Ilya: Me???)

One More Thing

Folks, this gossip isn’t over yet.

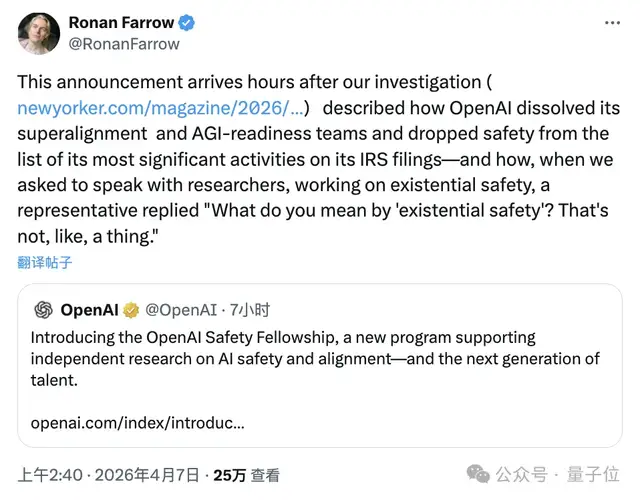

Just now, The New Yorker journalist who exposed this memorandum stated on X that when they tried to interview researchers studying “AI existential risk,” OpenAI’s official response was:

What do you mean by existential safety? That thing doesn’t exist~

But still, it has to be OpenAI. Words are one thing, and actions are quite another:

Just a few hours after this investigative report was published, OpenAI coincidentally launched a project supporting safety research. (doge)

It has to be OpenAI. It has to be Altman.

(Ilya: Now you know I’m telling the truth, right? This Altman really says one thing and does another??)