Resignation Sparks Outcry: Mistral Accused of “Distilling” DeepSeek

A former female employee of Mistral AI has sent a mass email to colleagues, exposing what she describes as a number of questionable practices inside the company.

The most explosive allegation is that Mistral’s latest model appears to have been distilled directly from DeepSeek, yet was marketed externally as a reinforcement learning (RL) success story, with benchmark results allegedly misrepresented.

Mistral has long been hailed as “Europe’s OpenAI” and remains one of the world’s leading open-source AI companies, with models that are consistently praised for their performance.

It is precisely because of this strong reputation that the allegations have caused such a stir.

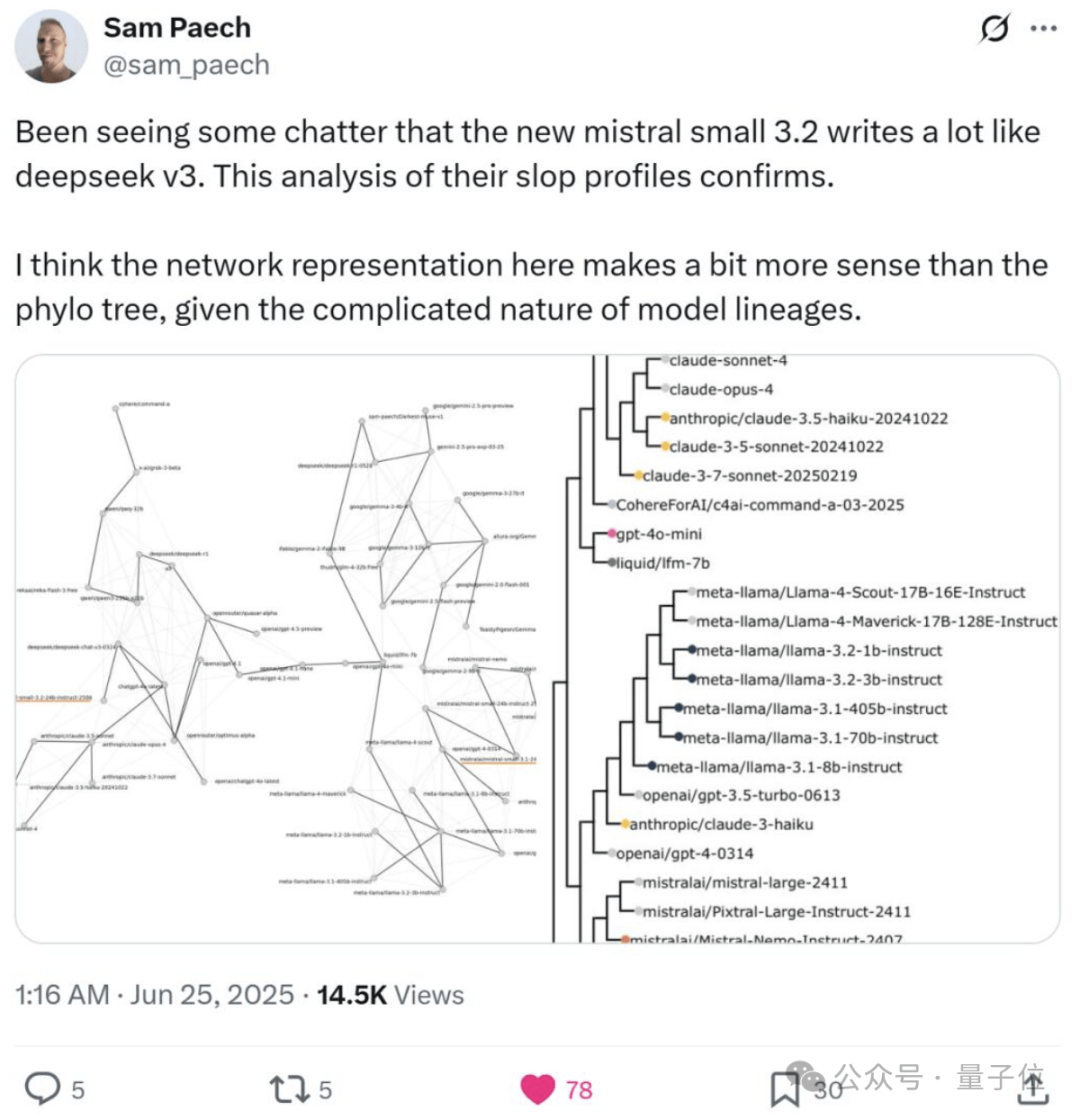

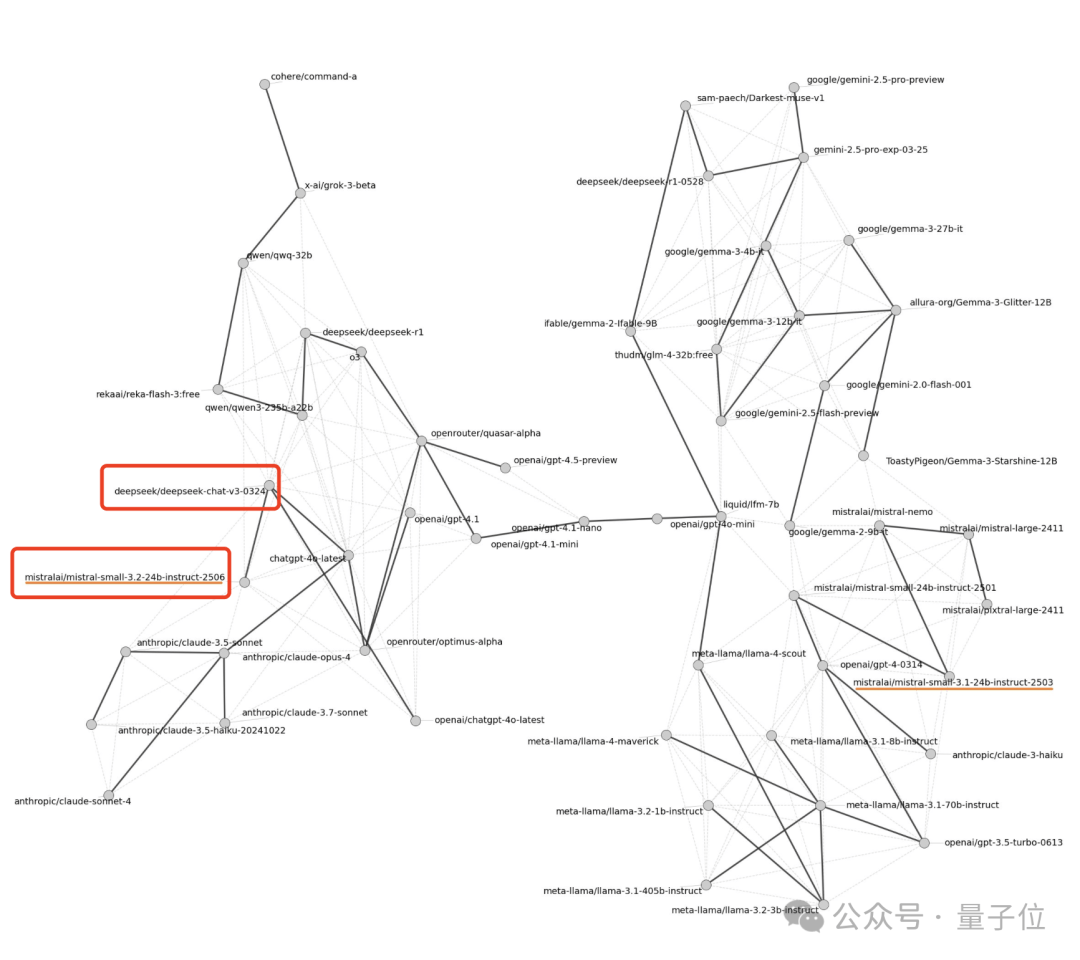

In June this year, a blogger analyzing “language fingerprints” found striking similarities between Mistral-small-3.2 and DeepSeek-v3.

Interestingly, back in February, netizens had jokingly called DeepSeek “China’s Mistral.”

Six months later, the tables have turned: not only has Mistral failed to outperform DeepSeek, it now stands accused of “borrowing” DeepSeek’s achievements.

It is a classic boomerang, one fitted with GPS: it circled back and struck its thrower squarely.

Solid Evidence That Mistral Distilled DeepSeek

As mentioned at the beginning, Twitter blogger Sam Paech discovered surprising similarities between Mistral-small-3.2 and DeepSeek-v3 by analyzing overused vocabulary patterns (“slop”) in the models’ outputs.

Similarity this strong rarely arises by chance from independent training; it strongly suggests distillation, meaning that Mistral-small-3.2 “learned” the output style of DeepSeek-v3.
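For readers unfamiliar with the technique: distillation trains a “student” model to imitate a “teacher.” One classic form has the student match the teacher’s softened probability distribution over tokens; another, sequence-level distillation, simply fine-tunes the student on text the teacher generated, which is how a teacher’s stylistic tics can carry over. The minimal sketch that follows, using only the Python standard library, computes the soft-label loss for a single token position. The function names and the temperature value are illustrative assumptions, and nothing here describes what Mistral actually did.

```python
import math
from typing import Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> list[float]:
    """Temperature-scaled softmax, shifted by the max logit for numerical stability."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: Sequence[float],
                      teacher_logits: Sequence[float],
                      temperature: float = 2.0) -> float:
    """Generic soft-label distillation loss (illustrative, not any lab's pipeline):
    KL(teacher || student) on temperature-softened distributions, scaled by T^2."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student) if pt > 0)
    return kl * temperature ** 2


teacher = [1.0, 2.0, 3.0]
print(distillation_loss([1.0, 2.0, 3.0], teacher))  # identical distributions give 0.0
print(distillation_loss([3.0, 2.0, 1.0], teacher))  # a mismatched student is penalized
```

Minimizing this loss over many tokens pushes the student’s word choices toward the teacher’s, which is why heavy distillation can leave a recognizable stylistic fingerprint.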

Specifically, Sam Paech conducted his analysis as follows:

- He first counted the words and n-grams (sequences of words) in the models’ creative writing outputs that appear more often than they do in human-written text.

- He then aggregated these over-represented terms into a feature set.

- Finally, he performed hierarchical clustering on these high-frequency features to generate a “similarity map.”
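The three steps above can be sketched in Python roughly as follows. This is a toy reconstruction under stated assumptions, not Sam Paech’s actual code: the smoothed frequency-ratio score, the `top_k` cutoff, Jaccard distance between fingerprints, and average-linkage clustering are all illustrative choices, and every helper name is hypothetical. It uses only the standard library.

```python
import re
from collections import Counter
from itertools import combinations


def ngrams(text, n_max=2):
    """Count all word n-grams of length 1..n_max in a lowercased text."""
    words = re.findall(r"[a-z']+", text.lower())
    counts = Counter()
    for n in range(1, n_max + 1):
        for i in range(len(words) - n + 1):
            counts[" ".join(words[i:i + n])] += 1
    return counts


def slop_profile(model_text, human_counts, top_k=20, min_count=2):
    """Step 1-2 (assumed scoring): keep the top_k n-grams whose frequency in model
    output most exceeds their smoothed frequency in human text."""
    model_counts = ngrams(model_text)
    m_total = sum(model_counts.values())
    h_total = sum(human_counts.values())
    scores = {}
    for gram, c in model_counts.items():
        if c < min_count:
            continue
        human_freq = (human_counts[gram] + 1) / (h_total + 1)  # smoothing avoids division by zero
        scores[gram] = (c / m_total) / human_freq
    return set(sorted(scores, key=scores.get, reverse=True)[:top_k])


def jaccard_distance(a, b):
    """1 - |A & B| / |A | B|; 0.0 means identical fingerprints."""
    if not a and not b:
        return 0.0
    return 1 - len(a & b) / len(a | b)


def average_linkage(profiles):
    """Step 3 (assumed linkage): agglomerative clustering that repeatedly merges the
    two clusters with the smallest mean pairwise distance; returns the merge history."""
    clusters = {name: [name] for name in profiles}
    merges = []
    while len(clusters) > 1:
        best = None
        for x, y in combinations(clusters, 2):
            pairs = [(i, j) for i in clusters[x] for j in clusters[y]]
            d = sum(jaccard_distance(profiles[i], profiles[j]) for i, j in pairs) / len(pairs)
            if best is None or d < best[0]:
                best = (d, x, y)
        d, x, y = best
        clusters[f"({x}+{y})"] = clusters.pop(x) + clusters.pop(y)
        merges.append((x, y, round(d, 3)))
    return merges


# Toy data (hypothetical): two "models" share stock phrases, a third does not.
human = ngrams("the rain fell on the old town and she walked home slowly " * 5)
texts = {
    "model_a": "a tapestry of whispers, a testament to resilience. " * 5,
    "model_b": "a tapestry of whispers, a testament to courage. " * 5,
    "model_c": "the engine roared and the crowd cheered loudly. " * 5,
}
profiles = {name: slop_profile(t, human) for name, t in texts.items()}
merges = average_linkage(profiles)
for step in merges:
    print(step)
```

On these toy inputs, `model_a` and `model_b`, which share most of their stock phrases, merge first, just as two models with similar “slop” would sit close together on a similarity map. A real analysis would need far larger samples of model outputs and human text.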

Comparing the models’ positions on this map shows that Mistral-small-3.2 and DeepSeek-v3 sit very close together, indicating highly similar output patterns.

The latest allegations further indicate that the similarity between Mistral models and DeepSeek is not a coincidence but likely the result of distillation.

Because the whistleblower, Susan Zhang, has set her Twitter account to private, more details remain unavailable for now.

However, it should be noted that distillation itself is not an illicit practice; many models currently use this method to rapidly enhance their capabilities.

The issue with Mistral lies in the potential concealment of this fact.

According to the former employee, Mistral did this to falsely claim that its own reinforcement learning work was effective, which not only distorted benchmark results but also misled the public.

Many agree with this view: distilled models must be clearly labeled, and transparency is key.

Some netizens also pointed out that distillation offers a genuine shortcut for model development, sparing developers from reinventing the wheel.

No Official Response Yet

The incident has proven highly controversial, partly because of Mistral’s prominent standing in the open-source AI community.

Founded in 2023 and based in Paris, France, Mistral has been called “Europe’s OpenAI.” It was co-founded by Arthur Mensch, formerly of Google DeepMind, together with Guillaume Lample and Timothée Lacroix, both formerly of Meta.

In August this year, reports said Mistral’s valuation had reached $10 billion and that the company was raising a new $1 billion funding round.

In its previous round (June 2024), Mistral raised €600 million ($645 million) in a round led by General Catalyst, bringing its valuation to €5.8 billion ($6.2 billion). This ranked it fourth globally and first outside the San Francisco Bay Area.

Since its inception, Mistral has pursued an open-source strategy. Its releases this year include the lightweight Mistral Small and the programming-focused Mistral Code.

Compared with other mainstream large language models, Mistral’s emphasis on open source and on small, fast models makes it highly competitive in multilingual processing and reasoning, securing it a distinctive position in the market.

The company has also launched its own chatbot, Le Chat, to compete with ChatGPT; it offers a deep research mode, native multilingual reasoning, and advanced image editing.

As of now, Mistral has not responded officially. Just yesterday, it released a new model, Mistral Medium V3.1.

References

Sam Paech, post on X: x.com/sam_paech/status/1937786948380434780